Boundless Statistics for Organizations by Brad Griffith and Lisa Friesen is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License, except where otherwise noted.

Online Consortium of Oklahoma

Oklahoma City

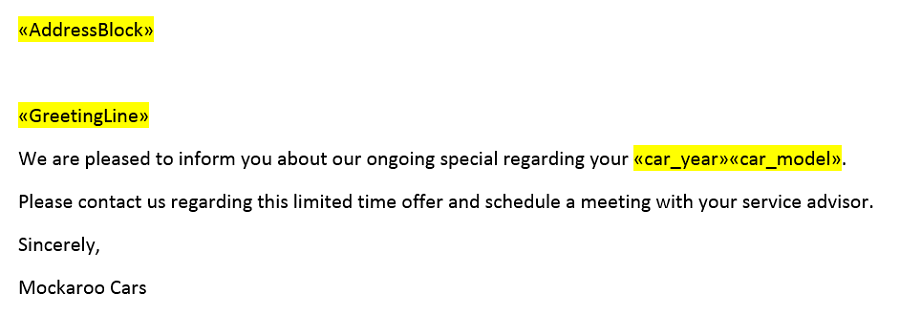

Boundless Statistics for Organizations is a 2021 adaptation of Boundless Statistics from Lumen Learning, customized for the Reach Higher Organizational Leadership program in Oklahoma. For more information, visit the Reach Higher website (click image below):

If you have suggestions for improvement or need to report an issue, please contact Brad Griffith (bgriffith@osrhe.edu).

There are four main levels of measurement: nominal, ordinal, interval, and ratio.

Distinguish between the nominal, ordinal, interval and ratio methods of data measurement.

An example of an observational study is one that explores the correlation between smoking and lung cancer. This type of study typically uses a survey to collect observations about the area of interest and then performs statistical analysis. In this case, the researchers would collect observations of both smokers and non-smokers, perhaps through a case-control study, and then look for the number of cases of lung cancer in each group.

There are four main levels of measurement used in statistics: nominal, ordinal, interval, and ratio. Each of these has a different degree of usefulness in statistical research. Data is collected about a population by random sampling.

Nominal measurements have no meaningful rank order among values. Nominal data differentiates between items or subjects based only on qualitative classifications they belong to. Examples include gender, nationality, ethnicity, language, genre, style, biological species, visual pattern, etc.

Defining a population

In applying statistics to a scientific, industrial, or societal problem, it is necessary to begin with a population or process to be studied. Populations can be diverse topics such as “all persons living in a country” or “all stamps produced in the year 1943”.

Ordinal measurements have imprecise differences between consecutive values, but have a meaningful order to those values. Ordinal data allows for rank order (1st, 2nd, 3rd, etc.) by which data can be sorted, but it still does not allow for a relative degree of difference between them. Examples of ordinal data include dichotomous values such as “sick” versus “healthy” when measuring health, “guilty” versus “innocent” when making judgments in courts, and “false” versus “true” when measuring truth value. Examples also include non-dichotomous data consisting of a spectrum of values, such as “completely agree”, “mostly agree”, “mostly disagree”, or “completely disagree” when measuring opinion.

Interval measurements have meaningful distances between measurements defined, but the zero value is arbitrary (as in the case with longitude and temperature measurements in Celsius or Fahrenheit). Interval data allows for the degree of difference between items, but not the ratio between them. Ratios are not allowed with interval data since 20°C cannot be said to be “twice as hot” as 10°C, nor can multiplication/division be carried out between any two dates directly. However, ratios of differences can be expressed; for example, one difference can be twice another. Interval type variables are sometimes also called “scaled variables”.
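As a small illustration of why ratios fail on an interval scale while ratios of differences survive, the sketch below expresses the same temperatures in different units. The function and variable names are illustrative assumptions, not part of the text; the conversion formulas are the standard ones.

```python
def celsius_to_fahrenheit(c):
    """Standard Celsius-to-Fahrenheit conversion."""
    return c * 9 / 5 + 32


def celsius_to_kelvin(c):
    """Kelvin has a true zero, so it behaves as a ratio scale."""
    return c + 273.15


low_c, mid_c, high_c = 10.0, 20.0, 30.0

# Ratio of raw readings: the answer depends on each scale's arbitrary zero.
ratio_celsius = mid_c / low_c
ratio_fahrenheit = celsius_to_fahrenheit(mid_c) / celsius_to_fahrenheit(low_c)
ratio_kelvin = celsius_to_kelvin(mid_c) / celsius_to_kelvin(low_c)

# Ratio of differences: identical in Celsius and Fahrenheit.
diff_ratio_celsius = (high_c - low_c) / (mid_c - low_c)
diff_ratio_fahrenheit = (
    celsius_to_fahrenheit(high_c) - celsius_to_fahrenheit(low_c)
) / (celsius_to_fahrenheit(mid_c) - celsius_to_fahrenheit(low_c))

print("raw ratios:", ratio_celsius, ratio_fahrenheit, ratio_kelvin)
print("difference ratios:", diff_ratio_celsius, diff_ratio_fahrenheit)
```

The raw ratio changes with the unit chosen, so "twice as hot" has no fixed meaning in Celsius or Fahrenheit, whereas the ratio of two temperature differences is the same in both.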

Ratio measurements have both a meaningful zero value and the distances between different measurements are defined; they provide the greatest flexibility in statistical methods that can be used for analyzing the data.

Because variables conforming only to nominal or ordinal measurements cannot be reasonably measured numerically, sometimes they are grouped together as categorical variables, whereas ratio and interval measurements are grouped together as quantitative variables, which can be either discrete or continuous, due to their numerical nature.

Measurement processes that generate statistical data are also subject to error. Many of these errors are classified as random (noise) or systematic (bias), but other types of error (e.g., blunders, such as when an analyst reports incorrect units) can also be important.

Statistics is the study of the collection, organization, analysis, interpretation, and presentation of data.

Define the field of Statistics in terms of its definition, application and history.

Say you want to conduct a poll on whether your school should use its funding to build a new athletic complex or a new library. Appropriate questions to ask would include: How many people do you have to poll? How do you ensure that your poll is free of bias? How do you interpret your results?

Statistics is the study of the collection, organization, analysis, interpretation, and presentation of data. It deals with all aspects of data, including the planning of its collection in terms of the design of surveys and experiments. Some consider statistics a mathematical body of science that pertains to the collection, analysis, interpretation or explanation, and presentation of data, while others consider it a branch of mathematics concerned with collecting and interpreting data. Because of its empirical roots and its focus on applications, statistics is usually considered a distinct mathematical science rather than a branch of mathematics. As one would expect, statistics is largely grounded in mathematics, and the study of statistics has lent itself to many major concepts in mathematics, such as:

However, much of statistics is also non-mathematical. This includes:

In short, statistics is the study of data. It includes descriptive statistics (the study of methods and tools for collecting data, and mathematical models to describe and interpret data) and inferential statistics (the systems and techniques for making probability-based decisions and accurate predictions based on incomplete data).

A statistician is someone who is particularly well-versed in the ways of thinking necessary to successfully apply statistical analysis. Such people often gain experience through working in any of a wide number of fields. Statisticians improve data quality by developing specific experimental designs and survey samples. Statistics itself also provides tools for predicting and forecasting the use of data and statistical models. Statistics is applicable to a wide variety of academic disciplines, including natural and social sciences, government, and business. Statistical consultants can help organizations and companies that don’t have in-house expertise relevant to their particular questions.

Statistical methods date back at least to the 5th century BC. The earliest known writing on statistics appears in a 9th century book entitled Manuscript on Deciphering Cryptographic Messages, written by Al-Kindi. In this book, Al-Kindi provides a detailed description of how to use statistics and frequency analysis to decipher encrypted messages. This was the birth of both statistics and cryptanalysis, according to the Saudi engineer Ibrahim Al-Kadi.

The Nuova Cronica, a 14th century history of Florence by the Florentine banker and official Giovanni Villani, includes much statistical information on population, ordinances, commerce, education, and religious facilities, and has been described as the first introduction of statistics as a positive element in history.

Some scholars pinpoint the origin of statistics to 1663, with the publication of Natural and Political Observations upon the Bills of Mortality by John Graunt. Early applications of statistical thinking revolved around the needs of states to base policy on demographic and economic data, hence its “stat-” etymology. The scope of the discipline of statistics broadened in the early 19th century to include the collection and analysis of data in general.

Statistics teaches people to use a limited sample to make intelligent and accurate conclusions about a greater population.

Describe how Statistics helps us to make inferences about a population, understand and interpret variation, and make more informed everyday decisions.

A company selling the cat food brand “Cato” (a fictitious name here), may claim quite truthfully in their advertisements that eight out of ten cat owners said that their cats preferred Cato brand cat food to “the other leading brand” cat food. What they may not mention is that the cat owners questioned were those they found in a supermarket buying Cato, which doesn’t represent an unbiased sample of cat owners.

Imagine reading a book for the first few chapters and then being able to get a sense of what the ending will be like. This ability is provided by the field of inferential statistics. With the appropriate tools and solid grounding in the field, one can use a limited sample (e.g., reading the first five chapters of Pride & Prejudice) to make intelligent and accurate statements about the population (e.g., predicting the ending of Pride & Prejudice).

Those proceeding to higher education will learn that statistics is an extremely powerful tool available for assessing the significance of experimental data and for drawing the right conclusions from the vast amounts of data encountered by engineers, scientists, sociologists, and other professionals in most spheres of learning. There is no study with scientific, clinical, social, health, environmental or political goals that does not rely on statistical methodologies. The most essential reason for this fact is that variation is ubiquitous in nature, and probability and statistics are the fields that allow us to study, understand, model, embrace and interpret this variation.

In today’s information-overloaded age, statistics is one of the most useful subjects anyone can learn. Newspapers are filled with statistical data, and anyone who is ignorant of statistics is at risk of being seriously misled about important real-life decisions such as what to eat, who is leading the polls, how dangerous smoking is, et cetera. Statistics are often used by politicians, advertisers, and others to twist the truth for their own gain. Knowing at least a little about the field of statistics will help one to make more informed decisions about these and other important questions.

The mathematical procedure in which we make intelligent guesses about a population based on a sample is called inferential statistics.

Discuss how inferential statistics allows us to draw conclusions about a population from a random sample and corresponding tests of significance.

In statistics, statistical inference is the process of drawing conclusions from data that is subject to random variation–for example, observational errors or sampling variation. More substantially, the terms statistical inference, statistical induction, and inferential statistics are used to describe systems of procedures that can be used to draw conclusions from data sets arising from systems affected by random variation, such as observational errors, random sampling, or random experimentation. Initial requirements of such a system of procedures for inference and induction are that the system should produce reasonable answers when applied to well-defined situations and that it should be general enough to be applied across a range of situations.

The outcome of statistical inference may be an answer to the question “what should be done next?” where this might be a decision about making further experiments or surveys, or about drawing a conclusion before implementing some organizational or governmental policy.

Suppose you have been hired by the National Election Commission to examine how the American people feel about the fairness of the voting procedures in the U.S. How will you do it? Who will you ask?

It is not practical to ask every single American how he or she feels about the fairness of the voting procedures. Instead, we query a relatively small number of Americans, and draw inferences about the entire country from their responses. The Americans actually queried constitute our sample of the larger population of all Americans. The mathematical procedures whereby we convert information about the sample into intelligent guesses about the population fall under the rubric of inferential statistics.

In the case of voting attitudes, we would sample a few thousand Americans, drawn from the hundreds of millions that make up the country. In choosing a sample, it is therefore crucial that it be representative. It must not over-represent one kind of citizen at the expense of others. For example, something would be wrong with our sample if it happened to be made up entirely of Florida residents. If the sample held only Floridians, it could not be used to infer the attitudes of other Americans. The same problem would arise if the sample were composed only of Republicans. Inferential statistics are based on the assumption that sampling is random. We trust a random sample to represent different segments of society in close to the appropriate proportions (provided the sample is large enough).
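The contrast between a random sample and an unrepresentative one can be sketched with a small simulation. Everything here is a made-up assumption for illustration: the population size, the 30% share of region "A", the sample size, and the helper name `share_of_a`.

```python
import random

rng = random.Random(2021)  # fixed seed so the sketch is repeatable

# Hypothetical population: 30% of 100,000 people live in region "A".
population = ["A"] * 30_000 + ["B"] * 70_000


def share_of_a(people):
    """Fraction of the given group that belongs to region 'A'."""
    return sum(1 for person in people if person == "A") / len(people)


# Simple random sample: every person is equally likely to be drawn.
random_sample = rng.sample(population, 2_000)

# Unrepresentative sample: the first 2,000 records, all from region "A".
convenience_sample = population[:2_000]

print("population share:", share_of_a(population))
print("random sample share:", share_of_a(random_sample))
print("convenience sample share:", share_of_a(convenience_sample))
```

The random sample's share lands close to the population's 30%, while the sample drawn from a single region says nothing useful about the whole.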

Furthermore, when generalizing a trend found in a sample to the larger population, statisticians use tests of significance (such as the chi-square test or the t-test). These tests estimate the probability of seeing a result at least as extreme as the one observed if chance alone were at work; when that probability is high, the trend may not be representative of the entire population.
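To make the idea of a significance test concrete, here is a minimal sketch of Pearson's chi-square statistic for a table of counts, written with the standard library only. The counts are invented for illustration, the function name is an assumption, and the comparison uses the usual 5% critical value for one degree of freedom (3.841) instead of computing an exact probability.

```python
def chi_square_statistic(table):
    """Pearson chi-square statistic for a table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed_count in enumerate(row):
            # Count expected in this cell if rows and columns were independent.
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed_count - expected) ** 2 / expected
    return statistic


# Hypothetical poll: rows are two regions, columns are ("fair", "unfair").
observed = [[60, 40], [45, 55]]
chi2 = chi_square_statistic(observed)

# 5% critical value of the chi-square distribution with 1 degree of freedom.
CRITICAL_VALUE_DF1_ALPHA_05 = 3.841
significant = chi2 > CRITICAL_VALUE_DF1_ALPHA_05

print("chi-square statistic:", round(chi2, 4))
print("exceeds 5% critical value:", significant)
```

A 2x2 table has one degree of freedom, which is why that critical value applies here. No continuity correction is applied in this sketch.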

Data can be categorized as either primary or secondary and as either qualitative or quantitative.

Differentiate between primary and secondary data and qualitative and quantitative data.

Examples

Qualitative data: race, religion, gender, etc. Quantitative data: height in inches, time in seconds, temperature in degrees, etc.

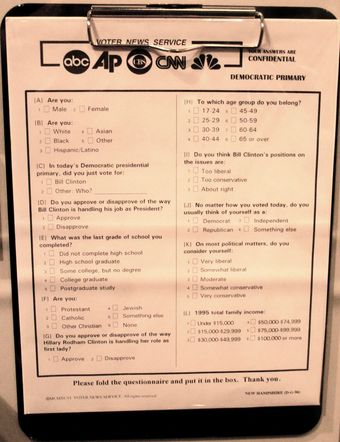

Data can be classified as either primary or secondary. Primary data is original data that has been collected specially for the purpose in mind. This type of data is collected first hand. Those who gather primary data may be an authorized organization, investigator, enumerator or just someone with a clipboard. These people are acting as a witness, so primary data is only considered as reliable as the people who gather it. Research where one gathers this kind of data is referred to as field research. An example of primary data is conducting your own questionnaire.

Secondary data is data that has been collected for another purpose. This type of data is reused, usually in a different context from its first use. You are not the original source of the data–rather, you are collecting it from elsewhere. An example of secondary data is using numbers and information found inside a textbook.

Knowing how the data was collected allows critics of a study to search for bias in how it was conducted. A good study will welcome such scrutiny. Each type has its own weaknesses and strengths. Primary data is gathered by people who can focus directly on the purpose in mind. This helps ensure that questions are meaningful to the purpose, but this can introduce bias in those same questions. Secondary data doesn’t have the privilege of this focus, but is only susceptible to bias introduced in the choice of what data to reuse. Stated another way, those who gather secondary data get to pick the questions. Those who gather primary data get to write the questions. There may be bias either way.

Qualitative data is a categorical measurement expressed not in terms of numbers, but rather by means of a natural language description. In statistics, it is often used interchangeably with “categorical” data. Collecting information about a favorite color is an example of collecting qualitative data. Although we may have categories, the categories may have a structure to them. When there is not a natural ordering of the categories, we call these nominal categories. Examples might be gender, race, religion, or sport. When the categories may be ordered, these are called ordinal categories. Categorical data that judge size (small, medium, large, etc.) are ordinal categories. Attitudes (strongly disagree, disagree, neutral, agree, strongly agree) are also ordinal categories; however, we may not know which end of the scale counts as best or worst for a given issue. Note that the distance between these categories is not something we can measure.

Quantitative data is a numerical measurement expressed not by means of a natural language description, but rather in terms of numbers. Quantitative data is always associated with a scale measure. Probably the most common scale type is the ratio scale. Observations of this type are on a scale that has a meaningful zero value and an equidistant measure (i.e., the difference between 10 and 20 is the same as the difference between 100 and 110). For example, a 10-year-old girl is twice as old as a 5-year-old girl. Since you can measure zero years, time is a ratio-scale variable. Money is another common ratio-scale quantitative measure. Observations that you count are usually ratio-scale (e.g., number of widgets). A more general quantitative measure is the interval scale. Interval scales also have an equidistant measure. However, the doubling principle breaks down in this scale. A temperature of 50 degrees Celsius is not “half as hot” as a temperature of 100 degrees Celsius, but a difference of 10 degrees indicates the same difference in temperature anywhere along the scale.

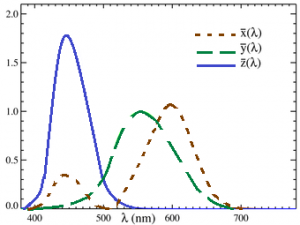

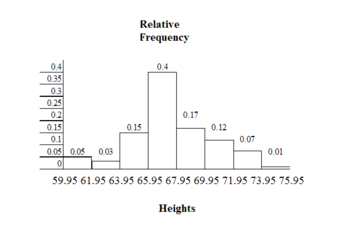

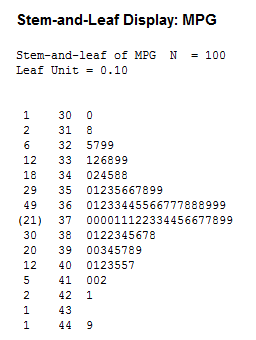

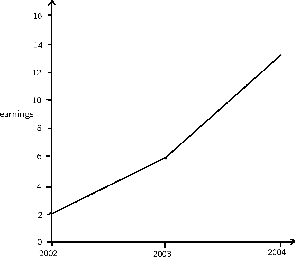

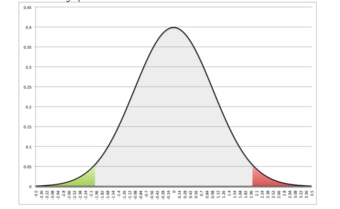

Quantitative Data: The graph shows a display of quantitative data.

Statistics deals with all aspects of the collection, organization, analysis, interpretation, and presentation of data.

Describe how statistics is applied to scientific, industrial, and societal problems.

In calculating the arithmetic mean of a sample, for example, the algorithm works by summing all the data values observed in the sample and then dividing this sum by the number of data items. This single measure, the mean of the sample, is called a statistic; its value is frequently used as an estimate of the mean value of all items comprising the population from which the sample is drawn. The population mean is also a single measure; however, it is not called a statistic; instead it is called a population parameter.
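The distinction between a statistic and a parameter can be sketched in a few lines. The population below is simulated, and its size, mean, spread, and the sample size are all arbitrary assumptions chosen for illustration; `arithmetic_mean` is a hypothetical helper that follows the sum-then-divide procedure described above.

```python
import random


def arithmetic_mean(values):
    """Sum the observed values, then divide by how many there are."""
    return sum(values) / len(values)


rng = random.Random(7)  # fixed seed so the sketch is repeatable

# Hypothetical population of 10,000 measurements (e.g., heights in cm).
population = [rng.gauss(170, 10) for _ in range(10_000)]
population_mean = arithmetic_mean(population)  # a population parameter

# A sample of 100 items drawn at random from that population.
sample = rng.sample(population, 100)
sample_mean = arithmetic_mean(sample)  # a statistic

print("population mean (parameter):", round(population_mean, 2))
print("sample mean (statistic):", round(sample_mean, 2))
```

In practice the population mean is usually unknown, which is why the sample mean is used as its estimate; the simulation only makes both visible side by side.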

Statistics deals with all aspects of the collection, organization, analysis, interpretation, and presentation of data. It includes the planning of data collection in terms of the design of surveys and experiments.

Statistics can be used to improve data quality by developing specific experimental designs and survey samples. Statistics also provides tools for prediction and forecasting. Statistics is applicable to a wide variety of academic disciplines, including natural and social sciences as well as government and business. Statistical consultants can help organizations and companies that don’t have in-house expertise relevant to their particular questions.

Statistical methods can summarize or describe a collection of data. This is called descriptive statistics. This is particularly useful in communicating the results of experiments and research. Statistical models can also be used to draw statistical inferences about the process or population under study, a practice called inferential statistics. Inference is a vital element of scientific advancement, since it provides a way to draw conclusions from data that are subject to random variation. As part of the scientific method, these conclusions are then tested further in order to support or refute the propositions being investigated. Descriptive statistics and analysis of the new data tend to provide more information as to the truth of the proposition.

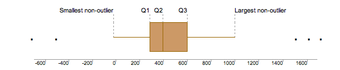

Summary statistics: In descriptive statistics, summary statistics are used to summarize a set of observations, in order to communicate the largest amount as simply as possible. This Boxplot represents Michelson and Morley’s data on the speed of light. It consists of five experiments, each made of 20 consecutive runs.

When applying statistics to a scientific, industrial, or societal problem, it is necessary to begin with a population or process to be studied. Populations can be diverse topics such as “all persons living in a country” or “every atom composing a crystal”. A population can also be composed of observations of a process at various times, with the data from each observation serving as a different member of the overall group. Data collected about this kind of “population” constitutes what is called a time series. For practical reasons, a chosen subset of the population called a sample is studied, as opposed to compiling data about the entire group (an operation called a census). Once a sample that is representative of the population is determined, data is collected for the sample members in an observational or experimental setting. This data can then be subjected to statistical analysis, serving two related purposes: description and inference.

Descriptive statistics summarize the population data by describing what was observed in the sample numerically or graphically. Numerical descriptors include mean and standard deviation for continuous data types (like heights or weights), while frequency and percentage are more useful in terms of describing categorical data (like race). Inferential statistics uses patterns in the sample data to draw inferences about the population represented, accounting for randomness. These inferences may take the form of: answering yes/no questions about the data (hypothesis testing), estimating numerical characteristics of the data (estimation), describing associations within the data (correlation) and modeling relationships within the data (for example, using regression analysis). Inference can extend to forecasting, prediction and estimation of unobserved values either in or associated with the population being studied. It can include extrapolation and interpolation of time series or spatial data and can also include data mining.
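As a brief sketch of the numerical descriptors just mentioned, the snippet below computes a mean and standard deviation for a continuous variable and a frequency and percentage table for a categorical one. The data values and variable names are invented for illustration.

```python
from collections import Counter
import statistics

# Hypothetical sample: one continuous and one categorical variable.
heights_cm = [160.0, 172.5, 168.0, 181.0, 175.5]
departments = ["sales", "ops", "sales", "hr", "sales"]

# Continuous data: mean and (sample) standard deviation.
mean_height = statistics.mean(heights_cm)
sd_height = statistics.stdev(heights_cm)

# Categorical data: frequency and percentage of each category.
counts = Counter(departments)
percentages = {
    category: 100 * count / len(departments)
    for category, count in counts.items()
}

print("mean height:", mean_height)
print("standard deviation:", round(sd_height, 2))
for category, count in counts.items():
    print(category, count, f"{percentages[category]:.0f}%")
```

Note that `statistics.stdev` is the sample standard deviation (dividing by n - 1); `statistics.pstdev` would be the choice if the values were the whole population.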

Statistical analysis of a data set often reveals that two variables of the population under consideration tend to vary together, as if they were connected. For example, a study of annual income that also looks at age of death might find that poor people tend to have shorter lives than affluent people. The two variables are said to be correlated; however, they may or may not be the cause of one another. The correlation could be caused by a third, previously unconsidered phenomenon, called a confounding variable. For this reason, there is no way to immediately infer the existence of a causal relationship between the two variables.

To use a sample as a guide to an entire population, it is important that it truly represent the overall population. Representative sampling assures that inferences and conclusions can safely extend from the sample to the population as a whole. A major problem lies in determining the extent that the sample chosen is actually representative. Statistics offers methods to estimate and correct for any random trending within the sample and data collection procedures. There are also methods of experimental design for experiments that can lessen these issues at the outset of a study, strengthening its capability to discern truths about the population. Randomness is studied using the mathematical discipline of probability theory. Probability is used in “mathematical statistics” (alternatively, “statistical theory”) to study the sampling distributions of sample statistics and, more generally, the properties of statistical procedures. The use of any statistical method is valid when the system or population under consideration satisfies the assumptions of the method.

In applying statistics to a scientific, industrial, or societal problem, it is necessary to begin with a population or process to be studied.

Recall that the field of Statistics involves using samples to make inferences about populations and describing how variables relate to each other.

In applying statistics to a scientific, industrial, or societal problem, it is necessary to begin with a population or process to be studied. Populations can be diverse topics such as “all persons living in a country” or “every atom composing a crystal”. A population can also be composed of observations of a process at various times, with the data from each observation serving as a different member of the overall group. Data collected about this kind of “population” constitutes what is called a time series.

For practical reasons, a chosen subset of the population called a sample is studied—as opposed to compiling data about the entire group (an operation called census). Once a sample that is representative of the population is determined, data is collected for the sample members in an observational or experimental setting. This data can then be subjected to statistical analysis, serving two related purposes: description and inference.

The concept of correlation is particularly noteworthy for the potential confusion it can cause. Statistical analysis of a data set often reveals that two variables (properties) of the population under consideration tend to vary together, as if they were connected. For example, a study of annual income that also looks at age of death might find that poor people tend to have shorter lives than affluent people. The two variables are said to be correlated; however, they may or may not be the cause of one another. The correlation could be caused by a third, previously unconsidered phenomenon, called a confounding variable. For this reason, there is no way to immediately infer the existence of a causal relationship between the two variables.
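A small simulation can show how a confounding variable produces correlation without causation. In this sketch, which is built entirely on assumed, artificial data, a hidden variable `z` drives both `x` and `y`, while `x` and `y` have no direct effect on each other. The helper `pearson_r` is a hand-written Pearson correlation coefficient.

```python
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(0)  # fixed seed so the sketch is repeatable

# z is the confounder; x and y each equal z plus independent noise.
z = [rng.gauss(0, 1) for _ in range(2_000)]
x = [zi + rng.gauss(0, 1) for zi in z]
y = [zi + rng.gauss(0, 1) for zi in z]

r_xy = pearson_r(x, y)

# Remove the confounder's contribution and correlate what is left.
x_without_z = [xi - zi for xi, zi in zip(x, z)]
y_without_z = [yi - zi for yi, zi in zip(y, z)]
r_after_removing_z = pearson_r(x_without_z, y_without_z)

print("correlation of x and y:", round(r_xy, 2))
print("correlation once z is removed:", round(r_after_removing_z, 2))
```

The first correlation is clearly positive even though neither variable influences the other; once the shared influence of `z` is subtracted, the association largely disappears. Real confounders are rarely known this exactly, which is why the text cautions against inferring causation directly.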

To use a sample as a guide to an entire population, it is important that it truly represent the overall population. Representative sampling assures that inferences and conclusions can safely extend from the sample to the population as a whole. A major problem lies in determining the extent that the sample chosen is actually representative. Statistics offers methods to estimate and correct for any random trending within the sample and data collection procedures. There are also methods of experimental design for experiments that can lessen these issues at the outset of a study, strengthening its capability to discern truths about the population.

Randomness is studied using the mathematical discipline of probability theory. Probability is used in “mathematical statistics” (alternatively, “statistical theory”) to study the sampling distributions of sample statistics and, more generally, the properties of statistical procedures. The use of any statistical method is valid when the system or population under consideration satisfies the assumptions of the method.

The essential skill of critical thinking will go a long way in helping one to develop statistical literacy.

Interpret the role that the process of critical thinking plays in statistical literacy.

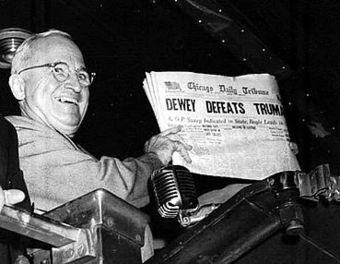

Each day people are inundated with statistical information from advertisements (“4 out of 5 dentists recommend”), news reports (“opinion polls show the incumbent leading by four points”), and even general conversation (“half the time I don’t know what you’re talking about”). Experts and advocates often use numerical claims to bolster their arguments, and statistical literacy is a necessary skill to help one decide what experts mean and which advocates to believe. This is important because statistics can be made to produce misrepresentations of data that may seem valid. The aim of statistical literacy is to improve the public understanding of numbers and figures.

For example, results of opinion polling are often cited by news organizations, but the quality of such polls varies considerably. Some understanding of the statistical technique of sampling is necessary in order to be able to correctly interpret polling results. Sample sizes may be too small to draw meaningful conclusions, and samples may be biased. The wording of a poll question may introduce a bias, and thus can even be used intentionally to produce a biased result. Good polls use unbiased techniques, with much time and effort being spent in the design of the questions and polling strategy. Statistical literacy is necessary to understand what makes a poll trustworthy and to properly weigh the value of poll results and conclusions.

The essential skill of critical thinking will go a long way in helping one to develop statistical literacy. Critical thinking is a way of deciding whether a claim is always true, sometimes true, partly true, or false. The list of core critical thinking skills includes observation, interpretation, analysis, inference, evaluation, explanation, and meta-cognition. There is a reasonable level of consensus that an individual or group engaged in strong critical thinking gives due consideration to establish:

Critical thinking calls for the ability to:

Critical Thinking

Critical thinking is an inherent part of data analysis and statistical literacy.

Experimental design is the design of studies where variation, which may or may not be under full control of the experimenter, is present.

Outline the methodology for designing experiments in terms of comparison, randomization, replication, blocking, orthogonality, and factorial experiments.

In general usage, design of experiments or experimental design is the design of any information-gathering exercises where variation is present, whether under the full control of the experimenter or not. Formal planned experimentation is often used in evaluating physical objects, chemical formulations, structures, components, and materials. In the design of experiments, the experimenter is often interested in the effect of some process or intervention (the “treatment”) on some objects (the “experimental units”), which may be people, parts of people, groups of people, plants, animals, etc. Design of experiments is thus a discipline that has very broad application across all the natural and social sciences and engineering.

A methodology for designing experiments was proposed by Ronald A. Fisher in his innovative works The Arrangement of Field Experiments (1926) and The Design of Experiments (1935). These methods have been broadly adapted in the physical and social sciences.

Old-fashioned scale

A scale is emblematic of the methodology of experimental design which includes comparison, replication, and factorial considerations.

It is best that a process be in reasonable statistical control prior to conducting designed experiments. When this is not possible, proper blocking, replication, and randomization allow for the careful conduct of designed experiments. To control for nuisance variables, researchers institute control checks as additional measures. Investigators should ensure that uncontrolled influences (e.g., source credibility perception) are measured so that they do not skew the findings of the study.

One of the most important requirements of experimental research designs is the necessity of eliminating the effects of spurious, intervening, and antecedent variables. In the most basic model, cause (X) leads to effect (Y). But there could be a third variable (Z) that influences Y, and X might not be the true cause at all. Z is said to be a spurious variable and must be controlled for. The same is true for intervening variables (a variable in between the supposed cause X and the effect Y), and antecedent variables (a variable prior to the supposed cause X that is the true cause). In most designs, only one of these causes is manipulated at a time.

An unbiased random selection of individuals is important so that in the long run, the sample represents the population.

Explain how simple random sampling leads to every object having the same possibility of being chosen.

Sampling is concerned with the selection of a subset of individuals from within a statistical population to estimate characteristics of the whole population. Two advantages of sampling are that the cost is lower and data collection is faster than measuring the entire population.

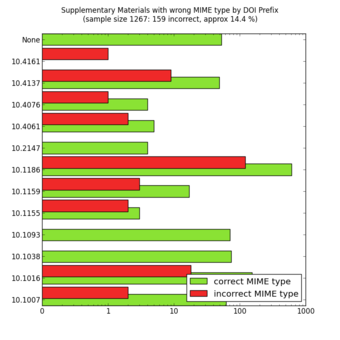

Random Sampling

MIME types of a random sample of supplementary materials from the Open Access subset in PubMed Central as of October 23, 2012. The colour code means that the MIME type of the supplementary files is indicated correctly (green) or incorrectly (red) in the XML at PubMed Central.

Each observation measures one or more properties (such as weight, location, color) of observable bodies distinguished as independent objects or individuals. In survey sampling, weights can be applied to the data to adjust for the sample design, particularly stratified sampling (blocking). Results from probability theory and statistical theory are employed to guide practice. In business and medical research, sampling is widely used for gathering information about a population.

A simple random sample is a subset of individuals chosen from a larger set (a population). Each individual is chosen randomly and entirely by chance, such that each individual has the same probability of being chosen at any stage during the sampling process and each subset of k individuals has the same probability of being chosen for the sample as any other subset of k individuals. A simple random sample is an unbiased surveying technique.
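The two equal-probability properties in this definition can be checked by brute force on a tiny population. The sketch below is only an illustration: the five-member population and the sample size of two are hypothetical values chosen so that every possible subset can be listed.

```python
from fractions import Fraction
from itertools import combinations

population = ["A", "B", "C", "D", "E"]  # hypothetical population, N = 5
k = 2                                    # hypothetical sample size

# Under simple random sampling, every subset of size k is equally likely.
subsets = list(combinations(population, k))
subset_probability = Fraction(1, len(subsets))

# Chance that a given individual ends up in the sample:
# (number of subsets containing them) / (total number of subsets).
inclusion_probability = {
    person: Fraction(sum(person in s for s in subsets), len(subsets))
    for person in population
}

print(len(subsets), subset_probability)  # 10 subsets, each with probability 1/10
print(inclusion_probability["A"])        # 2/5, which equals k/N
```

Every individual has the same inclusion probability, k/N, and every subset of k individuals has the same probability of being the sample.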

Simple random sampling is a basic type of sampling, since it can be a component of other more complex sampling methods. The principle of simple random sampling is that every object has the same chance of being chosen. For example, N college students want to get a ticket for a basketball game, but there are only X tickets, which is not enough for everyone, so they decide on a fair way to see who gets to go. Everybody is given a number (0 to N-1), and random numbers are generated. The students matching the first X distinct numbers drawn would be the lucky ticket winners.
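The ticket lottery can be sketched in a few lines of Python. The function name `draw_ticket_winners`, the counts (50 students, 8 tickets), and the seed are assumptions made for illustration; `random.sample` draws without replacement, so no student can win twice.

```python
import random

def draw_ticket_winners(n_students, n_tickets, seed=None):
    """Return n_tickets distinct student numbers drawn from 0..n_students-1."""
    rng = random.Random(seed)  # a seed makes the draw repeatable for auditing
    return rng.sample(range(n_students), n_tickets)

# Hypothetical example: 50 students competing for 8 tickets.
winners = draw_ticket_winners(n_students=50, n_tickets=8, seed=2021)
print(sorted(winners))
```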

In small populations and often in large ones, such sampling is typically done “without replacement” (i.e., one deliberately avoids choosing any member of the population more than once). Although simple random sampling can be conducted with replacement instead, this is less common and would normally be described more fully as simple random sampling with replacement. Sampling done without replacement is no longer independent, but still satisfies exchangeability. Hence, many results still hold. Further, for a small sample from a large population, sampling without replacement is approximately the same as sampling with replacement, since the probability of choosing the same individual twice is low.
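To see why the two schemes nearly coincide for a small sample from a large population, one can compute the chance that sampling with replacement selects any individual more than once. This is a minimal sketch; the helper name `prob_repeat` and the population sizes are illustrative assumptions.

```python
def prob_repeat(population_size, sample_size):
    """Probability that sampling WITH replacement picks someone more than once."""
    p_all_distinct = 1.0
    for i in range(sample_size):
        # The (i+1)-th draw must avoid the i individuals already chosen.
        p_all_distinct *= (population_size - i) / population_size
    return 1.0 - p_all_distinct

small_population = prob_repeat(20, 10)         # repeats are very likely
large_population = prob_repeat(1_000_000, 10)  # repeats are very unlikely

print(f"N = 20:        {small_population:.4f}")
print(f"N = 1,000,000: {large_population:.6f}")
```

With ten draws from twenty individuals a repeat is almost certain, whereas with ten draws from a million the chance of a repeat is well under one in ten thousand.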

An unbiased random selection of individuals is important so that, in the long run, the sample represents the population. However, this does not guarantee that a particular sample is a perfect representation of the population. Simple random sampling merely allows one to draw externally valid conclusions about the entire population based on the sample.

Conceptually, simple random sampling is the simplest of the probability sampling techniques. It requires a complete sampling frame, which may not be available or feasible to construct for large populations. Even if a complete frame is available, more efficient approaches may be possible if other useful information is available about the units in the population.

Advantages are that it is free of classification error, and it requires minimum advance knowledge of the population other than the frame. Its simplicity also makes it relatively easy to interpret data collected via SRS. For these reasons, simple random sampling best suits situations where not much information is available about the population and data collection can be efficiently conducted on randomly distributed items, or where the cost of sampling is small enough to make efficiency less important than simplicity. If these conditions are not true, stratified sampling or cluster sampling may be a better choice.

II

An observational study is one in which no variables can be manipulated or controlled by the investigator.

Identify situations in which observational studies are necessary and the challenges that arise in their interpretation.

A common goal in statistical research is to investigate causality, which is the relationship between an event (the cause) and a second event (the effect), where the second event is understood as a consequence of the first. There are two major types of causal statistical studies: experimental studies and observational studies. An observational study draws inferences about the possible effect of a treatment on subjects, where the assignment of subjects into a treated group versus a control group is outside the control of the investigator. This is in contrast with experiments, such as randomized controlled trials, where each subject is randomly assigned to a treated group or a control group. In other words, observational studies have no independent variables — nothing is manipulated by the experimenter. Rather, observations have the equivalent of two dependent variables.

In an observational study, the assignment of treatments may be beyond the control of the investigator for a variety of reasons:

Observational studies can never identify causal relationships because even though two variables are related both might be caused by a third, unseen, variable. Since the underlying laws of nature are assumed to be causal laws, observational findings are generally regarded as less compelling than experimental findings.

Observational studies can, however:

A major challenge in conducting observational studies is to draw inferences that are acceptably free from influences by overt biases, as well as to assess the influence of potential hidden biases.

Observational Studies

Nature Observation and Study Hall in The Natural and Cultural Gardens, The Expo Memorial Park, Suita City, Osaka, Japan. Observational studies are a type of study in which the variables are outside the control of the investigator.

The Clofibrate Trial was a placebo-controlled study to determine the safety and effectiveness of drugs treating coronary heart disease in men.

Outline how the use of placebos in controlled experiments leads to more reliable results.

Clofibrate (tradename Atromid-S) is an organic compound that is marketed as a fibrate. It is a lipid-lowering agent used for controlling high cholesterol and triacylglyceride levels in the blood. Clofibrate was one of four lipid-modifying drugs tested in a study known as the Coronary Drug Project. Also known as the World Health Organization Cooperative Trial on Primary Prevention of Ischaemic Heart Disease, the study was a randomized, multi-center, double-blind, placebo-controlled trial that was intended to study the safety and effectiveness of drugs for long-term treatment of coronary heart disease in men.

Placebo-controlled studies are a way of testing a medical therapy in which, in addition to a group of subjects that receives the treatment to be evaluated, a separate control group receives a sham “placebo” treatment which is specifically designed to have no real effect. Placebos are most commonly used in blinded trials, where subjects do not know whether they are receiving real or placebo treatment.

The purpose of the placebo group is to account for the placebo effect — that is, effects from treatment that do not depend on the treatment itself. Such factors include knowing one is receiving a treatment, attention from health care professionals, and the expectations of a treatment’s effectiveness by those running the research study. Without a placebo group to compare against, it is not possible to know whether the treatment itself had any effect.

Appropriate use of a placebo in a clinical trial often requires, or at least benefits from, a double-blind study design, which means that neither the experimenters nor the subjects know which subjects are in the “test group” and which are in the “control group.” This creates a problem in creating placebos that can be mistaken for active treatments. Therefore, it can be necessary to use a psychoactive placebo, a drug that produces physiological effects that encourage the belief in the control groups that they have received an active drug.

Patients frequently show improvement even when given a sham or “fake” treatment. Such intentionally inert placebo treatments can take many forms, such as a pill containing only sugar, a surgery where nothing is actually done, or a medical device (such as ultrasound) that is not actually turned on. Also, due to the body’s natural healing ability and statistical effects such as regression to the mean, many patients will get better even when given no treatment at all. Thus, the relevant question when assessing a treatment is not “does the treatment work?” but “does the treatment work better than a placebo treatment, or no treatment at all?”

Therefore, the use of placebos is a standard control component of most clinical trials which attempt to make some sort of quantitative assessment of the efficacy of medicinal drugs or treatments.

Those in the placebo group who adhered to the placebo treatment (took the placebo regularly as instructed) showed nearly half the mortality rate of those who were not adherent. A similar study of women found survival was nearly 2.5 times greater for those who adhered to their placebo. This apparent placebo effect may have occurred because:

The Coronary Drug Project found excess mortality among subjects using clofibrate to lower serum cholesterol, despite successful cholesterol lowering. Compared with the non-treated high-cholesterol group, the clofibrate-treated group had 47% more deaths during treatment and 5% more deaths after treatment. These deaths were due to a wide variety of causes other than heart disease, and remain “unexplained”.

Clofibrate was discontinued in 2002 due to adverse effects.

Placebo-Controlled Observational Studies

Prescription placebos used in research and practice.

A confounding variable is an extraneous variable in a statistical model that correlates with both the dependent variable and the independent variable.

Break down why confounding variables may lead to bias and spurious relationships and what can be done to avoid these phenomena.

In risk assessments, factors such as age, gender, and educational levels often have an impact on health status and so should be controlled. Beyond these factors, researchers may not consider or have access to data on other causal factors. An example is the study of the effects of smoking tobacco on human health. Smoking, drinking alcohol, and diet are lifestyle activities that are related. A risk assessment that looks at the effects of smoking but does not control for alcohol consumption or diet may overestimate the risk of smoking. Smoking and confounding are reviewed in occupational risk assessments such as the safety of coal mining. When there is not a large sample population of non-smokers or non-drinkers in a particular occupation, the risk assessment may be biased towards finding a negative effect on health.

A confounding variable is an extraneous variable in a statistical model that correlates (positively or negatively) with both the dependent variable and the independent variable. A perceived relationship between an independent variable and a dependent variable that has been misestimated due to the failure to account for a confounding factor is termed a spurious relationship, and the presence of misestimation for this reason is termed omitted-variable bias.

As an example, suppose that there is a statistical relationship between ice cream consumption and number of drowning deaths for a given period. These two variables have a positive correlation with each other. An individual might attempt to explain this correlation by inferring a causal relationship between the two variables (either that ice cream causes drowning, or that drowning causes ice cream consumption). However, a more likely explanation is that the relationship between ice cream consumption and drowning is spurious and that a third, confounding, variable (the season) influences both variables: during the summer, warmer temperatures lead to increased ice cream consumption as well as more people swimming and, thus, more drowning deaths.
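A small simulation can make the ice cream example concrete. Everything in the sketch below is invented for illustration (the temperature range, the effect sizes, the noise levels, and the "drowning index" itself); it is not real data. Temperature drives both variables, and neither variable affects the other, yet they come out strongly correlated until temperature is held fixed through a partial correlation.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(0)  # fixed seed so the illustration is repeatable

# Hypothetical daily data: temperature is the confounder.
temperature = [rng.uniform(0, 35) for _ in range(2000)]
ice_cream = [2.0 * t + rng.gauss(0, 5) for t in temperature]  # sales index
drownings = [0.3 * t + rng.gauss(0, 2) for t in temperature]  # drowning index

r_xy = pearson(ice_cream, drownings)
r_xz = pearson(ice_cream, temperature)
r_yz = pearson(drownings, temperature)

# Partial correlation of ice cream and drownings, controlling for temperature.
partial = (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz**2) * (1 - r_yz**2))

print(f"raw correlation:     {r_xy:.2f}")
print(f"partial correlation: {partial:.2f}")
```

The raw correlation is large while the partial correlation is close to zero, which is the pattern one would expect from a spurious relationship produced by a shared cause.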

Confounding by indication has been described as the most important limitation of observational studies. Confounding by indication occurs when prognostic factors cause bias, such as biased estimates of treatment effects in medical trials. Controlling for known prognostic factors may reduce this problem, but it is always possible that a forgotten or unknown factor was not included or that factors interact complexly. Randomized trials tend to reduce the effects of confounding by indication due to random assignment.

Confounding variables may also be categorised according to their source:

A reduction in the potential for the occurrence and effect of confounding factors can be obtained by increasing the types and numbers of comparisons performed in an analysis. If a relationship holds among different subgroups of analyzed units, confounding may be less likely. That said, if measures or manipulations of core constructs are confounded (i.e., operational or procedural confounds exist), subgroup analysis may not reveal problems in the analysis.

Peer review is a process that can assist in reducing instances of confounding, either before study implementation or after analysis has occurred. Similarly, study replication can test for the robustness of findings from one study under alternative testing conditions or alternative analyses (e.g., controlling for potential confounds not identified in the initial study). Also, confounding effects may be less likely to occur and act similarly at multiple times and locations.

Moreover, depending on the type of study design in place, there are various ways to modify that design to actively exclude or control confounding variables:

The Berkeley study is one of the best-known real-life examples of a study affected by a confounding variable.

Women have traditionally had limited access to higher education. Moreover, when women began to be admitted to higher education, they were encouraged to major in less-intellectual subjects. For example, the study of English literature in American and British colleges and universities was instituted as a field considered suitable to women’s “lesser intellects”.

However, since 1991 the proportion of women enrolled in college in the U.S. has exceeded the enrollment rate for men, and that gap has widened over time. As of 2007, women made up the majority — 54 percent — of the 10.8 million college students enrolled in the U.S.

This has not negated the fact that gender bias exists in higher education. Women tend to score lower on graduate admissions exams, such as the Graduate Record Exam (GRE) and the Graduate Management Admissions Test (GMAT). Representatives of the companies that publish these tests have hypothesized that the greater number of female applicants taking these tests pulls down women’s average scores. However, statistical research proves this theory wrong. Controlling for the number of people taking the test does not account for the scoring gap.

On February 7, 1975, a study was published in the journal Science by P.J. Bickel, E.A. Hammel, and J.W. O’Connell entitled “Sex Bias in Graduate Admissions: Data from Berkeley.” This study was conducted in the aftermath of a lawsuit filed against the University, citing admission figures for the fall of 1973, which showed that men applying were more likely than women to be admitted, and the difference was so large that it was unlikely to be due to chance.

Examination of the aggregate data on admissions showed a blatant, if easily misunderstood, pattern of gender discrimination against female applicants.

| | All: Applicants | All: Admitted | Men: Applicants | Men: Admitted | Women: Applicants | Women: Admitted |
|---|---|---|---|---|---|---|
| Total | 12,763 | 41% | 8,442 | 44% | 4,321 | 35% |

When examining the individual departments, it appeared that no department was significantly biased against women. In fact, most departments had a small but statistically significant bias in favor of women. The data from the six largest departments are listed below.

| Department | Men (# Applicants) | Men (% Admitted) | Women (# Applicants) | Women (% Admitted) |
|---|---|---|---|---|
| A | 825 | 62 | 108 | 82 |
| B | 560 | 63 | 25 | 68 |
| C | 325 | 37 | 593 | 34 |
| D | 417 | 33 | 375 | 35 |
| E | 191 | 28 | 393 | 24 |
| F | 272 | 6 | 341 | 7 |

The research paper by Bickel et al. concluded that women tended to apply to competitive departments with low rates of admission even among qualified applicants (such as in the English Department), whereas men tended to apply to less-competitive departments with high rates of admission among the qualified applicants (such as in engineering and chemistry). The study also concluded that the graduate departments that were easier to enter at the University, at the time, tended to be those that required more undergraduate preparation in mathematics. Therefore, the admission bias seemed to stem from courses previously taken.

The above study is one of the best-known real-life examples of a study affected by a confounding variable. In this particular case, we can see an occurrence of Simpson’s Paradox. Simpson’s Paradox is a paradox in which a trend that appears in different groups of data disappears when these groups are combined, and the reverse trend appears for the aggregate data. This result is often encountered in social-science and medical-science statistics, and is particularly confounding when frequency data are unduly given causal interpretations.
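The reversal can be reproduced from the six-department table above. The sketch below uses only the figures printed in that table; because the admission percentages there are rounded, the reconstructed counts of admitted applicants are approximate, and the totals cover these six departments only, not all 12,763 applicants.

```python
# (department, men applicants, men % admitted, women applicants, women % admitted)
departments = [
    ("A", 825, 62, 108, 82),
    ("B", 560, 63, 25, 68),
    ("C", 325, 37, 593, 34),
    ("D", 417, 33, 375, 35),
    ("E", 191, 28, 393, 24),
    ("F", 272, 6, 341, 7),
]

# Department by department: where is the women's admission rate higher?
women_higher = [d for d, _, men_pct, _, women_pct in departments if women_pct > men_pct]

# Aggregated: rebuild approximate admitted counts, then pool them.
men_applicants = sum(row[1] for row in departments)
women_applicants = sum(row[3] for row in departments)
men_admitted = sum(row[1] * row[2] / 100 for row in departments)
women_admitted = sum(row[3] * row[4] / 100 for row in departments)

men_rate = men_admitted / men_applicants
women_rate = women_admitted / women_applicants

print("Departments where women's rate is higher:", women_higher)
print(f"Pooled admission rate, men:   {men_rate:.0%}")
print(f"Pooled admission rate, women: {women_rate:.0%}")
```

Women have the higher admission rate in four of the six departments, yet the pooled rate is clearly higher for men, because most women applied to departments C through F, where admission rates are low for everyone.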

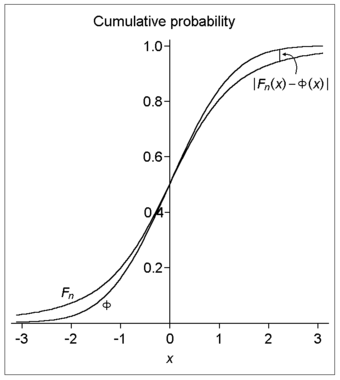

Simpson’s Paradox: For a full explanation of the figure, visit: Simpson’s Paradox on Wikipedia

The practical significance of Simpson’s paradox surfaces in decision making situations where it poses the following dilemma: Which data should we consult in choosing an action, the aggregated or the partitioned? The answer seems to be that one should sometimes follow the partitioned and sometimes the aggregated data, depending on the story behind the data; with each story dictating its own choice.

As to why and how a story, not data, should dictate choices, the answer is that it is the story which encodes the causal relationships among the variables. Once we extract these relationships we can test algorithmically whether a given partition, representing confounding variables, gives the correct answer.

Confounding Variables in Practice

One of the best real-life examples of the presence of confounding variables occurred in a study regarding sex bias in graduate admissions at the University of California, Berkeley.

The Salk polio vaccine field trial incorporated a double blind placebo control methodology to determine the effectiveness of the vaccine.

The Salk polio vaccine field trials constitute one of the most famous and one of the largest statistical studies ever conducted. The field trials are of particular value to students of statistics because two different experimental designs were used.

The Salk vaccine, or inactivated poliovirus vaccine (IPV), is based on three wild, virulent reference strains grown in a type of monkey kidney tissue culture (Vero cell line), which are then inactivated with formalin. The injected Salk vaccine confers IgG-mediated immunity in the bloodstream, which prevents polio infection from progressing to viremia and protects the motor neurons, thus eliminating the risk of bulbar polio and post-polio syndrome.

Statistical tests of new medical treatments almost always have the same basic format. The responses of a treatment group of subjects who are given the treatment are compared to the responses of a control group of subjects who are not given the treatment. The treatment groups and control groups should be as similar as possible.

Beginning February 23, 1954, the vaccine was tested at Arsenal Elementary School and the Watson Home for Children in Pittsburgh, Pennsylvania. Salk’s vaccine was then used in a test called the Francis Field Trial, led by Thomas Francis, which was the largest medical experiment in history. The test began with some 4,000 children at Franklin Sherman Elementary School in McLean, Virginia, and would eventually involve 1.8 million children in 44 states from Maine to California. By the conclusion of the study, roughly 440,000 children had received one or more injections of the vaccine, about 210,000 children had received a placebo consisting of harmless culture media, and 1.2 million children had received no vaccination and served as a control group, who would then be observed to see if any contracted polio.

The results of the field trial were announced April 12, 1955 (the 10th anniversary of the death of President Franklin D. Roosevelt, whose paralysis was generally believed to have been caused by polio). The Salk vaccine had been 60–70% effective against PV1 (poliovirus type 1), over 90% effective against PV2 and PV3, and 94% effective against the development of bulbar polio. Soon after Salk’s vaccine was licensed in 1955, children’s vaccination campaigns were launched. In the U.S., following a mass immunization campaign promoted by the March of Dimes, the annual number of polio cases fell from 35,000 in 1953 to 5,600 by 1957. By 1961 only 161 cases were recorded in the United States.

The original design of the experiment called for second graders (with parental consent) to form the treatment group and first and third graders to form the control group. This design was known as the observed control experiment.

Two serious issues arose in this design: selection bias and diagnostic bias. Because only second graders with permission from their parents were administered the treatment, this treatment group became self-selecting.

Thus, a randomized control design was implemented to overcome these apparent deficiencies. The key distinguishing feature of the randomized control design is that study subjects, after assessment of eligibility and recruitment, but before the intervention to be studied begins, are randomly allocated to receive one or the other of the alternative treatments under study. Therefore, randomized control tends to negate all effects (such as confounding variables) except for the treatment effect.
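The contrast between a self-selecting treatment group and a randomly allocated one can be illustrated with a toy simulation. The background trait, the consent probabilities, and the population size below are all hypothetical and are not taken from the Salk trial; the point is only that random allocation tends to balance a background trait across groups, whereas consent-based selection does not.

```python
import random

rng = random.Random(1954)  # fixed seed so the illustration is repeatable

# Hypothetical standardized background trait for 10,000 children
# (for example, a household-income score). Invented data.
trait = [rng.gauss(0, 1) for _ in range(10_000)]

def mean(values):
    return sum(values) / len(values)

def group_gap(in_treatment):
    """Difference in mean trait between treatment and control groups."""
    treated = [x for x, t in zip(trait, in_treatment) if t]
    control = [x for x, t in zip(trait, in_treatment) if not t]
    return mean(treated) - mean(control)

# Self-selection: assume consent is more likely when the trait is above average.
consented = [rng.random() < (0.8 if x > 0 else 0.3) for x in trait]

# Randomized control: a fair coin decides each child's group.
randomized = [rng.random() < 0.5 for _ in trait]

self_selected_gap = group_gap(consented)
randomized_gap = group_gap(randomized)

print(f"gap under self-selection: {self_selected_gap:.2f}")
print(f"gap under randomization:  {randomized_gap:.2f}")
```

Under self-selection the two groups differ substantially on the background trait before any treatment is given, so a later difference in outcomes could not be attributed to the treatment alone. Under randomization the gap is close to zero.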

This design also had the characteristic of being double-blind. Double-blind describes an especially stringent way of conducting an experiment on human test subjects which attempts to eliminate subjective, unrecognized biases carried by an experiment’s subjects and conductors. In a double-blind experiment, neither the participants nor the researchers know which participants belong to the control group, as opposed to the test group. Only after all data have been recorded (and in some cases, analyzed) do the researchers learn which participants were which.

This combination of randomized control and double-blind experimental factors has become the gold standard for a clinical trial.

Numerous studies have been conducted to examine the value of the portacaval shunt procedure, many using randomized controls.

A portacaval shunt is a treatment for high blood pressure in the liver. A connection is made between the portal vein, which supplies 75% of the liver’s blood, and the inferior vena cava, the vein that drains blood from the lower two-thirds of the body. The most common causes of liver disease resulting in portal hypertension are cirrhosis, caused by alcohol abuse, and viral hepatitis (hepatitis B and C). Less common causes include diseases such as hemochromatosis, primary biliary cirrhosis (PBC), and portal vein thrombosis. The procedure is long and hazardous.

Numerous studies have been conducted to examine the value of and potential concerns with the surgery. Of these studies, 63% were conducted without controls, 29% were conducted with non-randomized controls, and 8% were conducted with randomized controls.

Random assignment, or random placement, is an experimental technique for assigning subjects to different treatments (or no treatment). The thinking behind random assignment is that by randomizing treatment assignments, the group attributes for the different treatments will be roughly equivalent; therefore, any effect observed between treatment groups can be linked to the treatment effect and cannot be considered a characteristic of the individuals in the group.

In experimental design, random assignment of participants to treatment and control groups helps to ensure that any differences between and within the groups are not systematic at the outset of the experiment. Random assignment does not guarantee that the groups are “matched” or equivalent, only that any differences are due to chance.
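One common way to carry out random assignment, shown here as a minimal sketch and not as a prescribed procedure, is to shuffle the list of subjects and split it in two. The function name `randomly_assign`, the subject labels, and the seed are hypothetical.

```python
import random

def randomly_assign(subjects, seed=None):
    """Shuffle the subjects and split them into treatment and control groups."""
    rng = random.Random(seed)
    shuffled = list(subjects)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

# Hypothetical roster of 20 subjects.
subjects = [f"subject_{i:02d}" for i in range(20)]
treatment, control = randomly_assign(subjects, seed=7)

print("treatment:", sorted(treatment))
print("control:  ", sorted(control))
```

Because chance alone decides group membership, no characteristic of a subject, known or unknown, can systematically influence which group they join.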

The steps to random assignment include:

Because most basic statistical tests require the hypothesis of an independent randomly sampled population, random assignment is the desired assignment method. It provides control for all attributes of the members of the samples—in contrast to matching on only one or more variables—and provides the mathematical basis for estimating the likelihood of group equivalence for characteristics one is interested in. This applies both for pre-treatment checks on equivalence and the evaluation of post treatment results using inferential statistics. More advanced statistical modeling can be used to adapt the inference to the sampling method.

A scientific control is an observation designed to minimize the effects of variables other than the single independent variable.

Classify scientific controls and identify how they are used in experiments.

A scientific control is an observation designed to minimize the effects of variables other than the single independent variable. This increases the reliability of the results, often through a comparison between control measurements and the other measurements.

For example, during drug testing, scientists will try to control two groups to keep them as identical as possible, then allow one group to try the drug. Another example might be testing plant fertilizer by giving it to only half the plants in a garden: the plants that receive no fertilizer are the control group, because they establish the baseline level of growth that the fertilizer-treated plants will be compared against. Without a control group, the experiment cannot determine whether the fertilizer-treated plants grow more than they would have if untreated.

Ideally, all variables in an experiment will be controlled (accounted for by the control measurements) and none will be uncontrolled. In such an experiment, if all the controls work as expected, it is possible to conclude that the experiment is working as intended and that the results of the experiment are due to the effect of the variable being tested. That is, scientific controls allow an investigator to make a claim like “Two situations were identical until factor X occurred. Since factor X is the only difference between the two situations, the new outcome was caused by factor X.”

Controlled experiments can be performed when it is difficult to exactly control all the conditions in an experiment. In this case, the experiment begins by creating two or more sample groups that are probabilistically equivalent, which means that measurements of traits should be similar among the groups and that the groups should respond in the same manner if given the same treatment. This equivalency is determined by statistical methods that take into account the amount of variation between individuals and the number of individuals in each group. In fields such as microbiology and chemistry, where there is very little variation between individuals and the group size is easily in the millions, these statistical methods are often bypassed and simply splitting a solution into equal parts is assumed to produce identical sample groups.

The simplest types of control are negative and positive controls. These two controls, when both are successful, are usually sufficient to eliminate most potential confounding variables. This means that the experiment produces a negative result when a negative result is expected and a positive result when a positive result is expected.

Negative controls are groups where no phenomenon is expected. They ensure that there is no effect when there should be no effect. To continue with the example of drug testing, a negative control is a group that has not been administered the drug. We would say that the control group should show a negative or null effect.

If the treatment group and the negative control both produce a negative result, it can be inferred that the treatment had no effect. If the treatment group and the negative control both produce a positive result, it can be inferred that a confounding variable acted on the experiment, and the positive results are likely not due to the treatment.
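The reasoning in this paragraph can be written as a small decision function. This is only a sketch: the function name and the wording of the returned messages are hypothetical, and the two branches not covered by the paragraph above (a positive treatment result with a negative control, and a negative treatment result with a positive control) are filled in as labeled assumptions.

```python
def interpret_negative_control(treatment_positive, control_positive):
    """Interpret a treatment result alongside its negative control."""
    if treatment_positive and control_positive:
        # From the text: both positive suggests a confounding variable.
        return "confounding suspected: positive result likely not due to treatment"
    if not treatment_positive and not control_positive:
        # From the text: both negative suggests the treatment had no effect.
        return "treatment had no effect"
    if treatment_positive and not control_positive:
        # Assumption: the usual reading when the control behaves as expected.
        return "result consistent with a treatment effect"
    # Assumption: a positive control with a negative treatment is treated as invalid.
    return "control did not behave as expected: experiment should be reviewed"

print(interpret_negative_control(True, True))
print(interpret_negative_control(False, False))
```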

Positive controls are groups where a phenomenon is expected. That is, they ensure that there is an effect when there should be an effect. This is accomplished by using an experimental treatment that is already known to produce that effect and then comparing this to the treatment that is being investigated in the experiment.

Positive controls are often used to assess test validity. For example, to assess a new test’s ability to detect a disease, we can compare it against a different test that is already known to work. The well-established test is the positive control, since we already know that the answer to the question (whether the test works) is yes.

For difficult or complicated experiments, the result from the positive control can also help in comparison to previous experimental results. For example, if the well-established disease test was determined to have the same effectiveness as found by previous experimenters, this indicates that the experiment is being performed in the same way that the previous experimenters did.

When possible, multiple positive controls may be used. For example, if there is more than one disease test that is known to be effective, more than one might be tested. Multiple positive controls also allow finer comparisons of the results (calibration or standardization) if the expected results from the positive controls have different sizes.

Controlled Experiments

An all-female crew of scientific experimenters began a five-day exercise on December 16, 1974. They conducted 11 selected experiments in materials science to determine their practical application for Spacelab missions and to identify integration and operational problems that might occur on actual missions. Air circulation, temperature, humidity and other factors were carefully controlled.

III

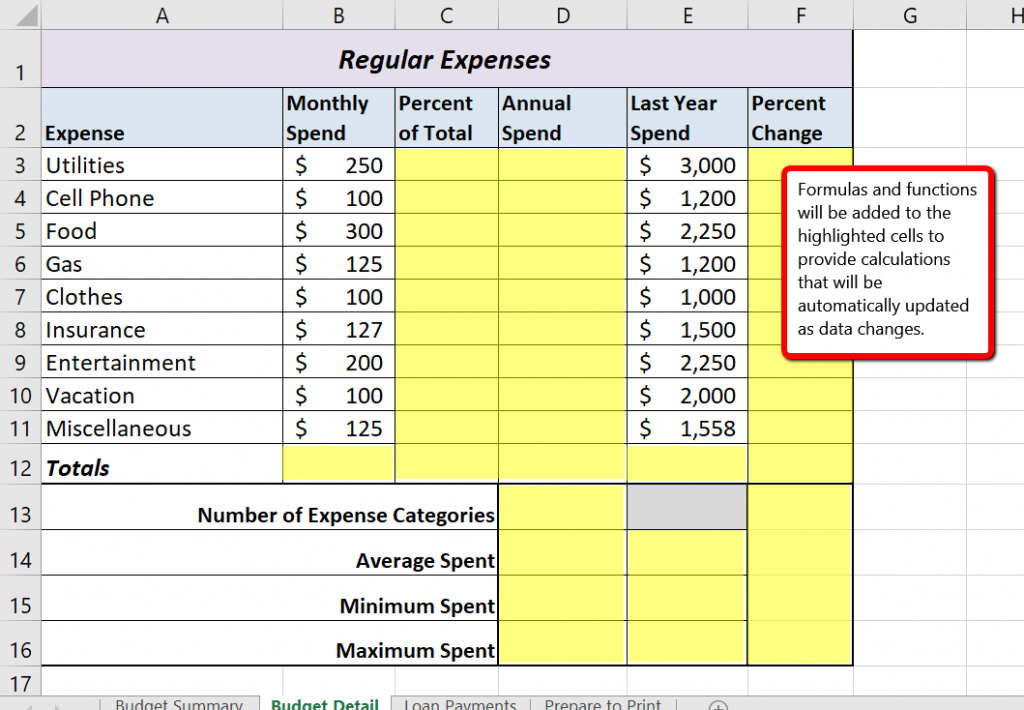

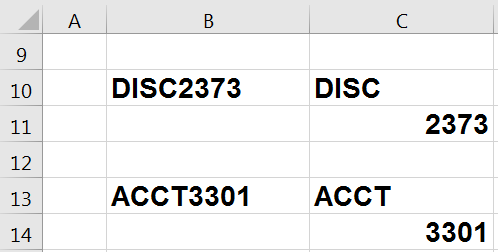

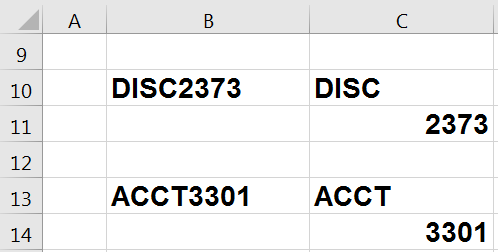

Microsoft® Excel® is a tool that can be used in virtually all careers and is valuable in both professional and personal settings. Whether you need to keep track of medications in inventory for a hospital or create a financial plan for your retirement, Excel enables you to do these activities efficiently and accurately. The following trainings and Excel Challenge assignment introduce the fundamental skills necessary to get you started in using Excel. You will find that just a few skills can make you very productive in a short period of time.

Adapted from How to Use Microsoft Excel: The Careers in Practice Series, adapted by The Saylor Foundation without attribution as requested by the work’s original creator or licensee, and licensed under CC BY-NC-SA 3.0.

Microsoft® Office contains a variety of tools that help people accomplish many personal and professional objectives. Microsoft Excel is perhaps the most versatile and widely used of all the Office applications. No matter which career path you choose, you will likely need to use Excel to accomplish your professional objectives, some of which may occur daily. This chapter provides an overview of the Excel application along with an orientation for accessing the commands and features of an Excel workbook.

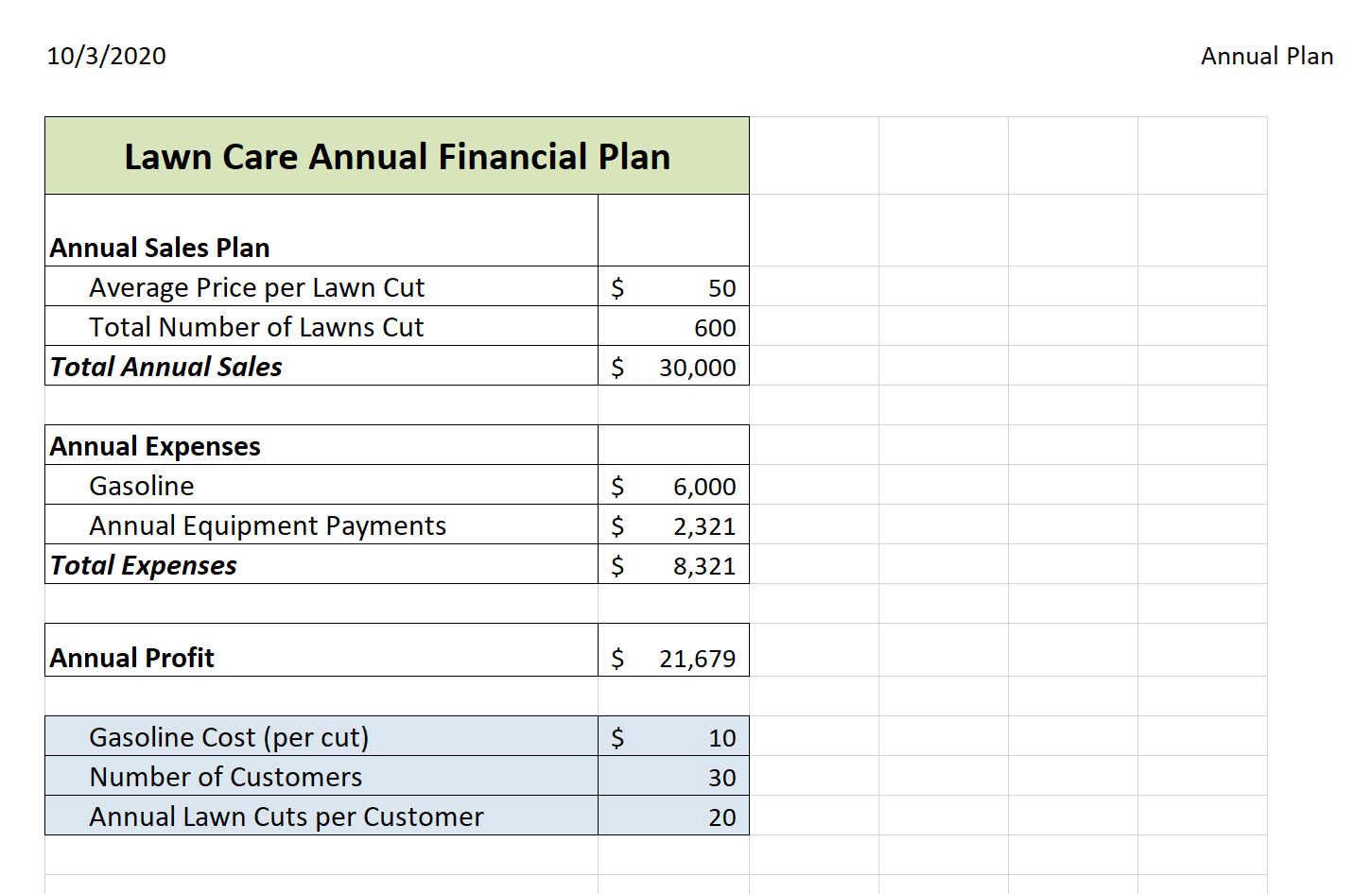

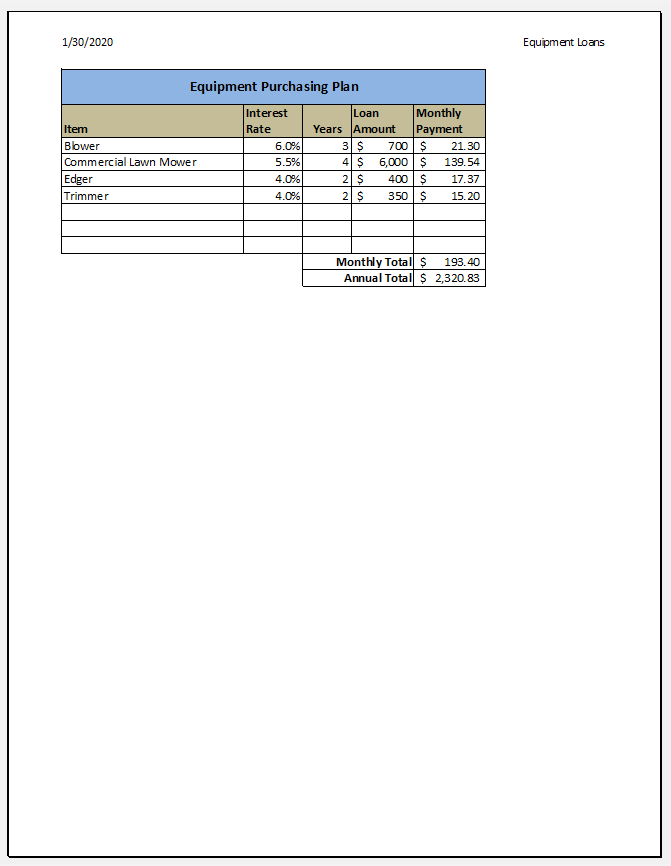

Taking a very simple view, Excel is a tool that allows you to enter quantitative data into an electronic spreadsheet to apply one or many mathematical computations. These computations ultimately convert that quantitative data into information. The information produced in Excel can be used to make decisions in both professional and personal contexts. For example, employees can use Excel to determine how much inventory to buy for a clothing retailer, how much medication to administer to a patient, or how much money to spend to stay within a budget. With respect to personal decisions, you can use Excel to determine how much money you can spend on a house, how much you can spend on car lease payments, or how much you need to save to reach your retirement goals. We will demonstrate how you can use Excel to make these decisions and many more throughout this text.

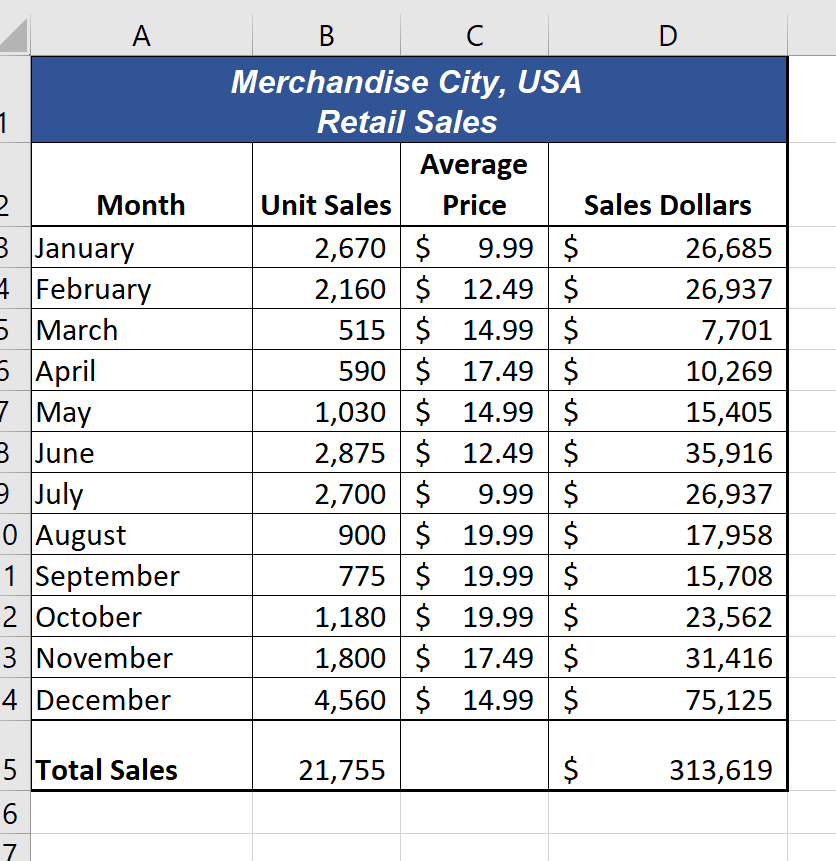

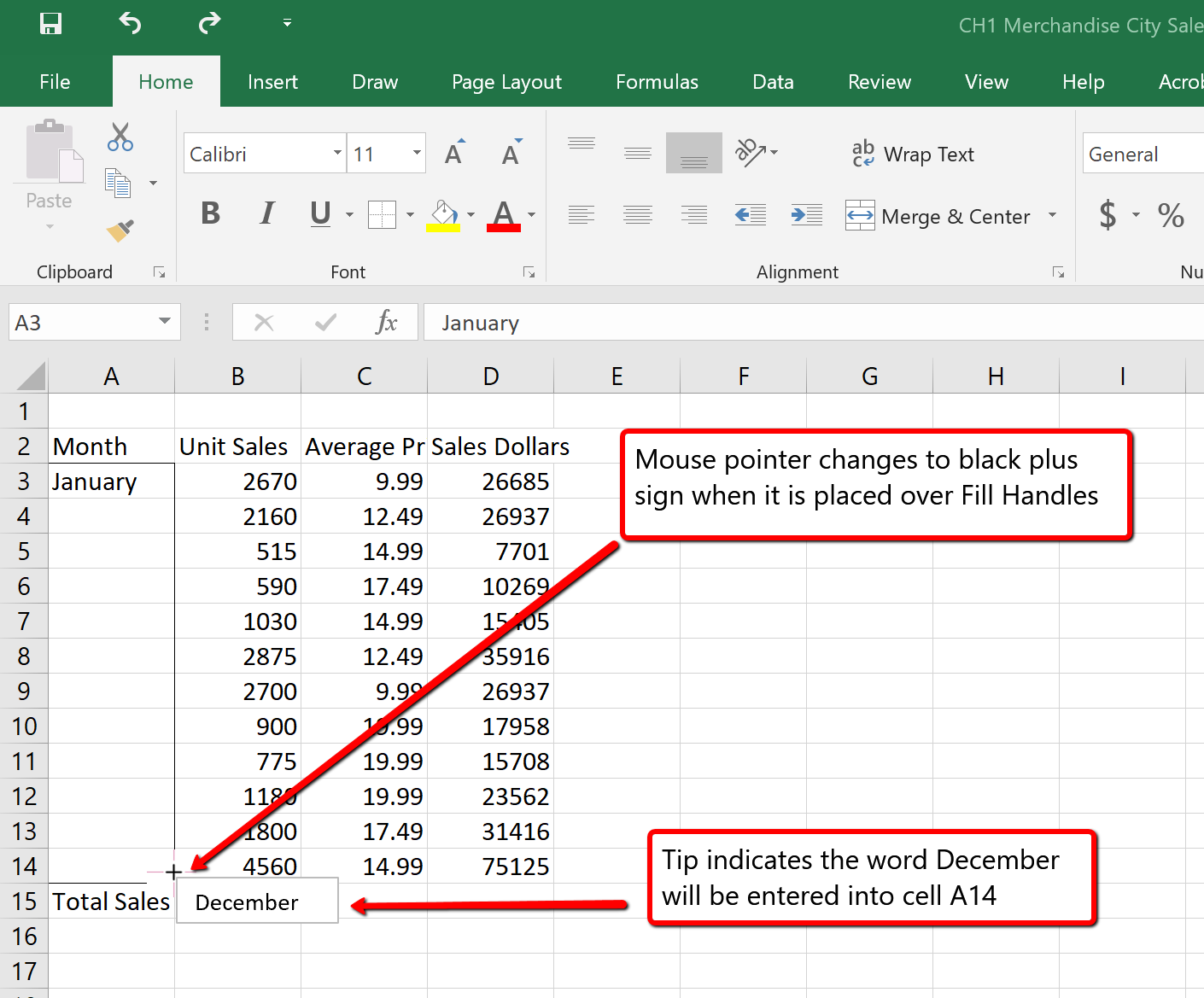

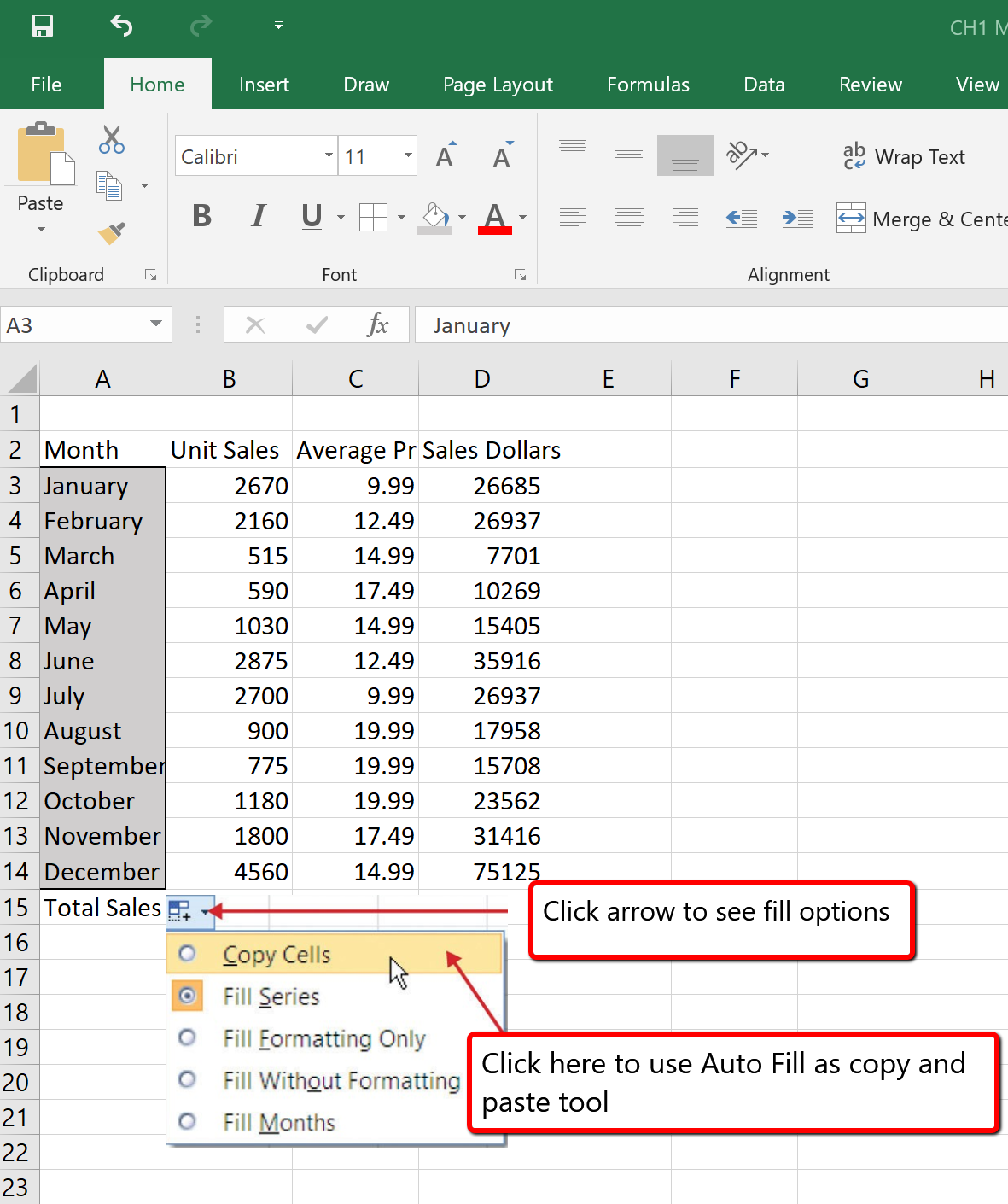

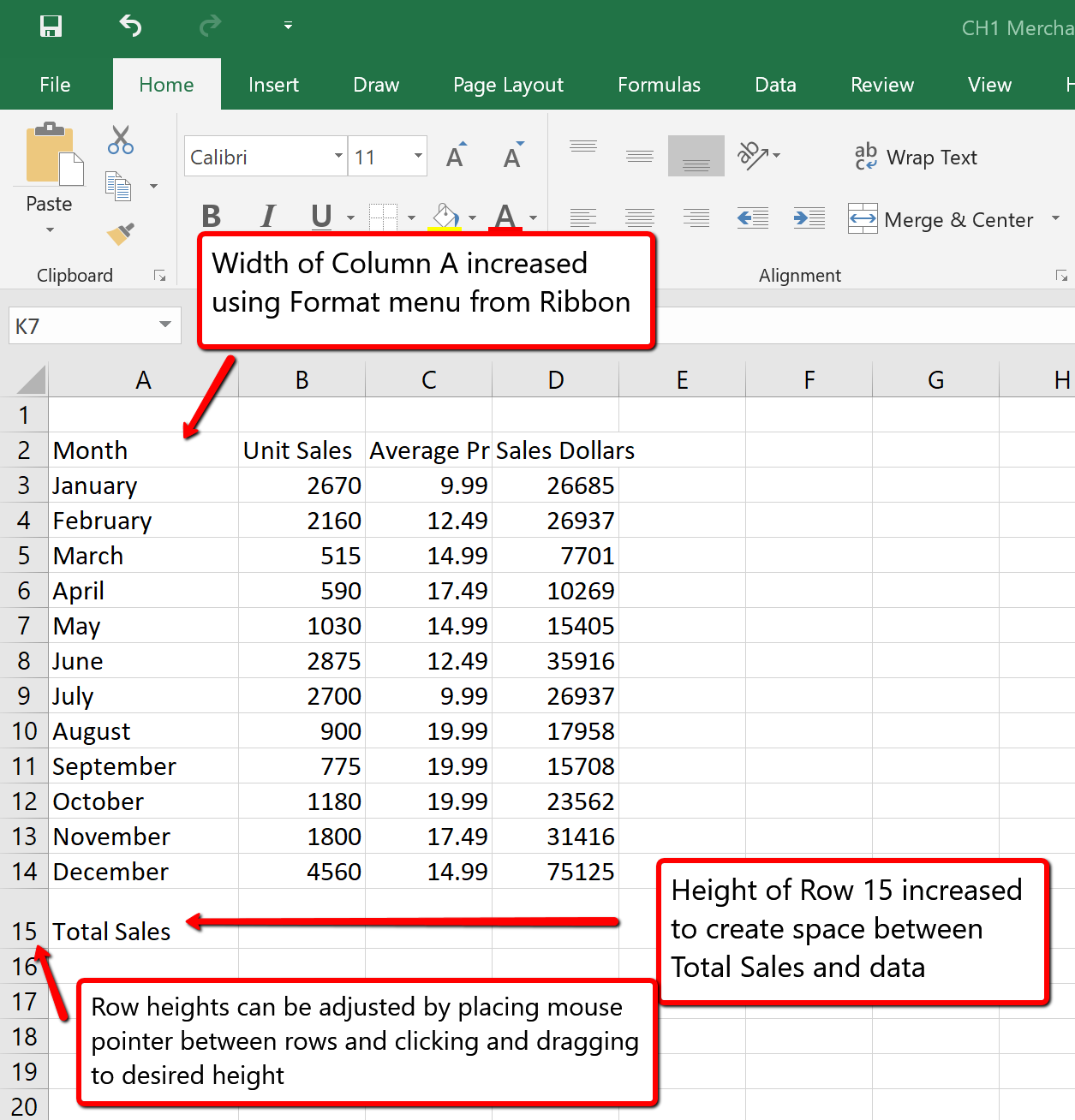

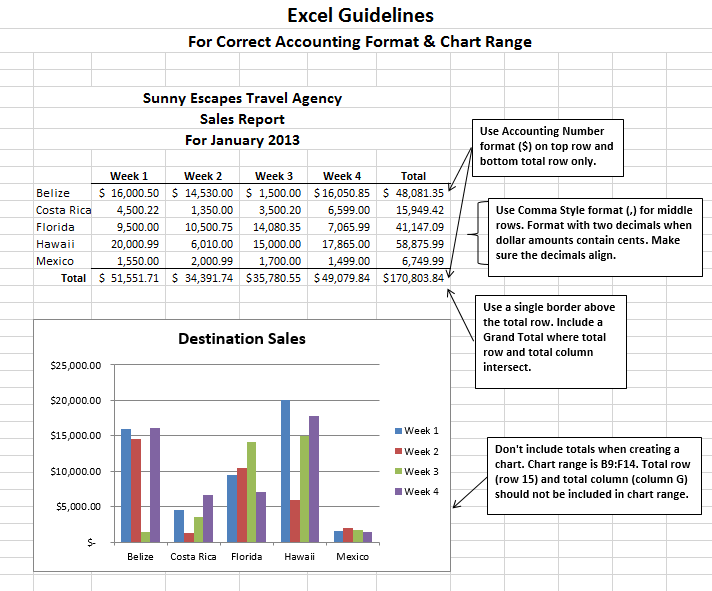

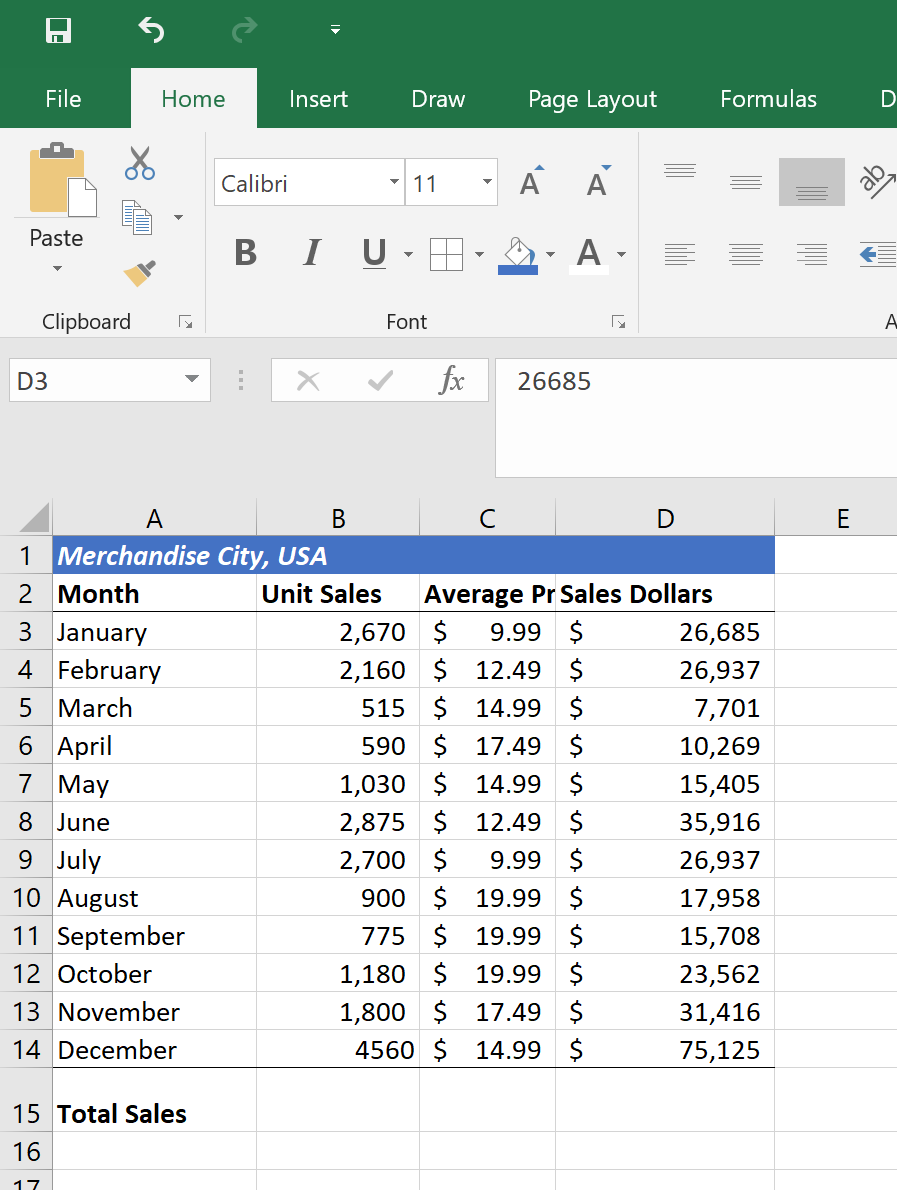

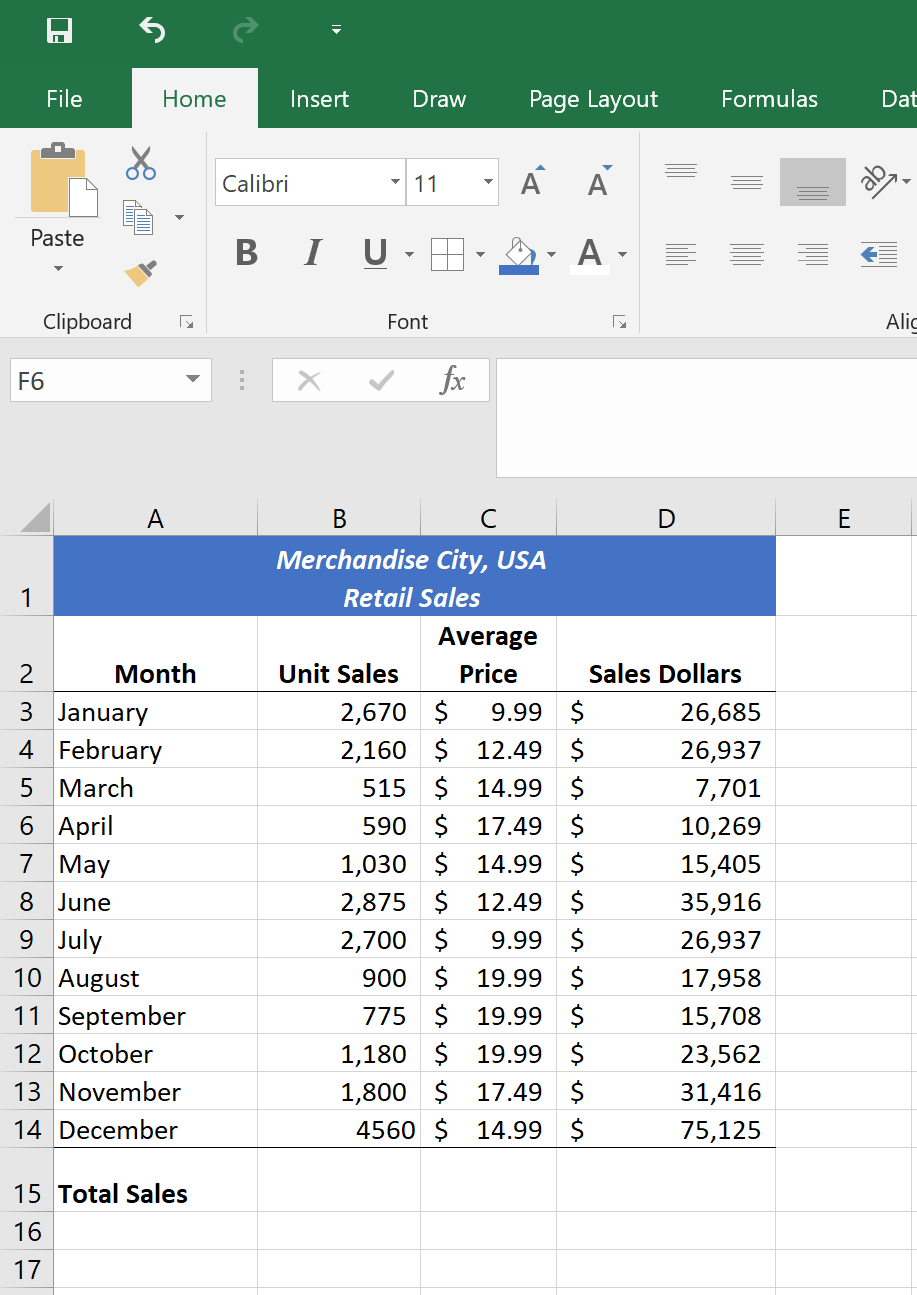

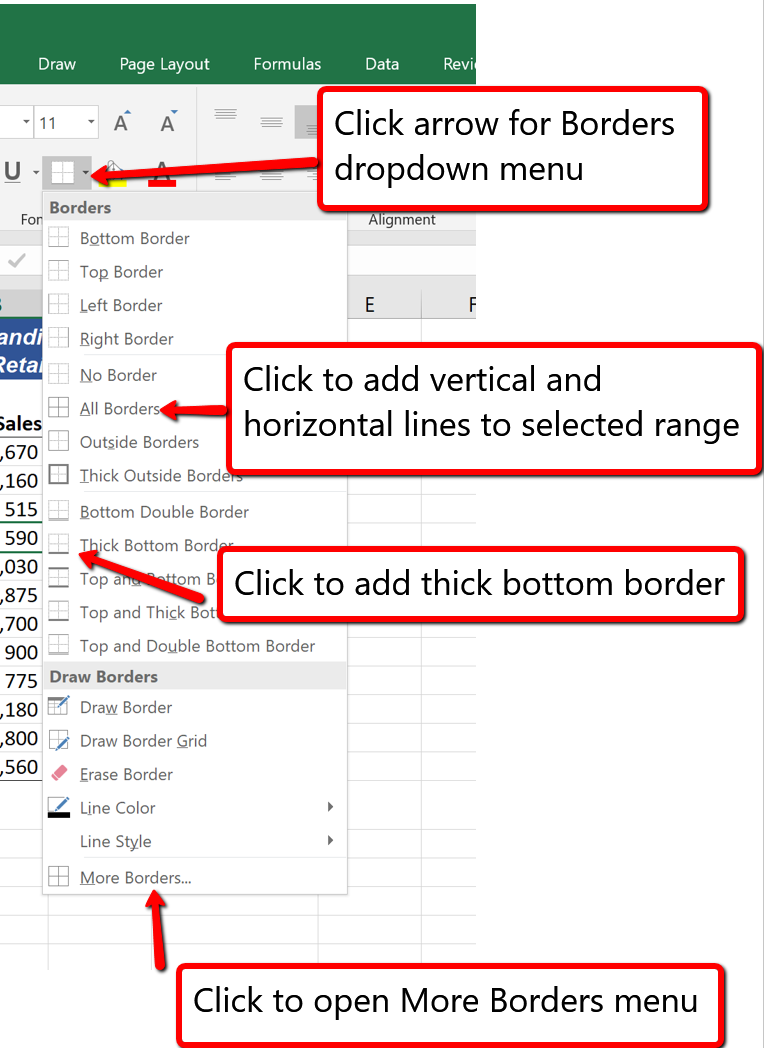

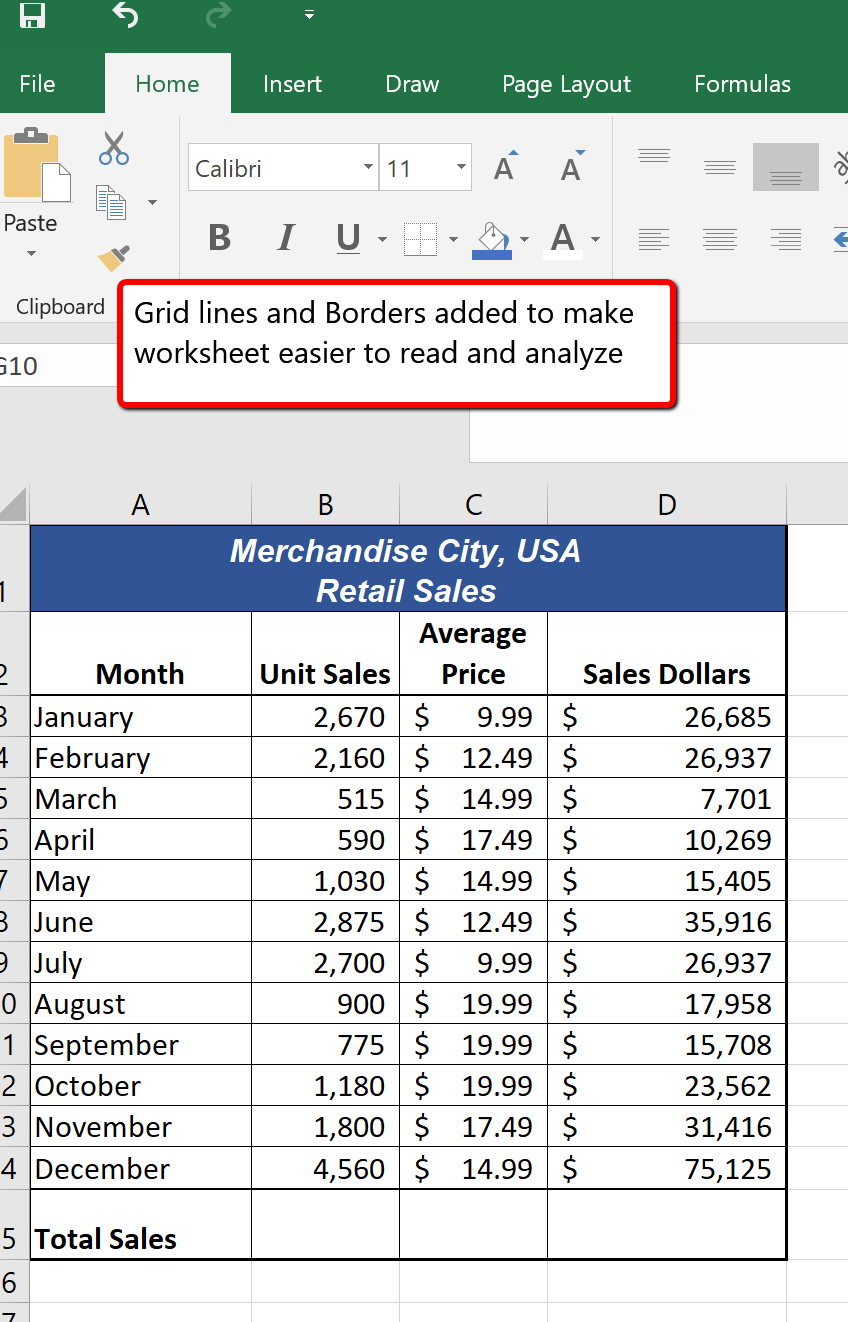

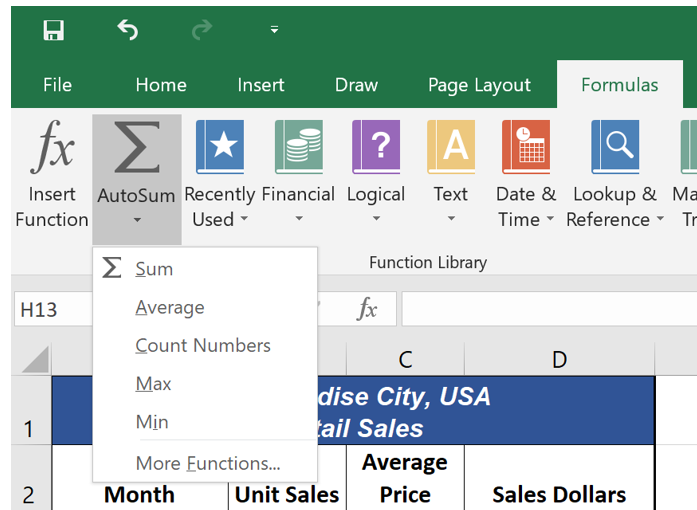

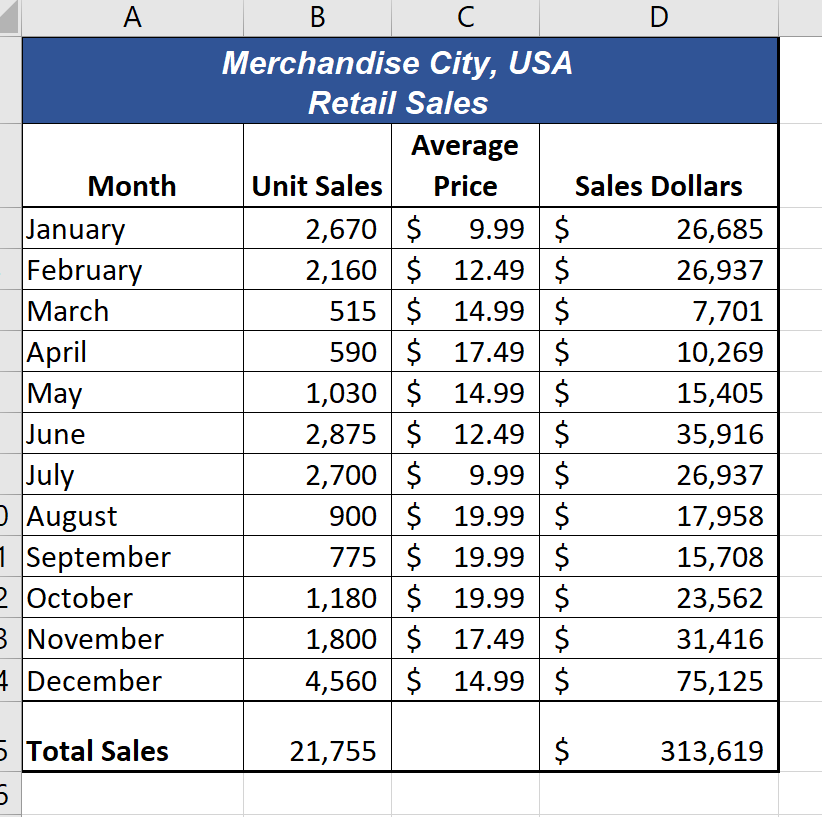

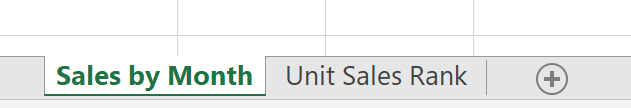

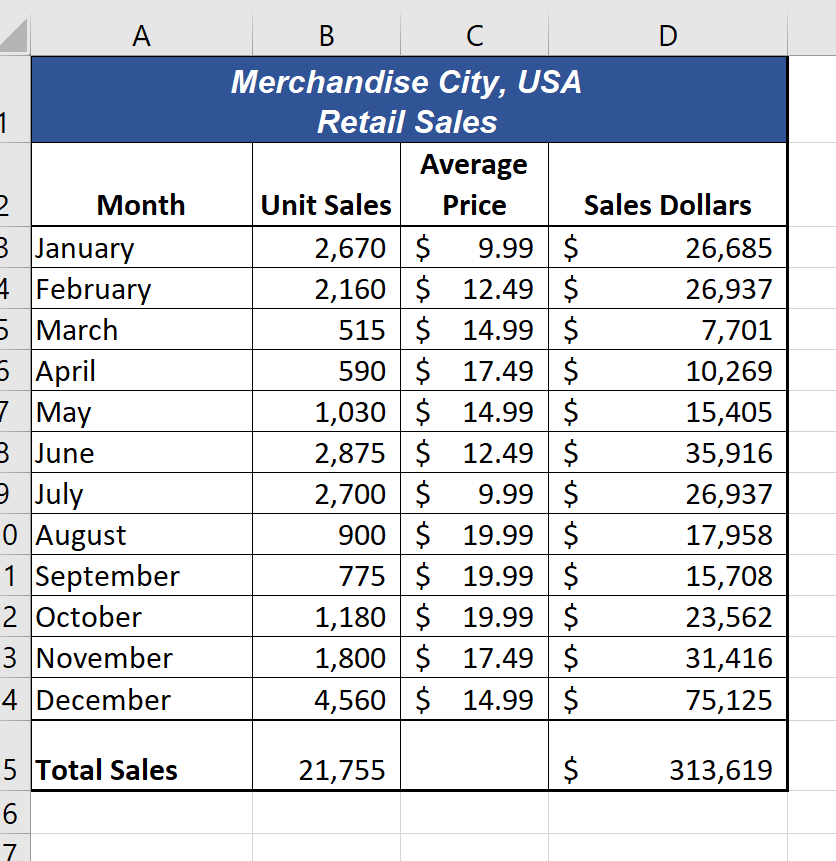

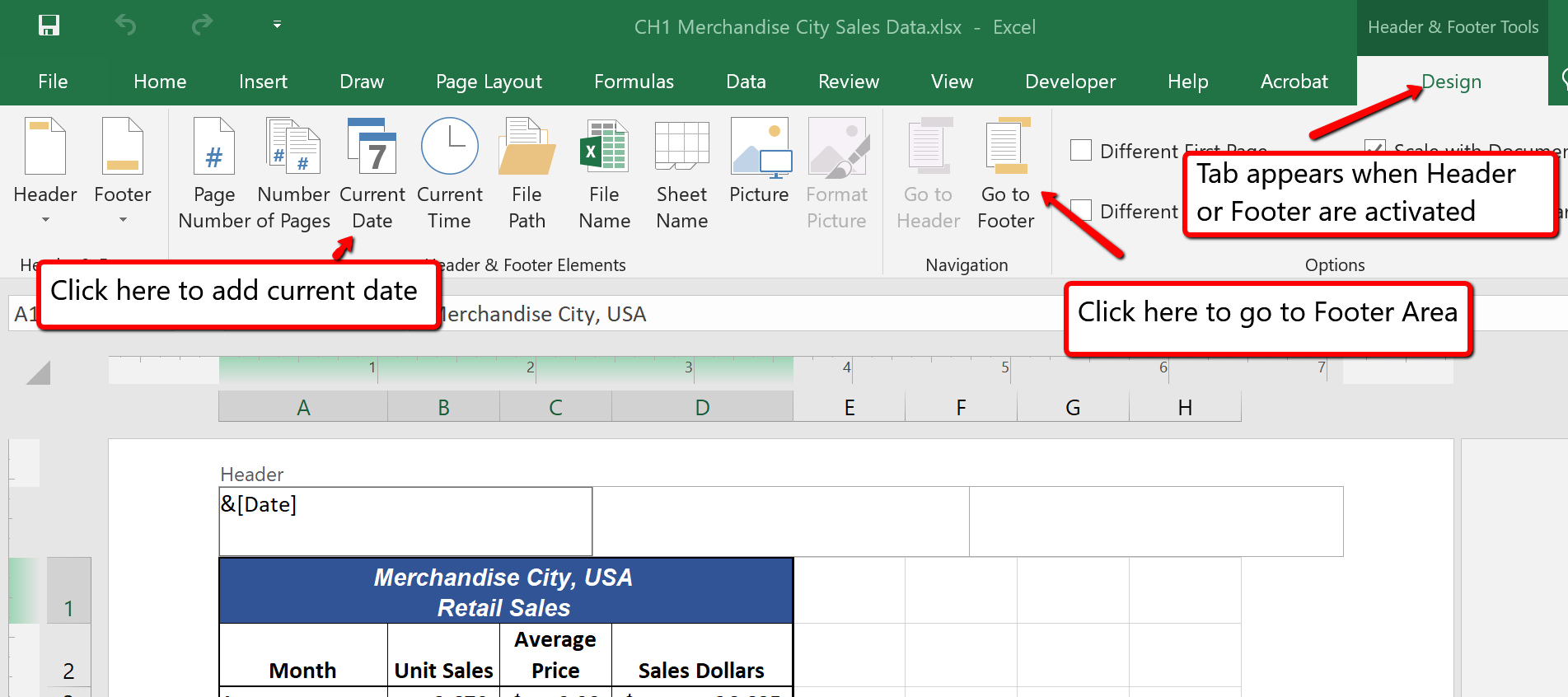

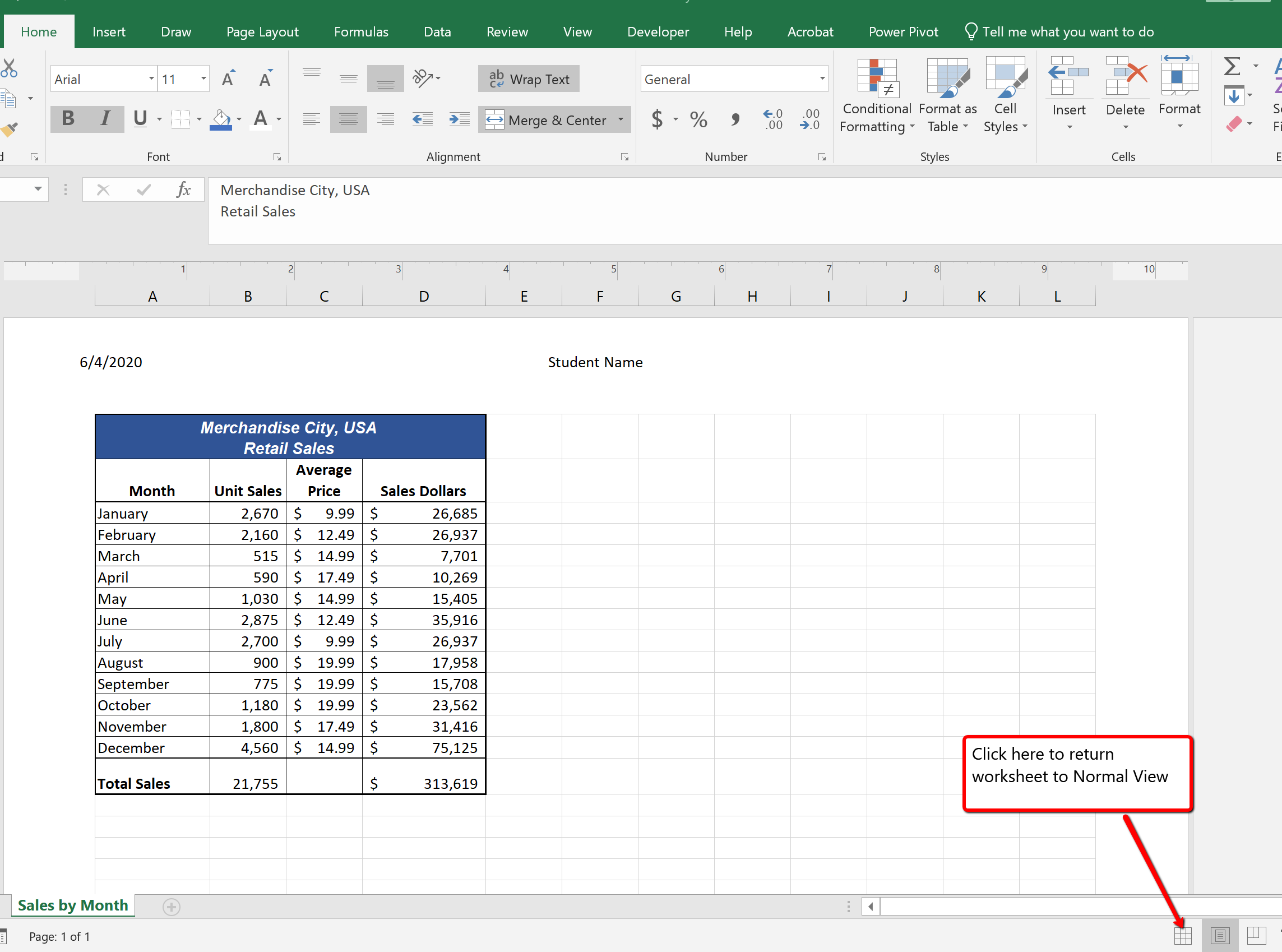

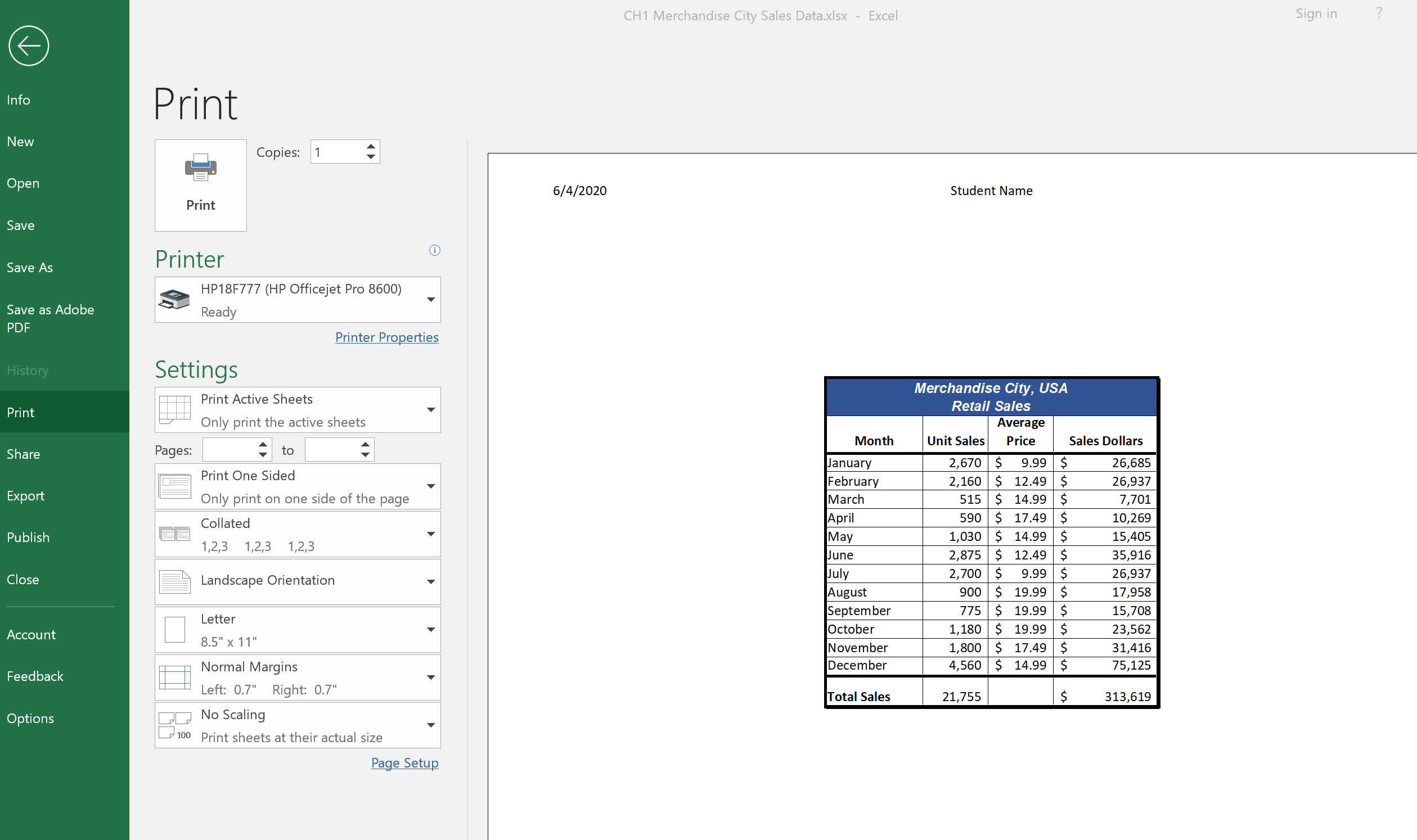

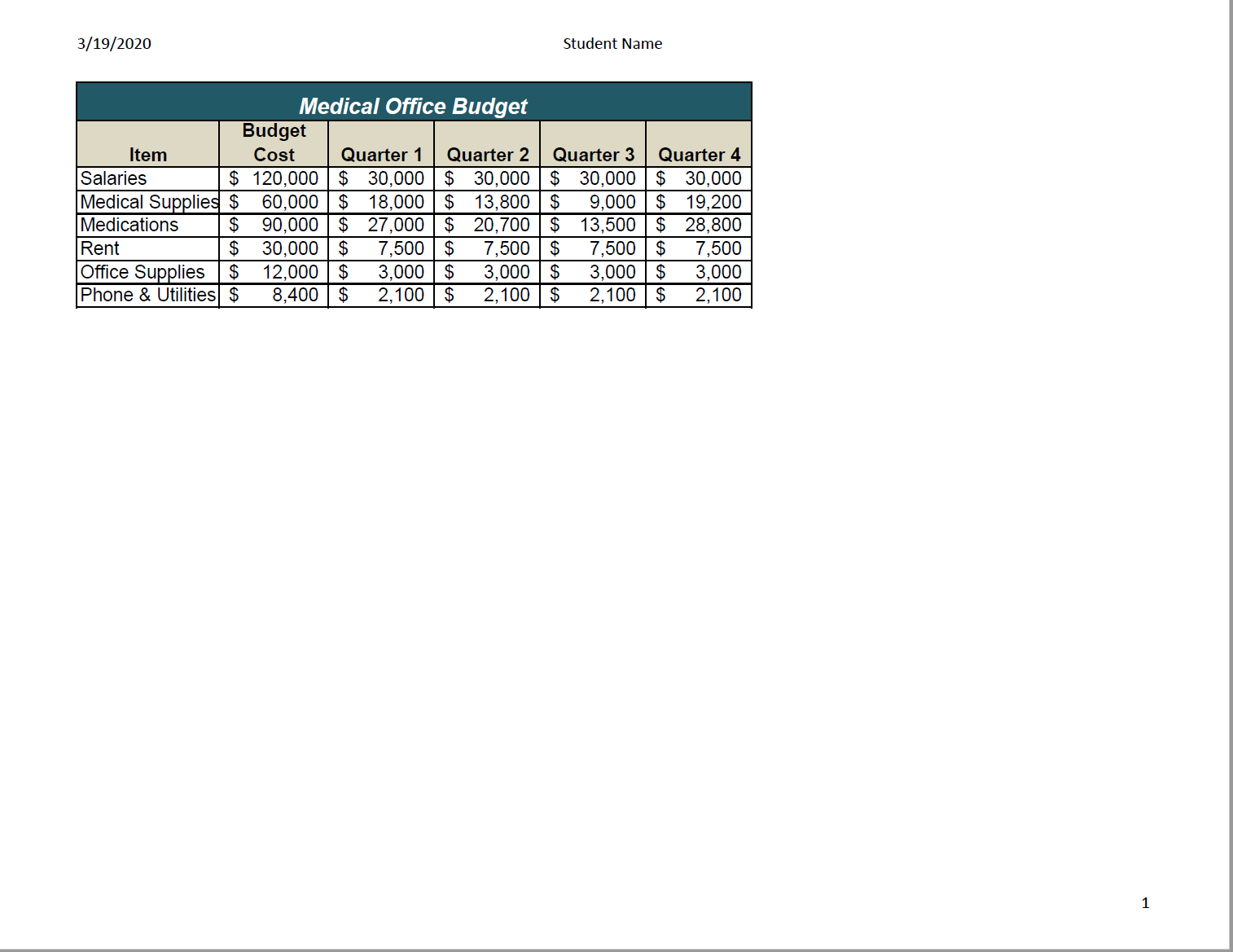

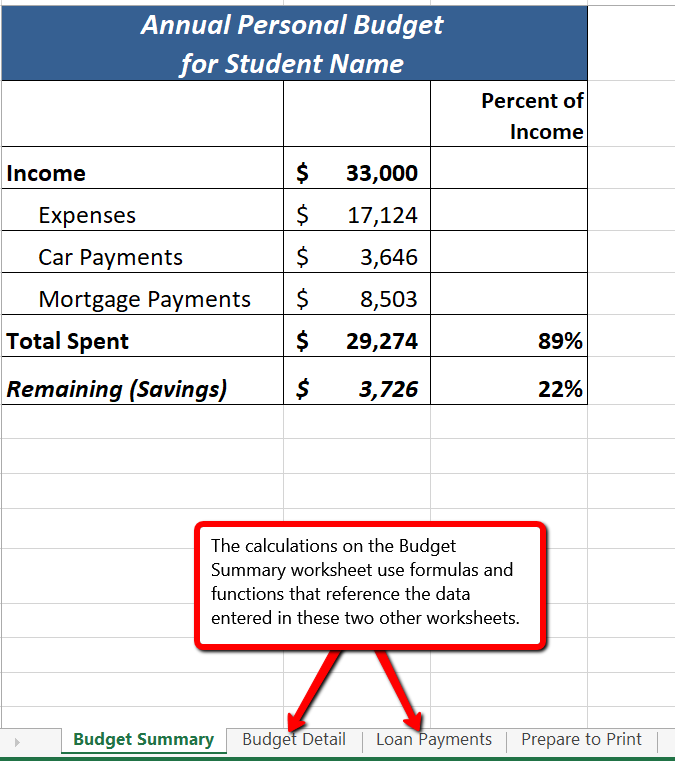

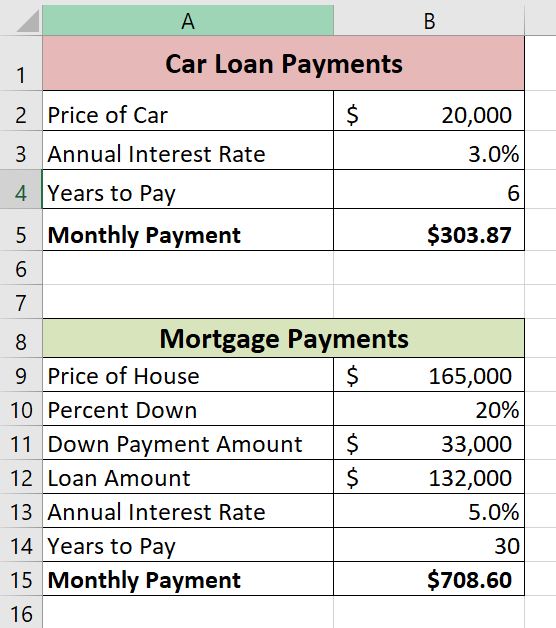

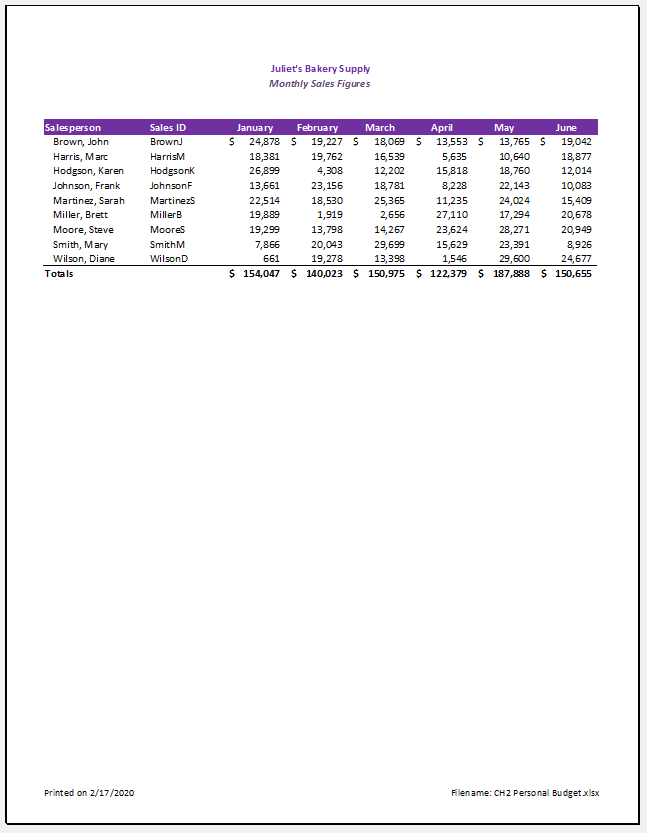

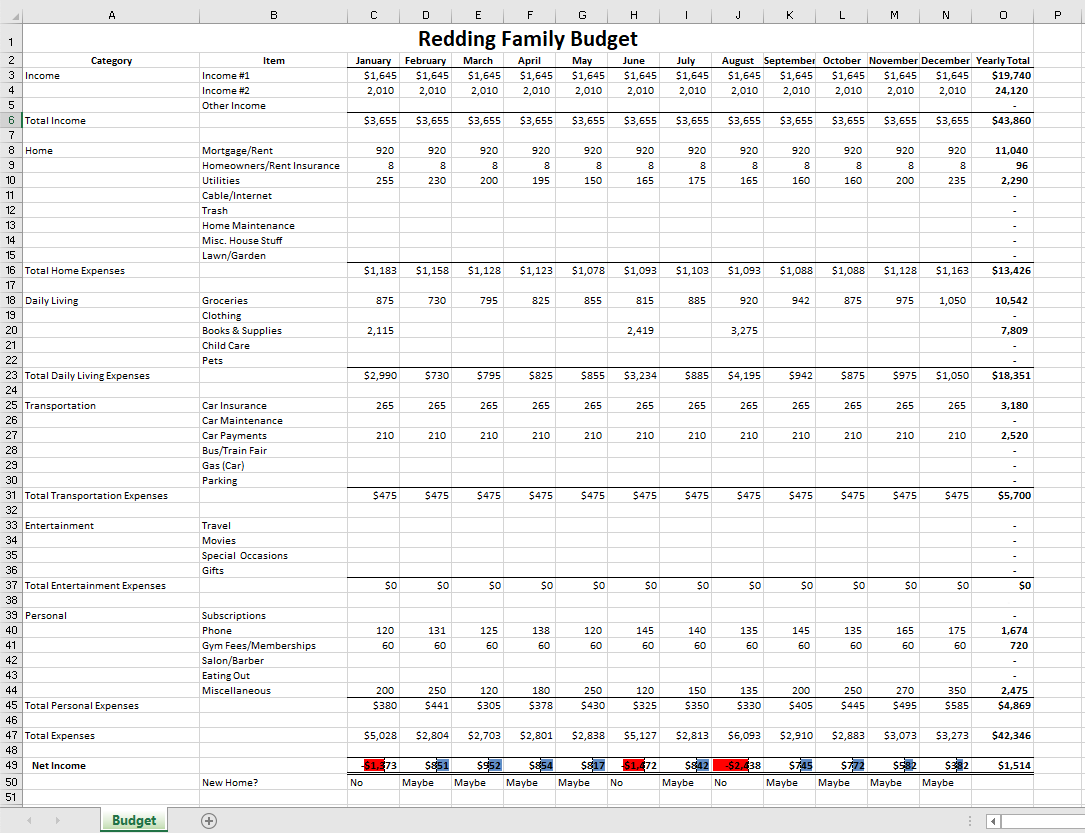

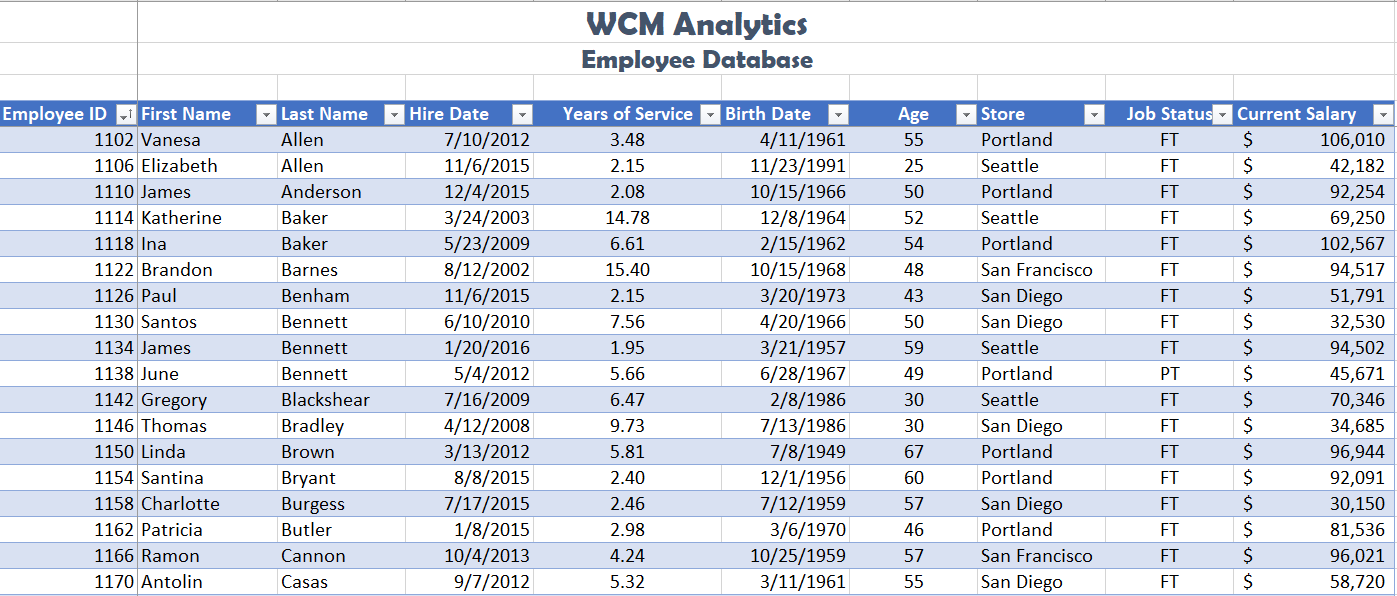

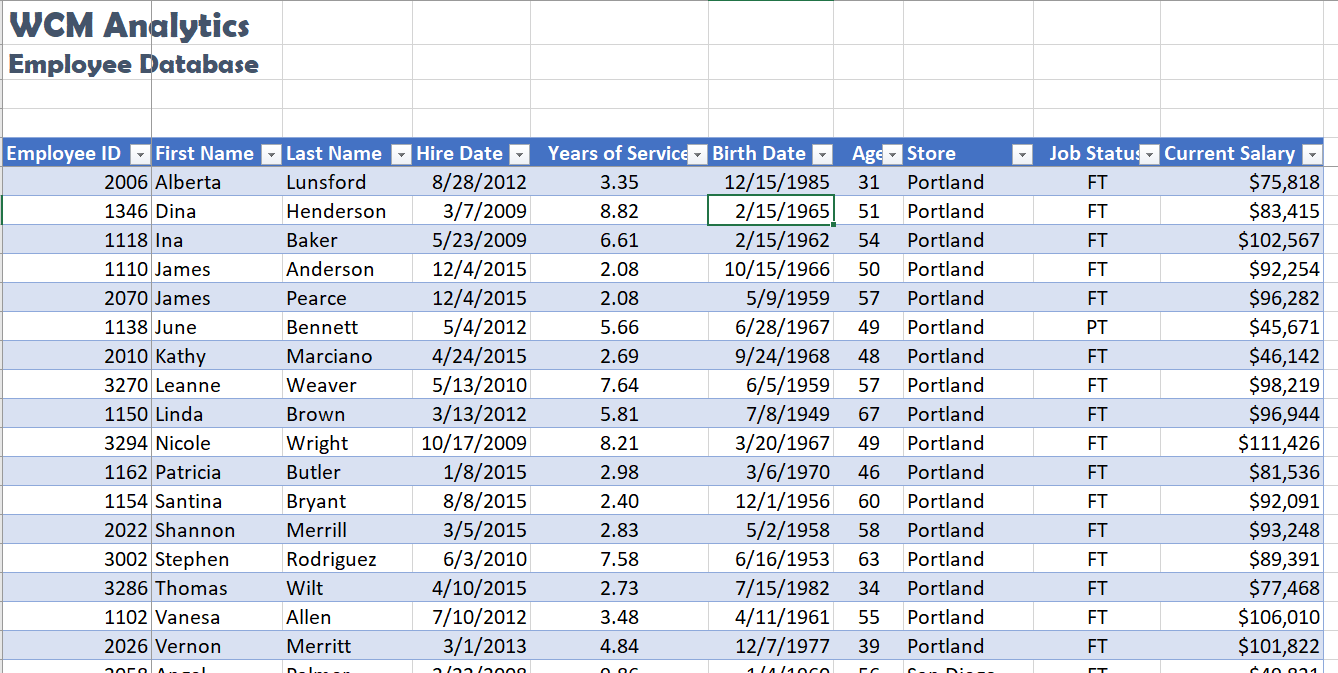

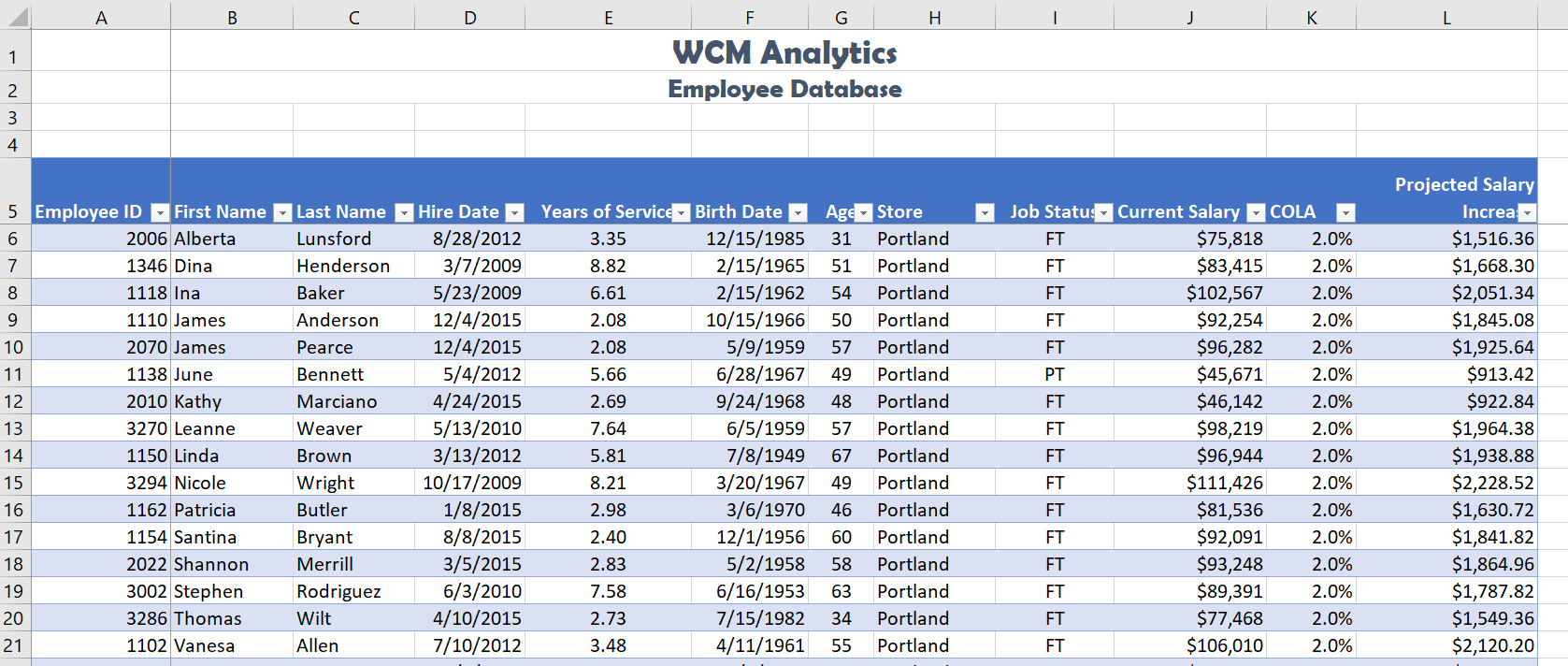

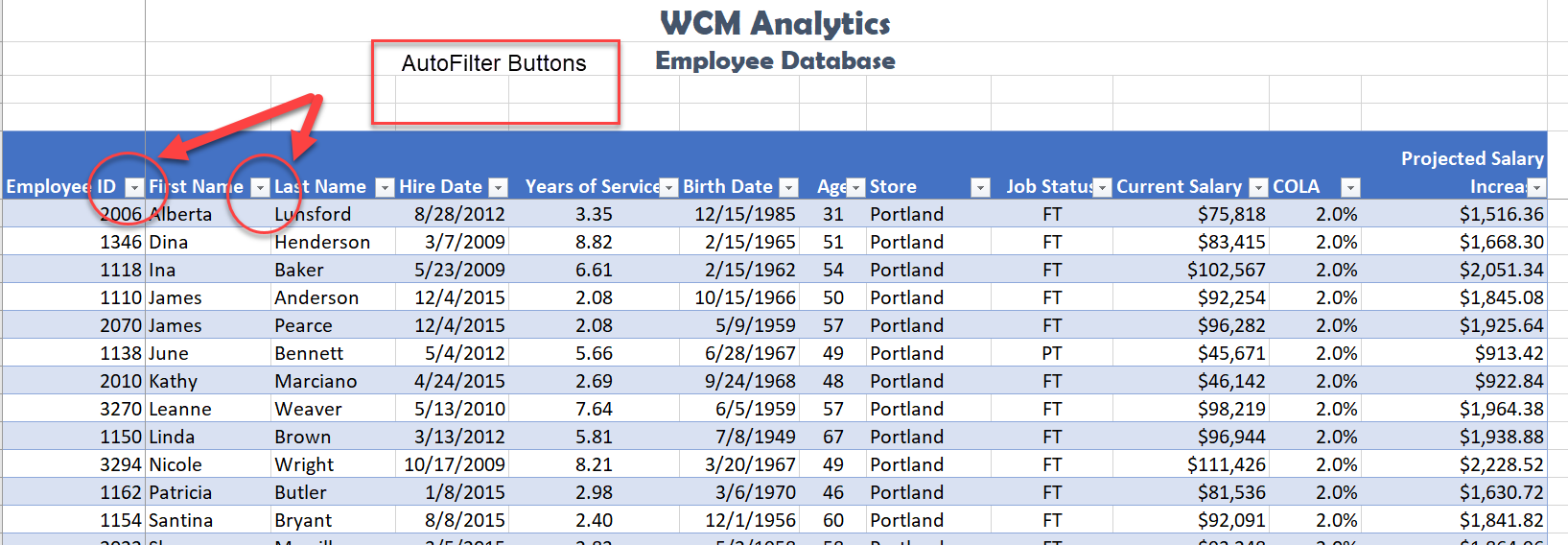

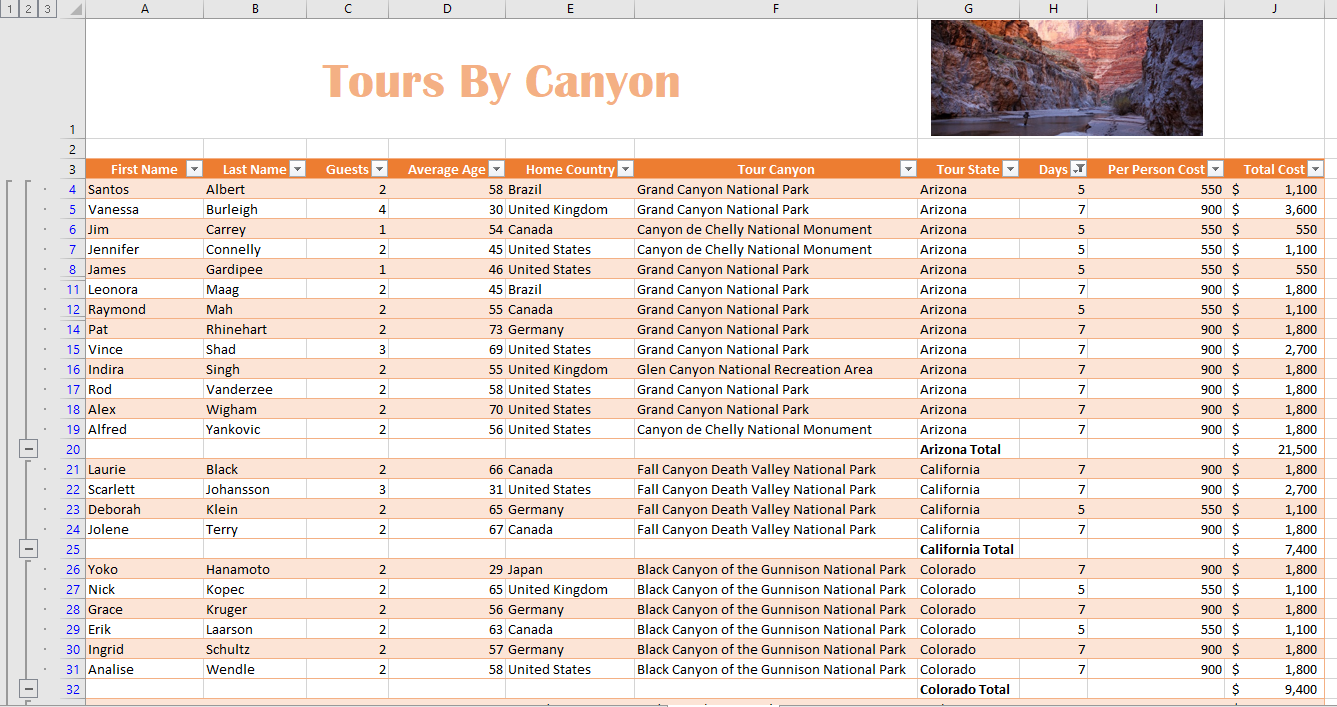

Figure 1.1 shows a completed Excel worksheet that will be constructed in this chapter. The worksheet contains sales data for a hypothetical merchandise retail company. The worksheet data can help a retailer analyze the business and determine, for example, the number of salespeople needed for each month.

The Excel for Windows and Excel for Mac software versions are very similar. Most of the features, tools, and commands are available in both versions. There are, however, some differences in the Excel interface. There are also a few features that are not available in the Excel for Mac version. The screenshots and step-by-step instructions in this textbook are specific to Excel for Windows. We have attempted to provide alternate screenshots and instructions for the Mac version when the differences are significant. When you see this icon ![](), it means we are providing information specific to Mac users.


The Excel Workbook

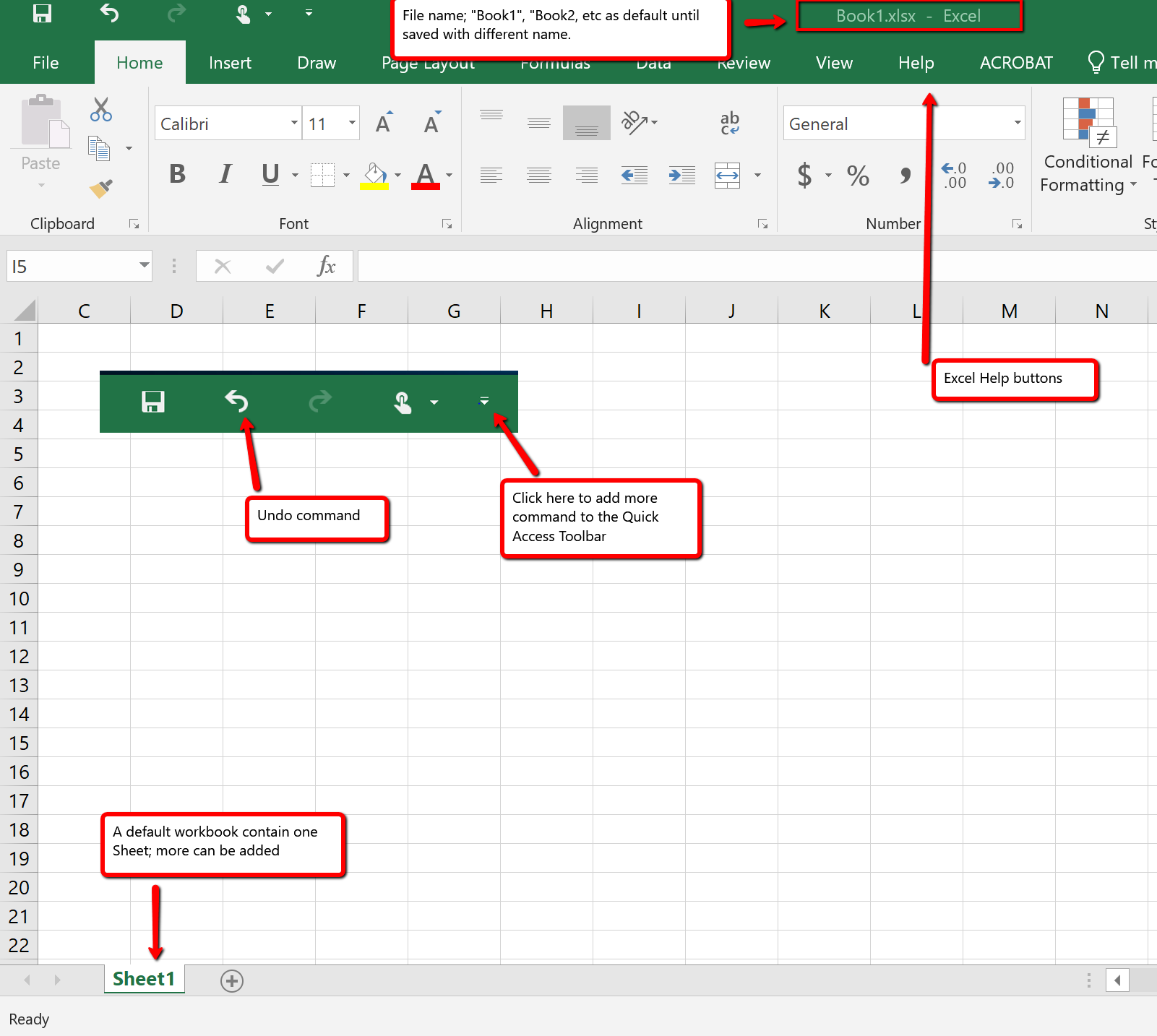

A workbook is an Excel file that contains one or more worksheets (also referred to as spreadsheets). Excel will assign a file name to the workbook, such as Book1, Book2, Book3, and so on, depending on how many new workbooks are opened. Figure 1.2 shows a blank workbook after starting Excel. Take some time to familiarize yourself with this screen. Your screen may be slightly different based on the version you’re using.

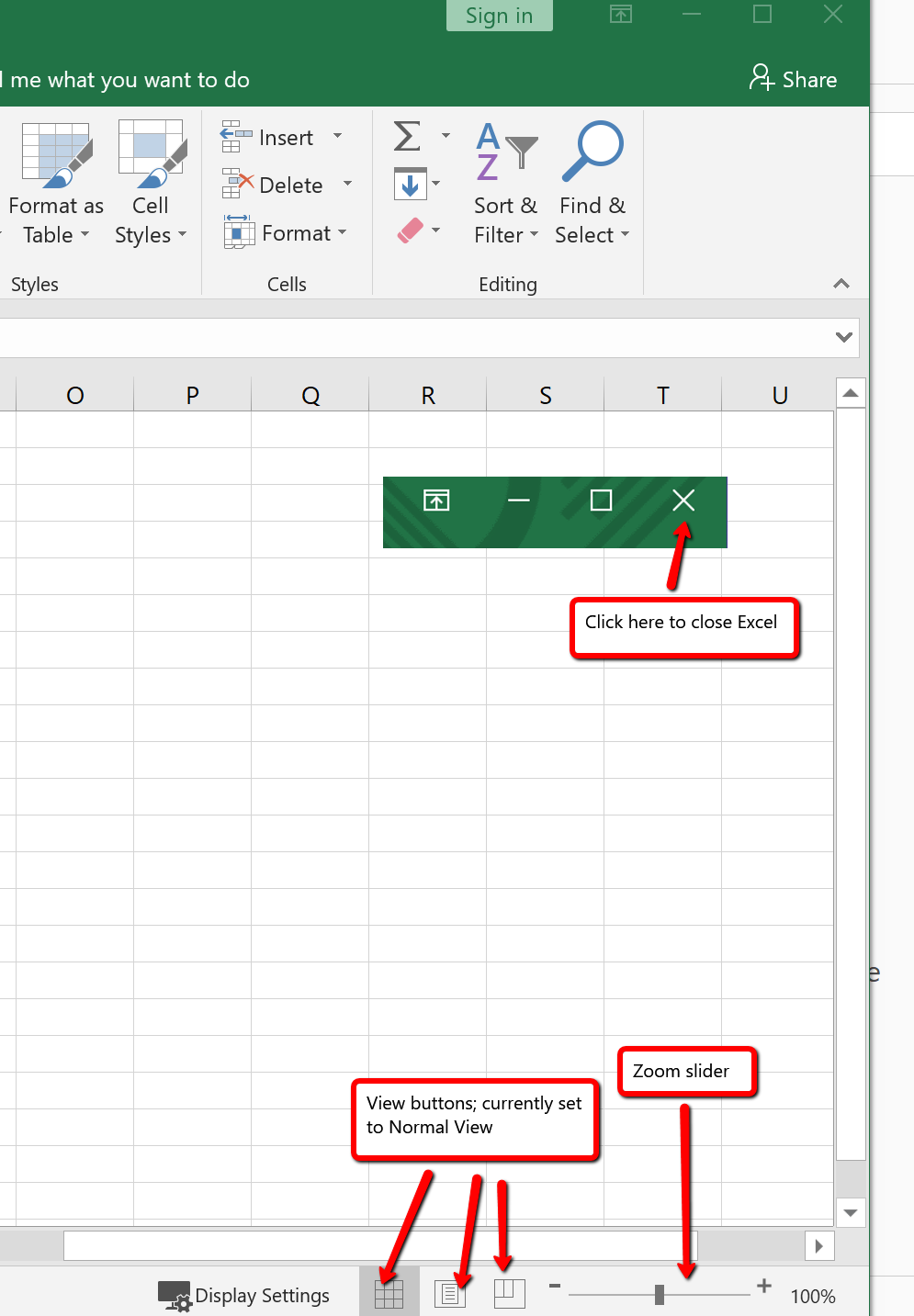

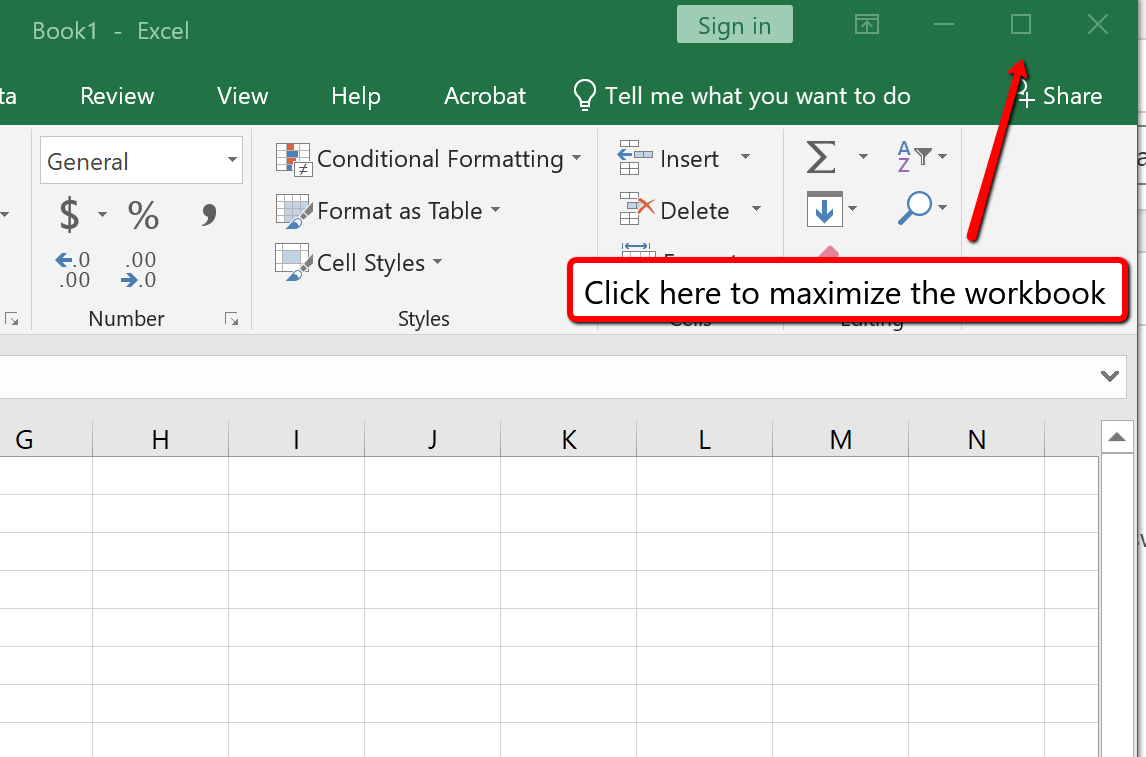

Your workbook should already be maximized (or shown at full size) once Excel is started, as shown in Figure 1.2. However, if your screen looks like Figure 1.3 after starting Excel, you should click the Maximize button, as shown in the figure.

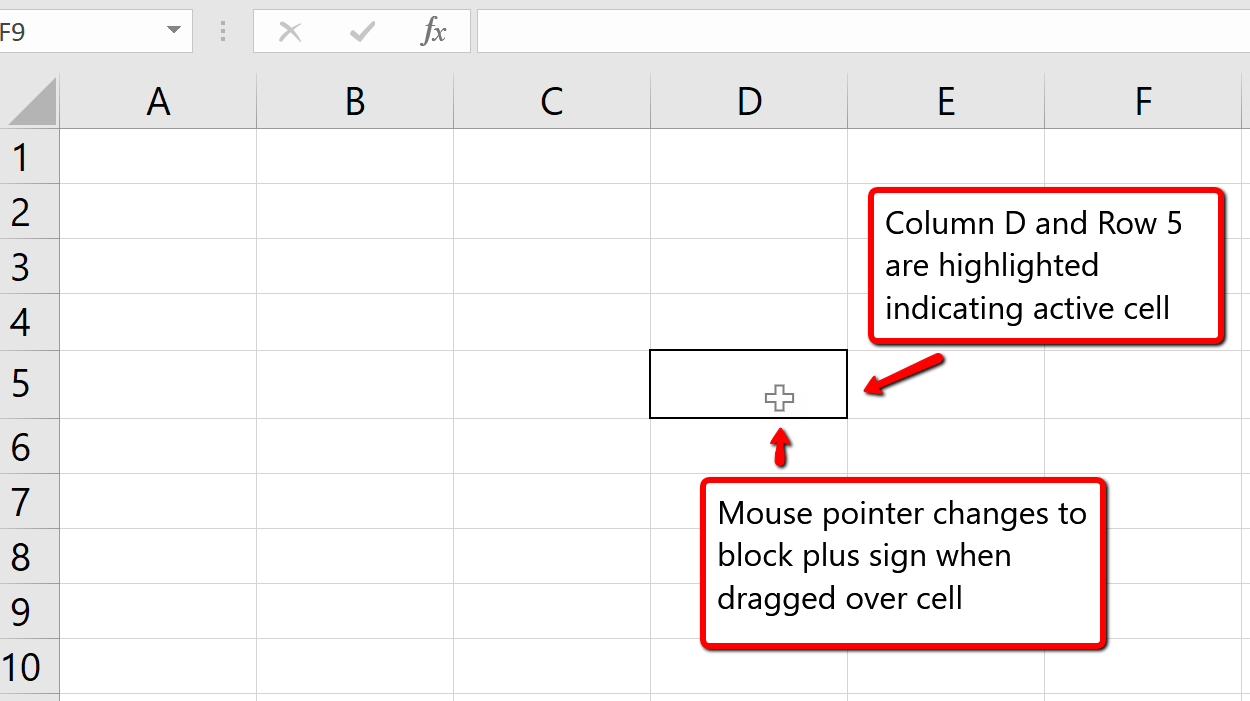

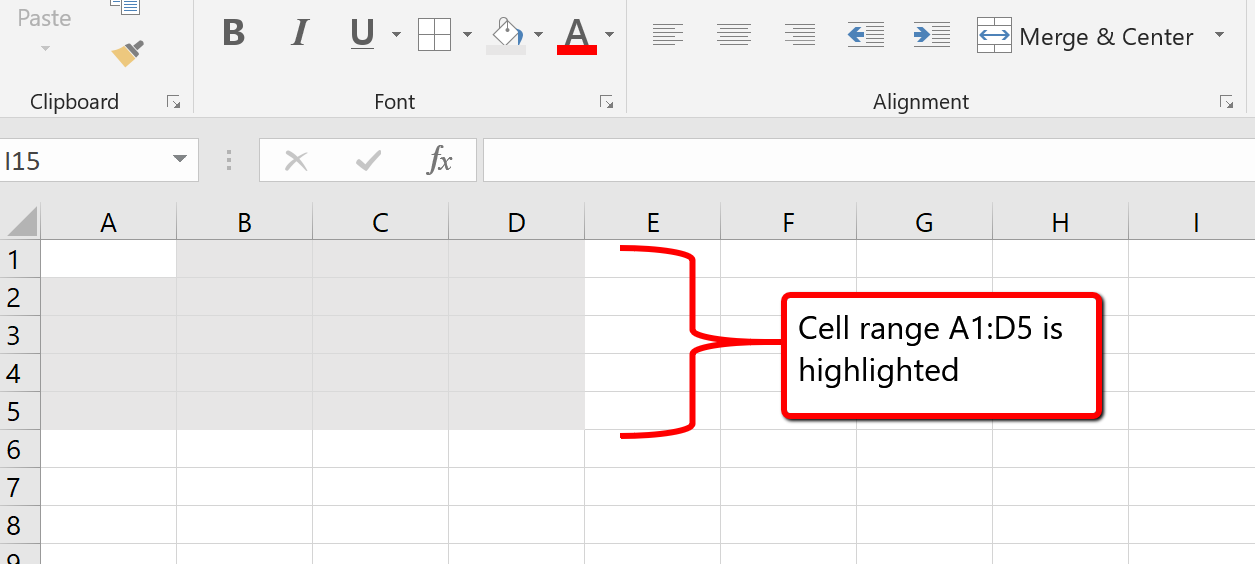

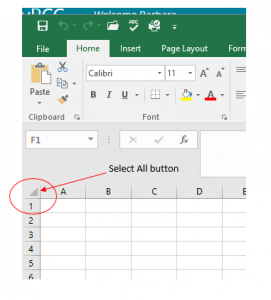

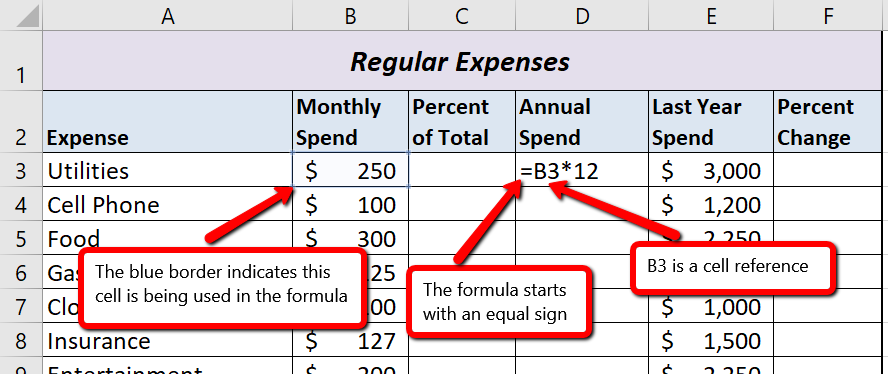

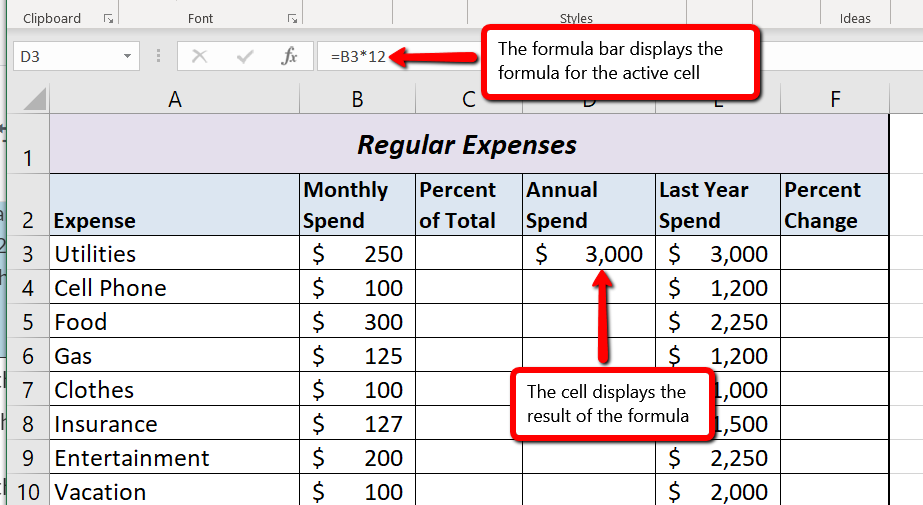

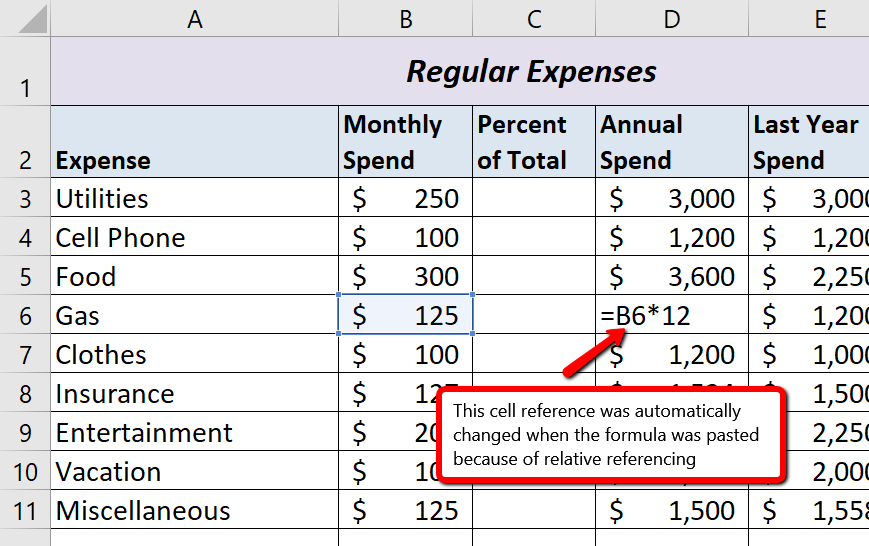

Data are entered and managed in an Excel worksheet. The worksheet contains many rectangles called cells for entering numeric and non-numeric data. Each cell in an Excel worksheet has an address, which is defined by a column letter followed by a row number. For example, the cell that is currently activated in Figure 1.3 is A1. This would be referred to as cell location A1 or cell reference A1. The following steps explain how you can navigate in an Excel worksheet:

This is referred to as a cell range and is documented as follows: A1:D5. Any two cell locations separated by a colon are known as a cell range. The first cell is the top left corner of the range, and the second cell is the lower right corner of the range.
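To make the addressing scheme concrete, here is a minimal Python sketch that splits a cell reference into its column letter and row number and counts the cells in a range such as A1:D5. The function names are hypothetical helpers written for this illustration. They are not part of Excel.

```python
import re


def column_to_index(letters):
    """Convert a column label such as 'A' or 'AB' to a 1-based column number."""
    index = 0
    for ch in letters.upper():
        index = index * 26 + (ord(ch) - ord("A") + 1)
    return index


def parse_cell(ref):
    """Split a reference such as 'D5' into (column number, row number)."""
    match = re.fullmatch(r"([A-Za-z]+)(\d+)", ref)
    if match is None:
        raise ValueError(f"not a cell reference: {ref!r}")
    return column_to_index(match.group(1)), int(match.group(2))


def range_size(cell_range):
    """Return (rows, columns) for a range written as top-left:bottom-right."""
    first, last = cell_range.split(":")
    col1, row1 = parse_cell(first)
    col2, row2 = parse_cell(last)
    return row2 - row1 + 1, col2 - col1 + 1


rows, cols = range_size("A1:D5")
print(rows, cols, rows * cols)  # 5 rows, 4 columns, 20 cells
```

The range A1:D5 spans columns A through D and rows 1 through 5, so it covers 20 cells.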

Basic Worksheet Navigation

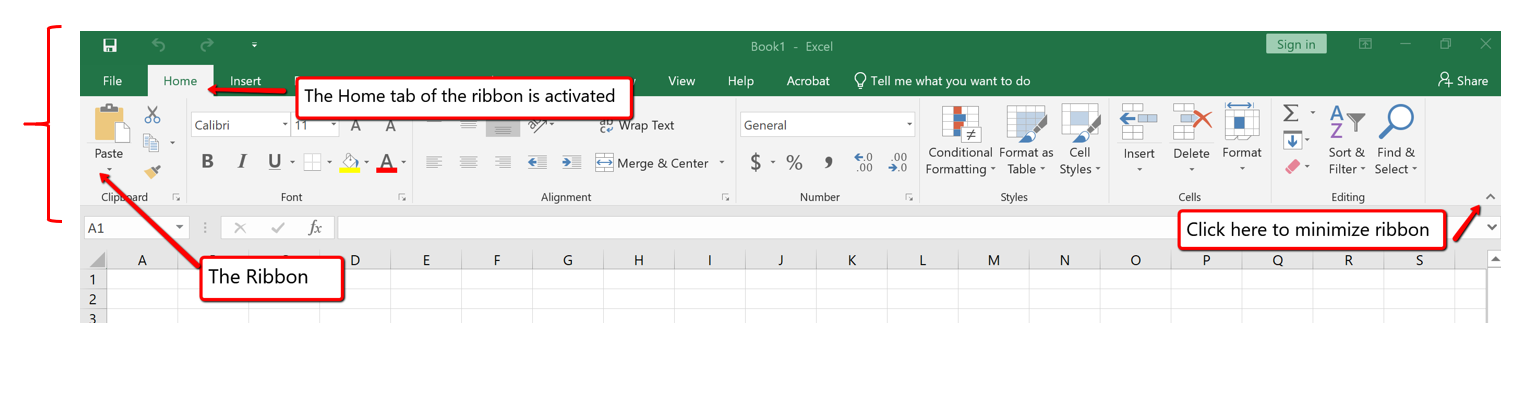

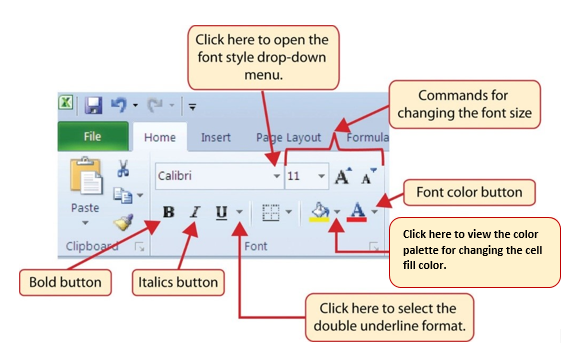

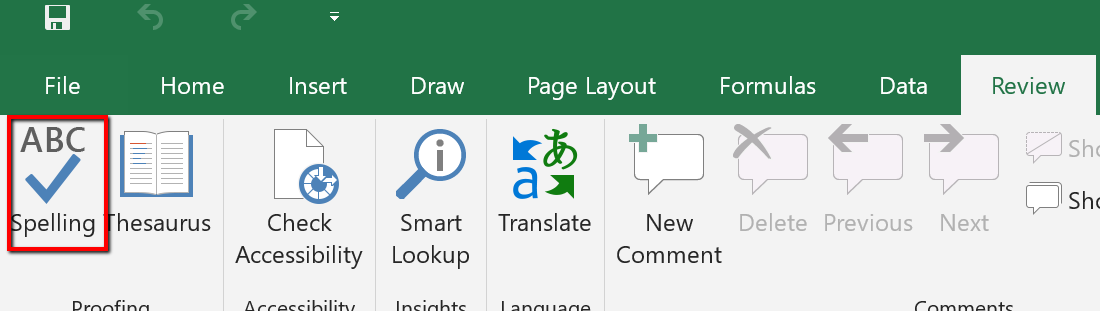

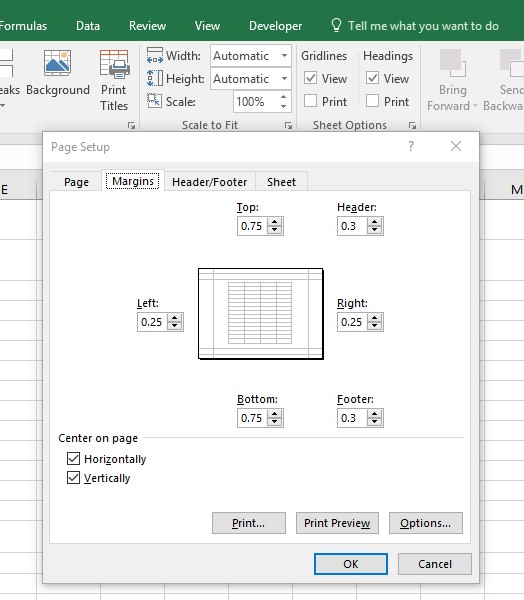

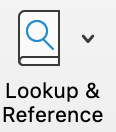

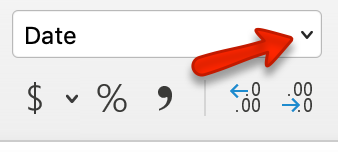

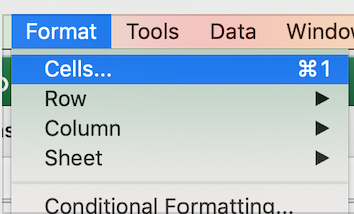

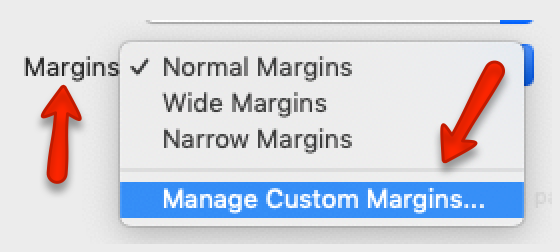

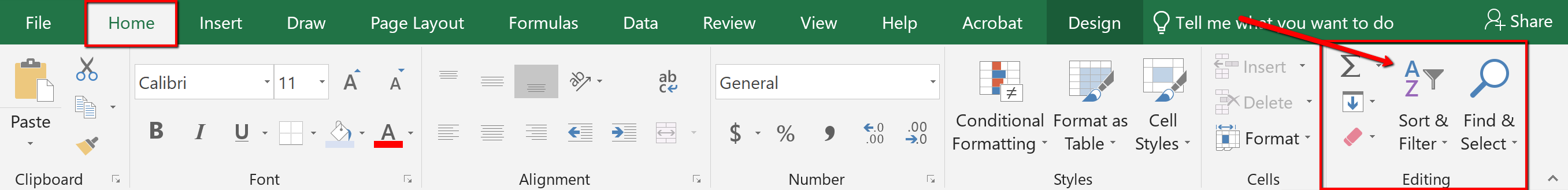

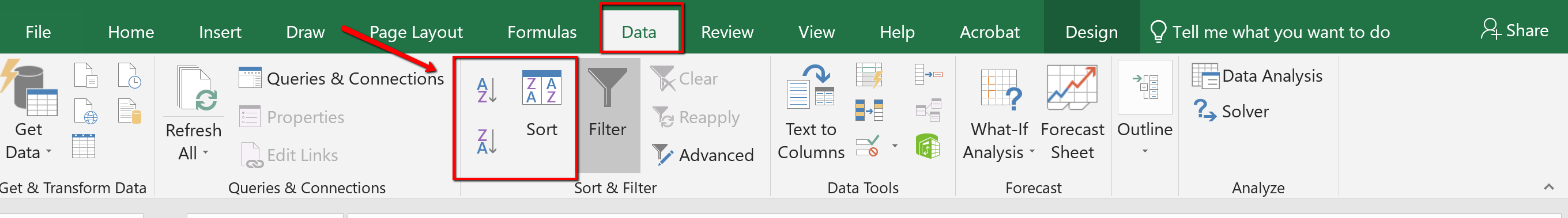

Excel’s features and commands are found in the Ribbon, which is the upper area of the Excel screen that contains several tabs running across the top. Each tab provides access to a different set of Excel commands. Figure 1.6 shows the commands available in the Home tab of the Ribbon. Table 1.1 “Command Overview for Each Tab of the Ribbon” provides an overview of the commands that are found in each tab of the Ribbon.

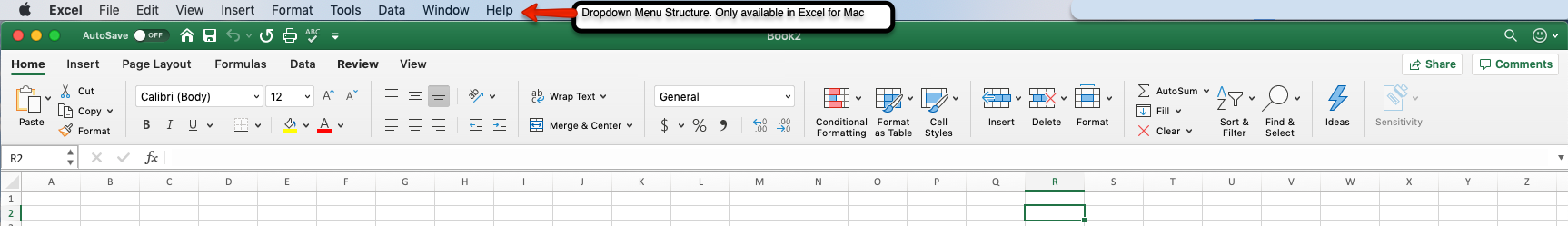

![]() The Excel for Mac ribbon, as shown in Figure 1.6a below, has two primary differences:


If you look closely at the Excel Ribbon (see Figure 1.6 above), you will see that the Ribbon is separated into groups of tool buttons, and each group has a title name. On the Home tab, the group title names are “Clipboard”, “Font”, “Alignment”, “Number”, “Styles”, “Cells”, “Editing”, etc. The tool buttons within each group are all related to the group title.

![]() Mac Users Only: The default “View” for the Excel for Mac ribbon does not display these “group title names”. Notice in Figure 1.6a above, there are no group title names. It is a good idea to change this “view” so you can see the group title names. Here are the steps:


Table 1.1 Command Overview for Each Tab of the Ribbon

| Tab Name | Description of Commands |

| File | Also known as the Backstage view of the Excel workbook. Contains all commands for opening, closing, saving, and creating new Excel workbooks. Includes print commands, document properties, e-mailing options, and help features. The default settings and options are also found in this tab. |

| Home | Contains the most frequently used Excel commands. Formatting commands are found in this tab along with commands for cutting, copying, pasting, and for inserting and deleting rows and columns. |

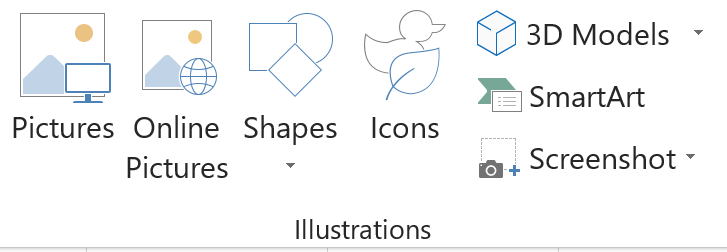

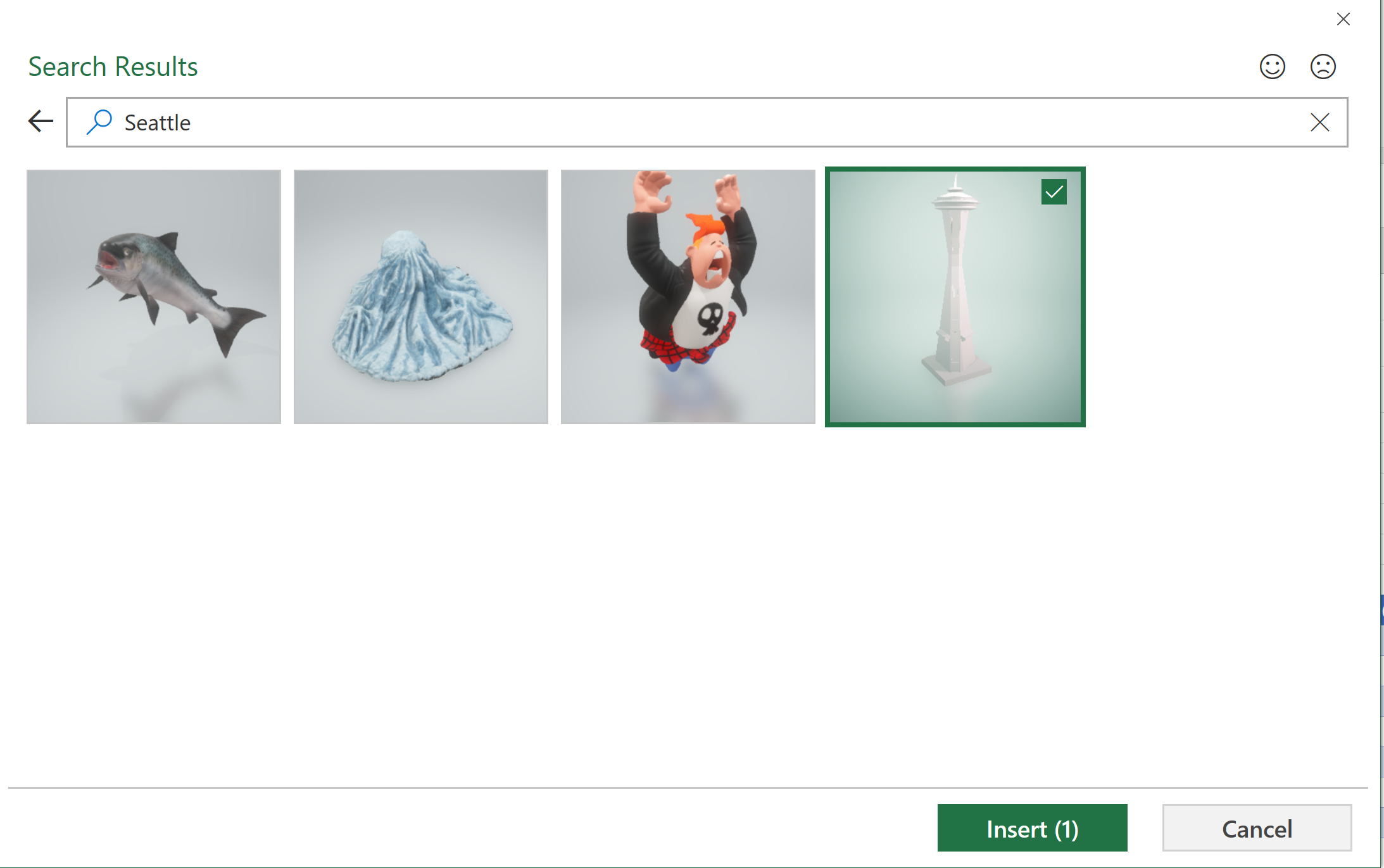

| Insert | Used to insert objects such as charts, pictures, shapes, PivotTables, Internet links, symbols, or text boxes. |

| Page Layout | Contains commands used to prepare a worksheet for printing. Also includes commands used to show and print the gridlines on a worksheet. |

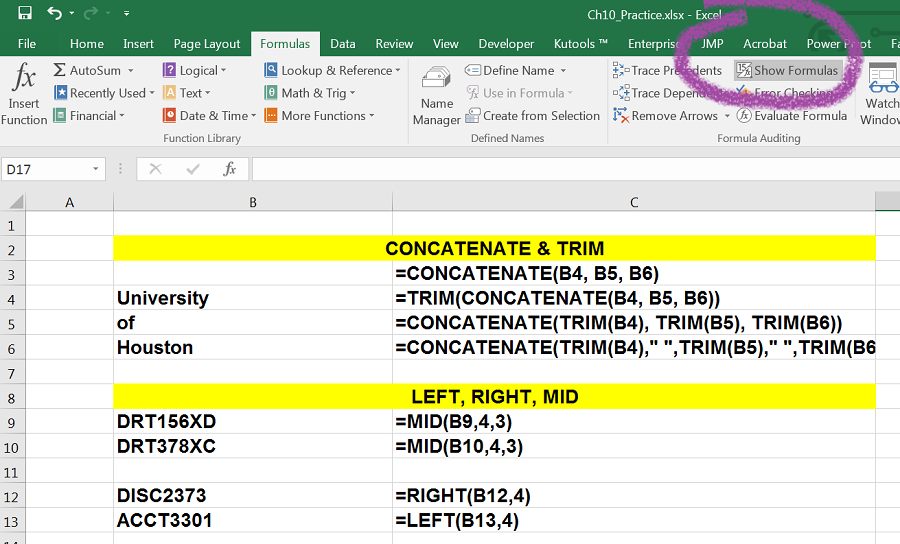

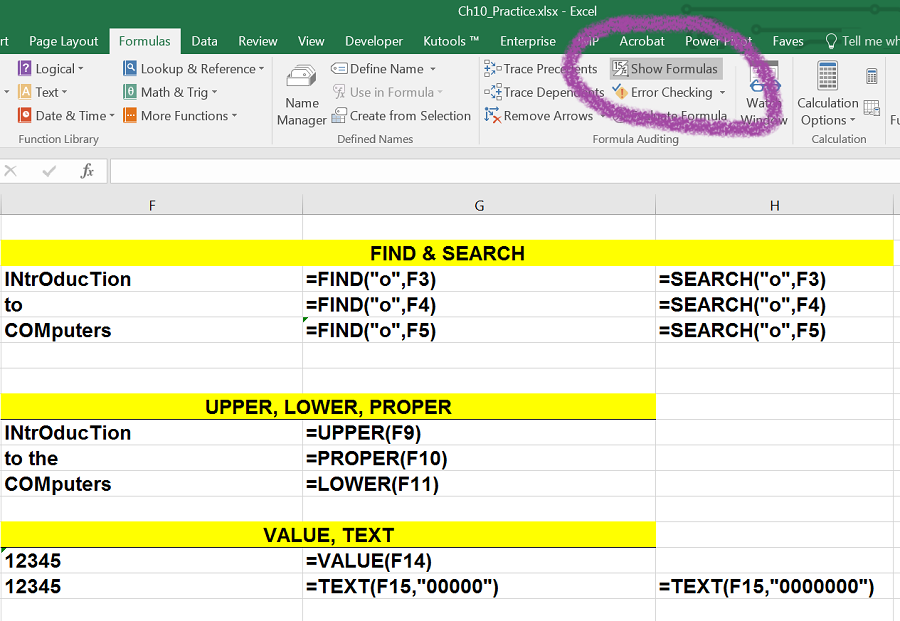

| Formulas | Includes commands for adding mathematical functions to a worksheet. Also contains tools for auditing mathematical formulas. |

| Data | Used when working with external data sources such as Microsoft® Access®, text files, or the Internet. Also contains sorting commands and access to scenario tools. |

| Review | Includes Spelling and Track Changes features. Also contains protection features to password protect worksheets or workbooks. |

| View | Used to adjust the visual appearance of a workbook. Common commands include the Zoom and Page Layout view. |

| Help | This tab provides access to help and support features such as contacting Microsoft support, sending feedback, suggesting a new feature, and community discussion groups. |

| Draw | Provides drawing options for using a digital pen, mouse or finger depending on the type of device (laptop with touch screen, tablet, computer, etc). This tab is not visible by default. See below on how to customize the Ribbon to add or remove tabs. |

| Developer | Provides access to some advanced features such as macros, form controls, and XML commands. This tab is not visible by default. See below on how to customize the Ribbon to add or remove tabs. |

The Ribbon shown in Figure 1.6 and Figure 1.6a (above) is full, or maximized. The benefit of having a full Ribbon is that the commands are always visible while you are developing a worksheet. However, depending on the screen dimensions of your computer, you may find that the Ribbon takes up too much vertical space on your worksheet. If this is the case, you can minimize the Ribbon by clicking the button shown in Figure 1.6. When minimized, the Ribbon will show only the tabs and not the command buttons. When you click on a tab, the command buttons will appear until you select a command or click anywhere on your worksheet.

![]() To hide the Ribbon with Excel for Mac you can use the keyboard shortcut:


Hold down the Command and Option keys and tap the R key.

The same keyboard shortcut will unhide the Ribbon as well.

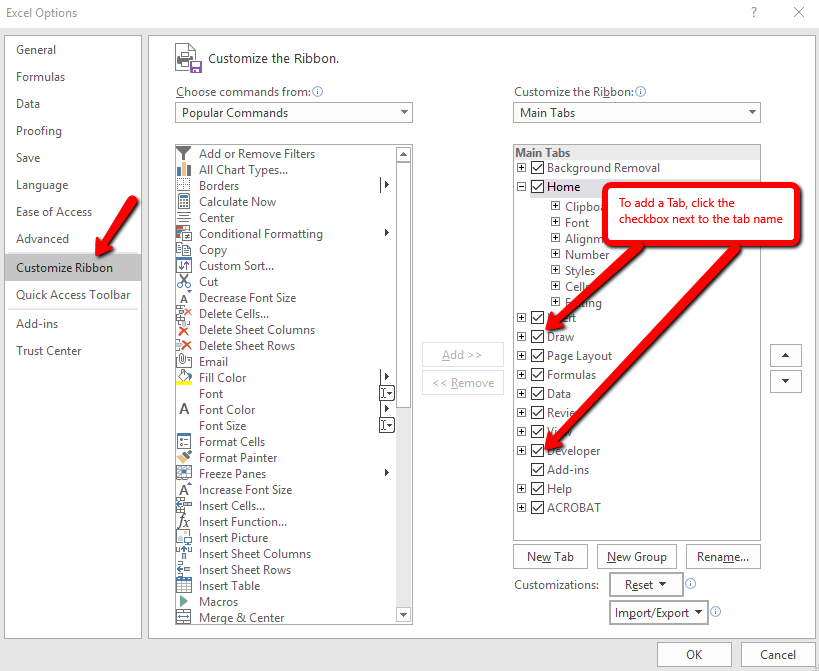

Here are the steps to add additional tabs to the Excel Ribbon

Minimizing or Maximizing the Ribbon

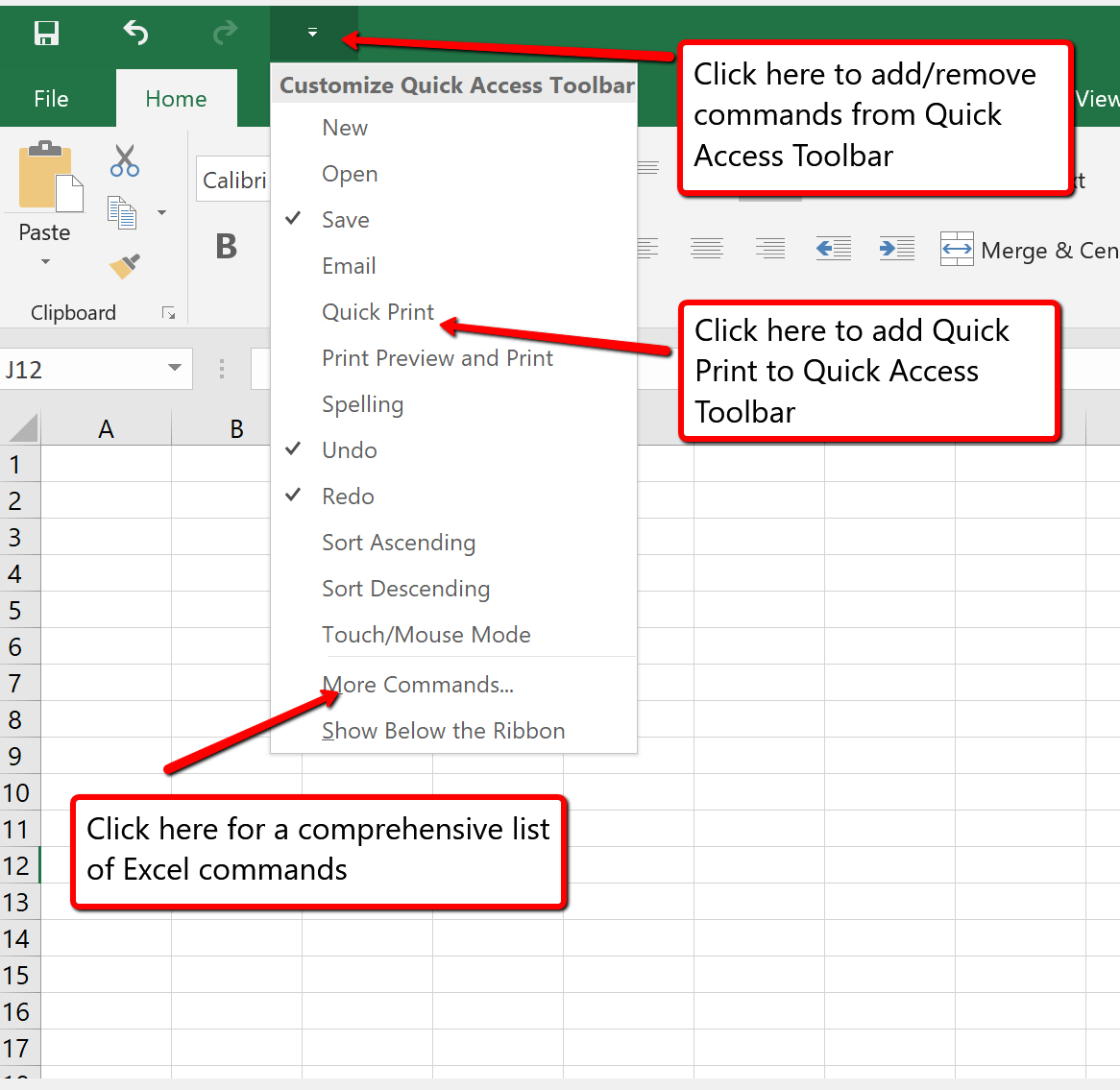

The Quick Access Toolbar is found at the upper left side of the Excel screen above the Ribbon, as shown in Figure 1.7. This area provides access to the most frequently used commands, such as Save and Undo. You also can customize the Quick Access Toolbar by adding commands that you use on a regular basis. By placing these commands in the Quick Access Toolbar, you do not have to navigate through the Ribbon to find them. To customize the Quick Access Toolbar, click the down arrow as shown in Figure 1.8. This will open a menu of commands that you can add to the Quick Access Toolbar. If you do not see the command you are looking for on the list, select the More Commands option.

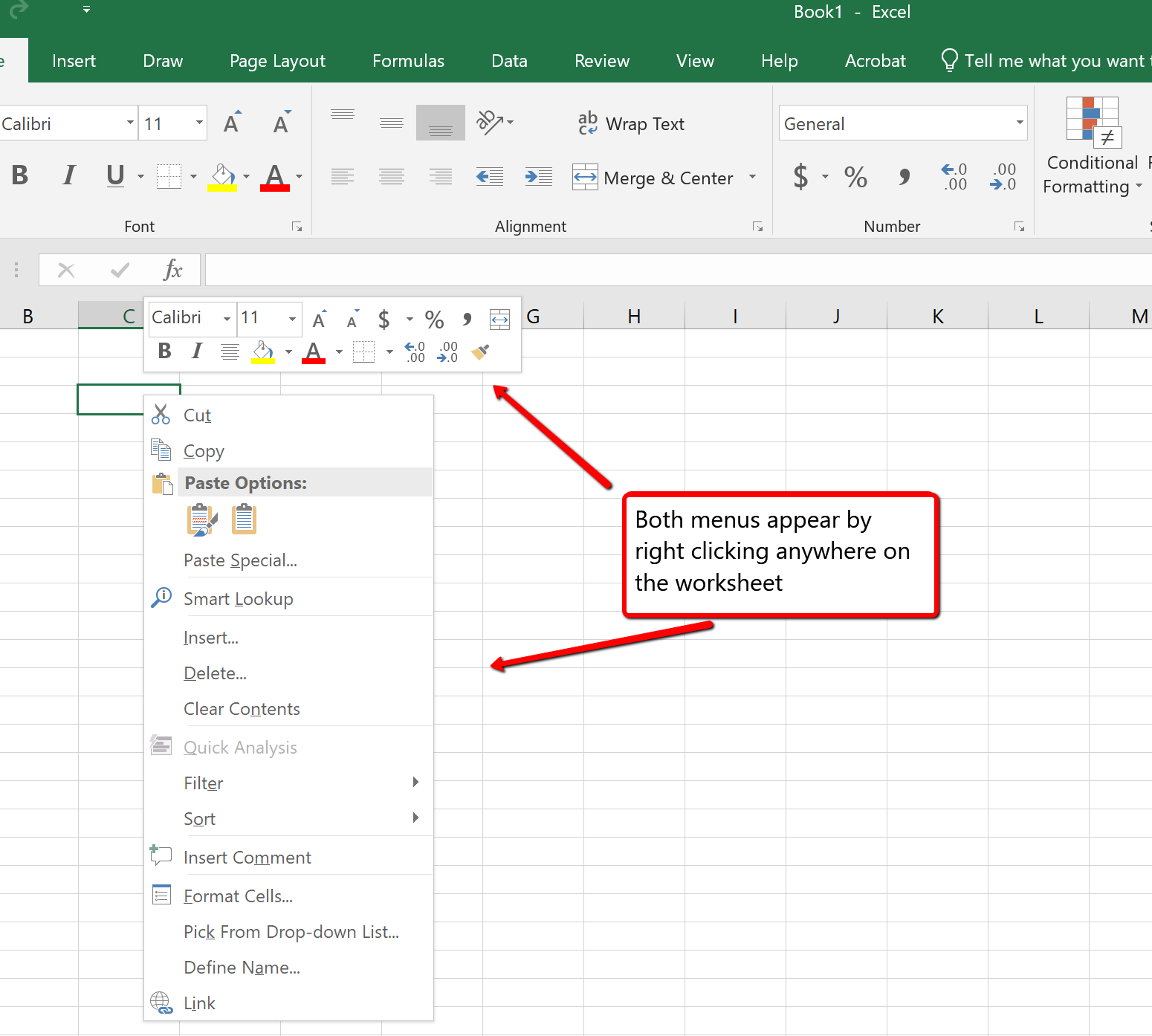

In addition to the Ribbon and Quick Access Toolbar, you can also access many commands by right-clicking anywhere on the worksheet. Figure 1.9 shows an example of the commands available in the right-click menu.

![]() There is no “Right-click” option for Excel for Mac. To access the same commands with Excel for Mac, hold down the Control key and click the mouse button.


The File tab is also known as the Backstage view of the workbook. It contains a variety of features and commands related to the workbook that is currently open, new workbooks, or workbooks stored in other locations on your computer or network. Figure 1.10 shows the options available in the File tab or Backstage view. To leave the Backstage view and return to the worksheet, click the arrow in the upper left-hand corner as shown below.

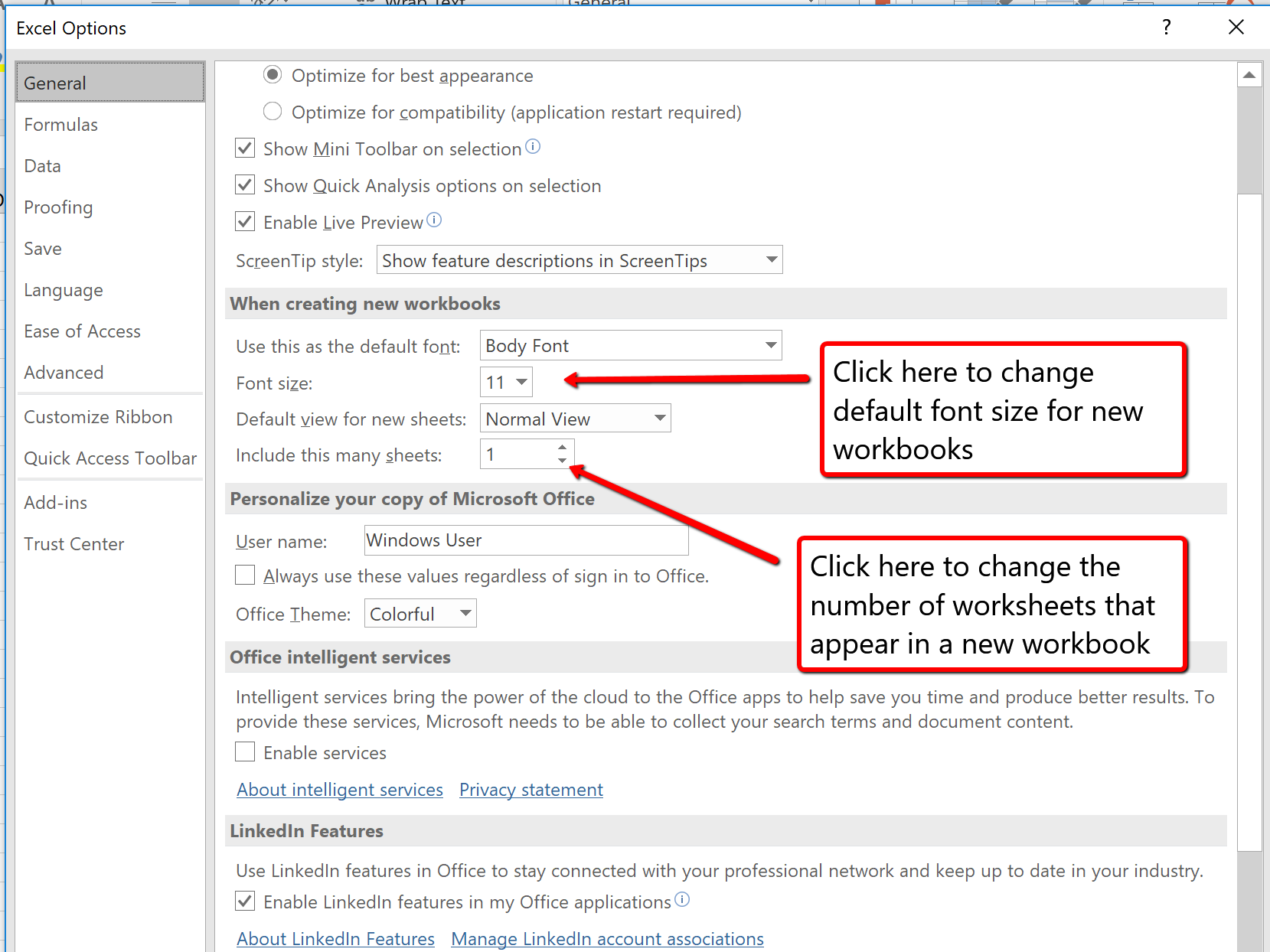

Included in the File tab are the default settings for the Excel application that can be accessed and modified by clicking the Options button. Figure 1.11 shows the Excel Options window, which gives you access to settings such as the default font style, font size, and the number of worksheets that appear in new workbooks.

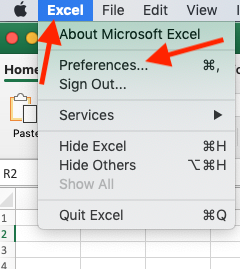

![]() To access these same options in Excel for Mac, you must click the “Excel” menu option and choose “Preferences” (see Figure 1.12 below)


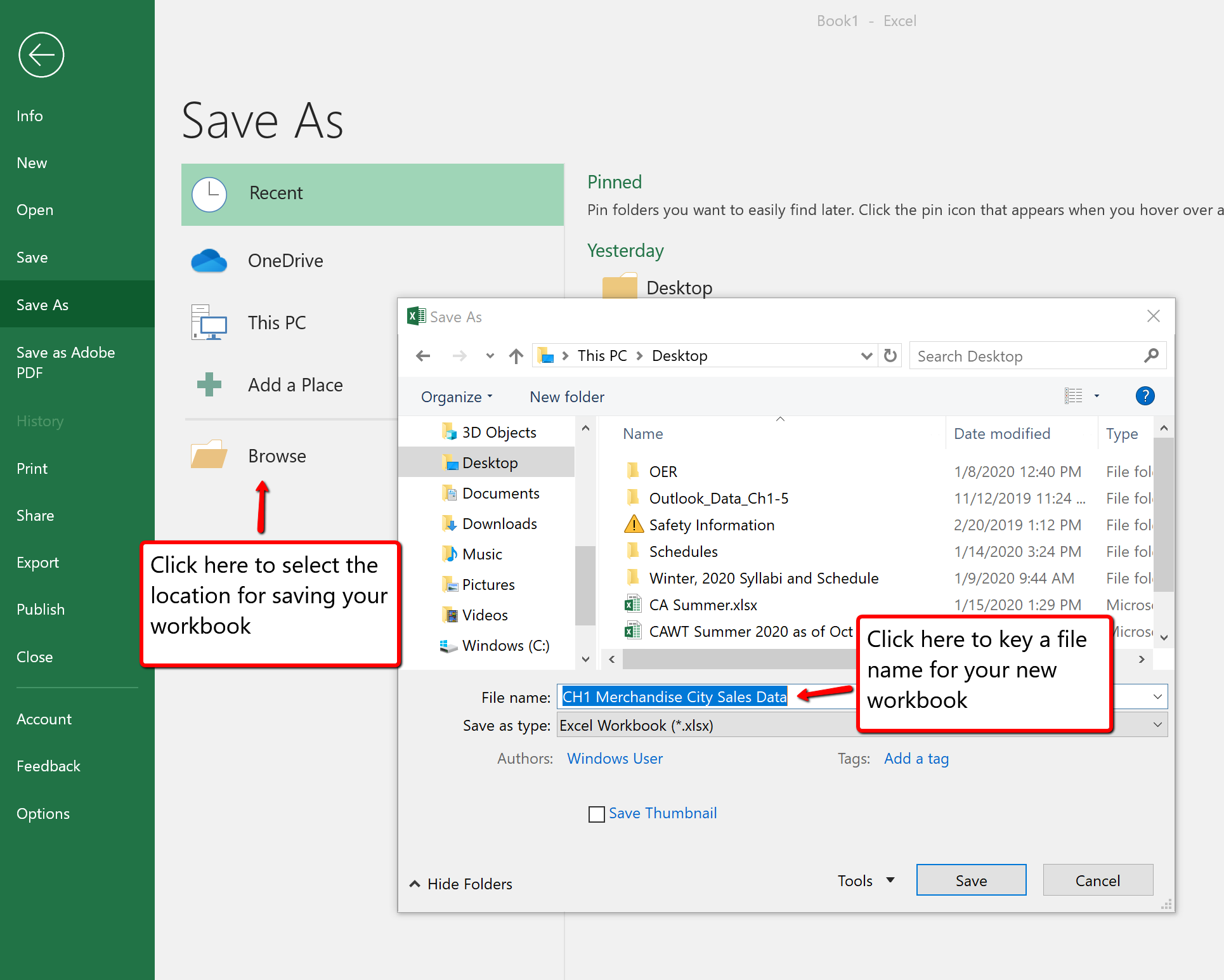

Once you create a new workbook, you will need to change the file name and choose a location on your computer or network to save that file. It is important to remember where you save this workbook, as you will be using this file in Section 1.2 “Entering, Editing, and Managing Data” to construct the workbook shown in Figure 1.1. The process of saving can differ between versions of Excel, so be sure to follow the steps for the version you are using. The following steps explain how to save a new workbook and assign it a file name.

Save As

Saving Workbooks (Save As)

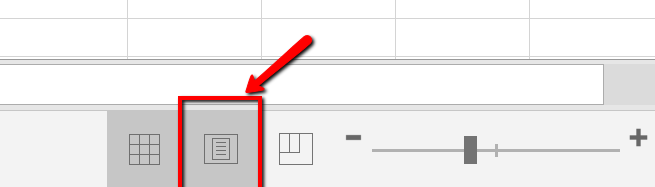

The Status Bar is located below the worksheet tabs on the Excel screen (see Figure 1.13). It displays a variety of information, such as the status of certain keys on your keyboard (e.g., CAPS LOCK), the available views for a workbook, the magnification of the screen, and mathematical functions that can be performed when data are highlighted on a worksheet. You can customize the Status Bar as follows:

The Help feature provides extensive information about the Excel application. Although some of this information may be stored on your computer, the Help window will automatically connect to the Internet, if you have a live connection, to provide you with resources that can answer most of your questions. You can open the Excel Help window by clicking the question mark in the upper right area of the screen or Ribbon. With newer versions of Excel, type your question in the query box and select from the suggested links, or select the question mark from the dropdown list to launch the Excel Help window.

Excel Help

Adapted by Barbara Lave from How to Use Microsoft Excel: The Careers in Practice Series, adapted by The Saylor Foundation without attribution as requested by the work’s original creator or licensee, and licensed under CC BY-NC-SA 3.0.

In this section, we will begin the development of the workbook shown in Figure 1.1. The skills covered in this section are typically used in the early stages of developing one or more worksheets in a workbook.

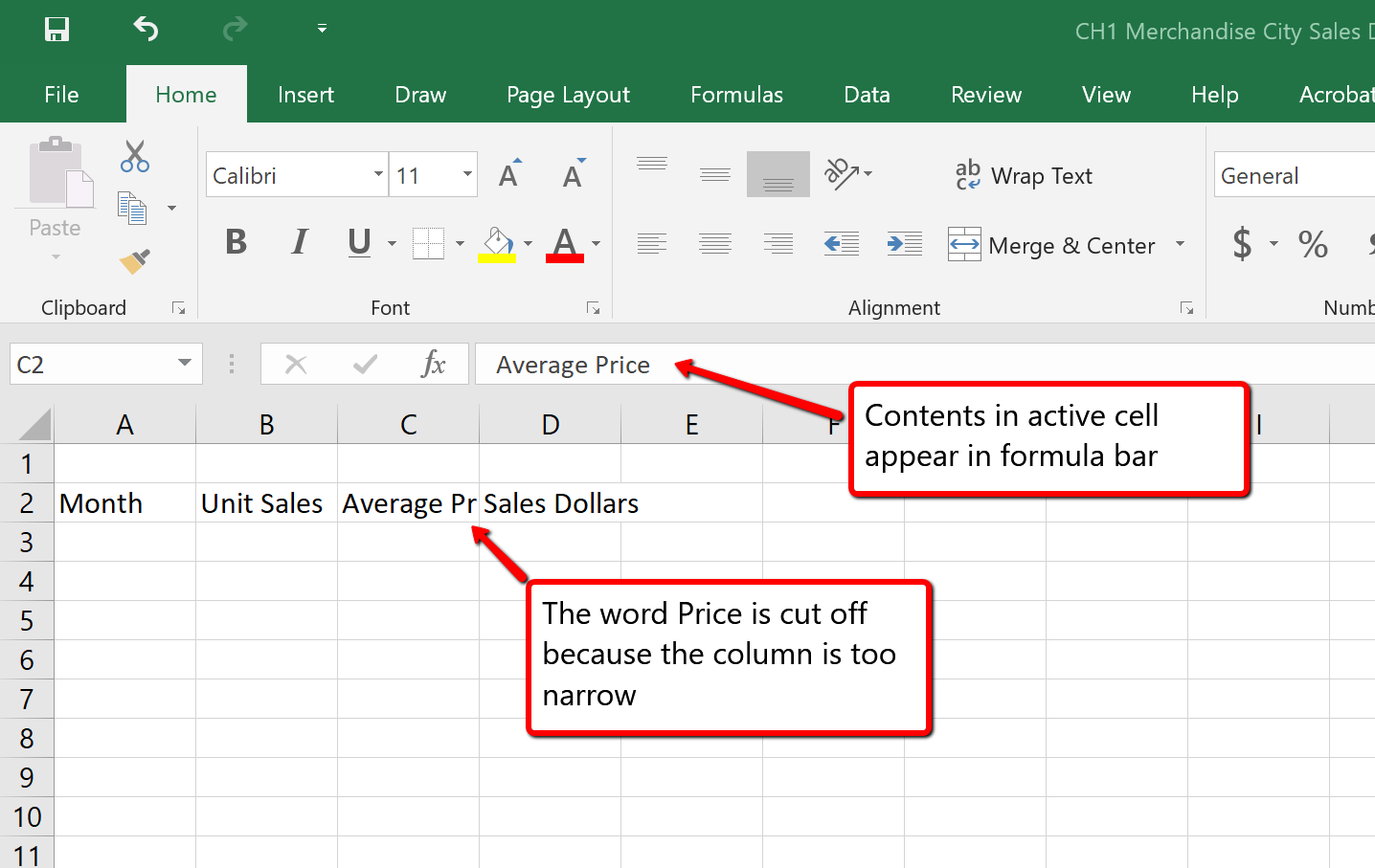

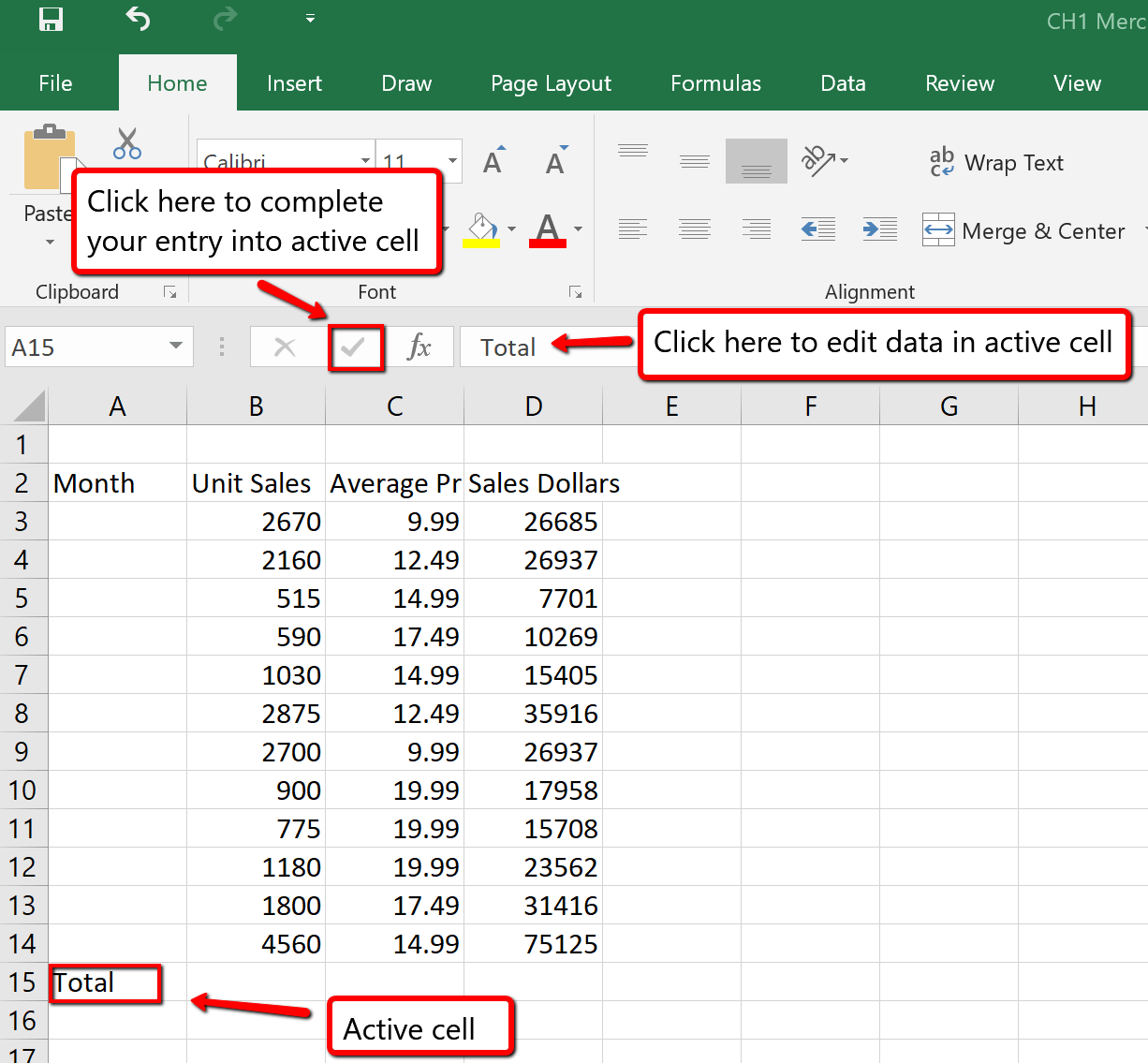

You will begin building the workbook shown in Figure 1.1 by manually entering data into the worksheet. The following steps explain how the column headings in Row 2 are typed into the worksheet:

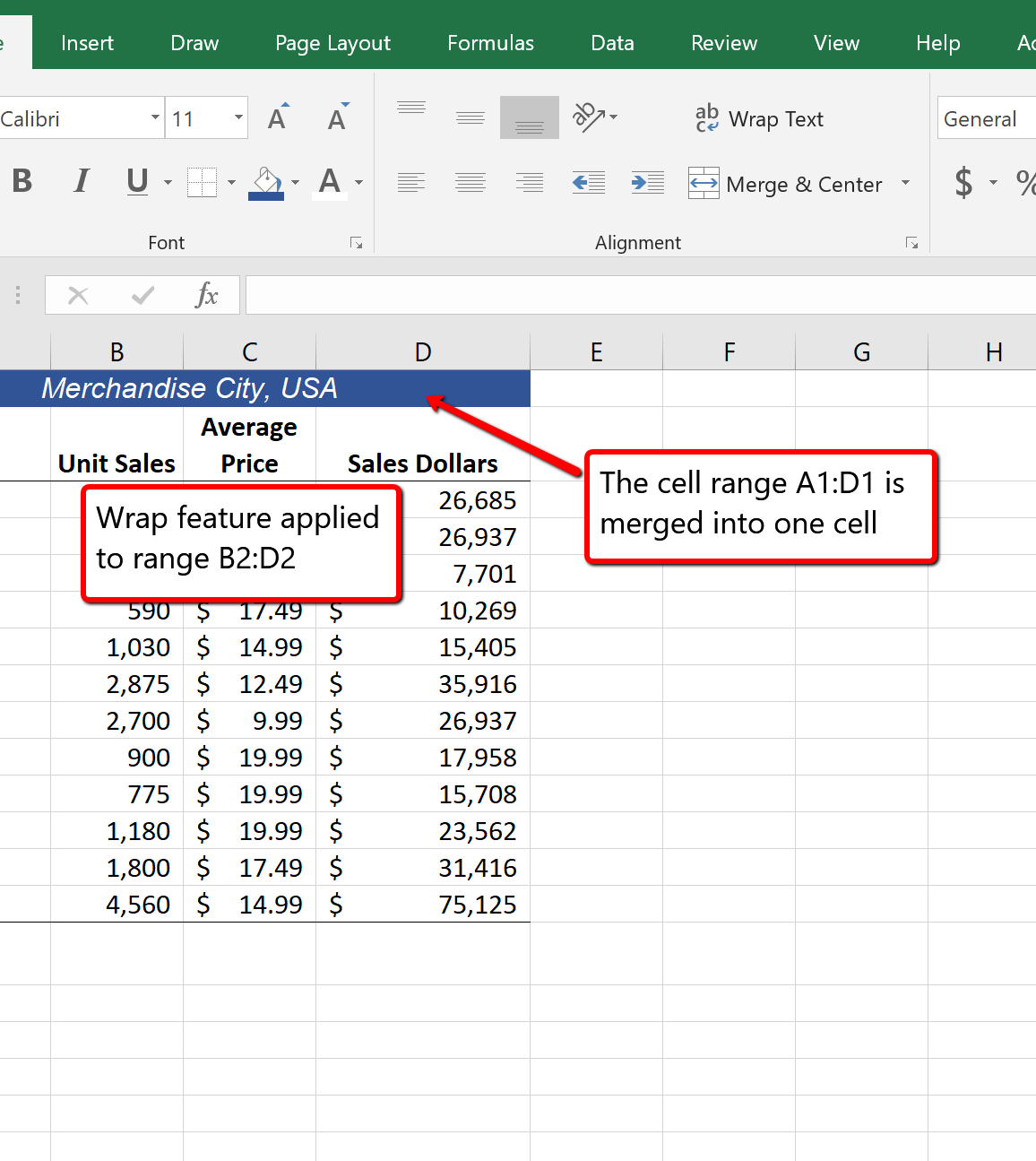

Figure 1.15 shows how your worksheet should appear after you have typed the column headings into Row 2. Notice that the word Price in cell location C2 is not visible. This is because the column is too narrow to fit the entry you typed. We will examine formatting techniques to correct this problem in the next section.

Column Headings

It is critical to include column headings that accurately describe the data in each column of a worksheet. In professional environments, you will likely be sharing Excel workbooks with coworkers. Good column headings reduce the chance of someone misinterpreting the data contained in a worksheet, which could lead to costly errors depending on your career.

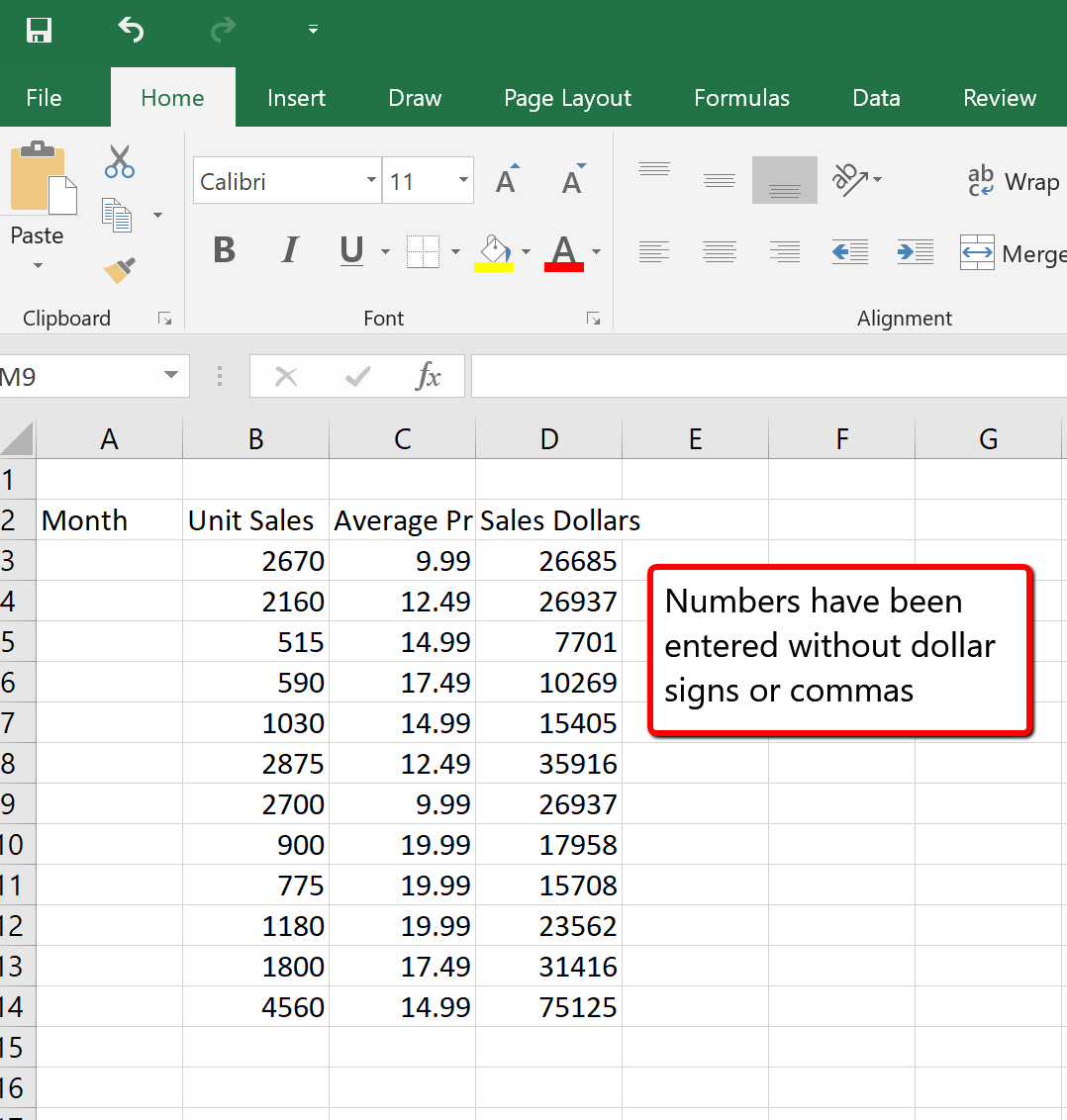

Avoid Formatting Symbols When Entering Numbers

When typing numbers into an Excel worksheet, it is best to avoid adding any formatting symbols such as dollar signs and commas. Although Excel allows you to add these symbols while typing numbers, it slows down the process of entering data. It is more efficient to use Excel’s formatting features to add these symbols to numbers after you type them into a worksheet.
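The same principle, storing plain numbers and applying symbols only for display, can be sketched outside Excel. The short Python example below is an illustration under that assumption. The function name and the values are hypothetical, and this is not how Excel implements its number formats.

```python
def as_currency(value):
    """Return a display string with a dollar sign, comma separators, and two decimals."""
    return f"${value:,.2f}"


# Hypothetical raw entries, typed without any formatting symbols.
raw_prices = [1234.5, 19.99, 250000]

for price in raw_prices:
    print(as_currency(price))

# The stored values are still plain numbers, so arithmetic works directly.
total = sum(raw_prices)
print(as_currency(total))
```

Because the symbols are added only at display time, the underlying values remain usable in calculations, which is the same reason to type unformatted numbers and format them afterward.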

Data Entry

It is very important to proofread your worksheet carefully, especially when you have entered numbers. Transposing numbers when entering data manually into a worksheet is a common error. For example, the number 563 could be transposed to 536. Such errors can seriously compromise the integrity of your workbook.
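As a rough aid to proofreading, a transposition of two adjacent digits can be checked mechanically when the correct value is available for comparison. The Python sketch below is a hypothetical helper and not an Excel feature. It also shows a well-known arithmetic property: swapping two adjacent digits always changes a number by a multiple of 9.

```python
def is_adjacent_transposition(entered, expected):
    """Return True if the two whole numbers differ only by two swapped adjacent digits."""
    a, b = str(entered), str(expected)
    if len(a) != len(b) or a == b:
        return False
    # Positions where the two digit strings disagree.
    diffs = [i for i in range(len(a)) if a[i] != b[i]]
    return (
        len(diffs) == 2
        and diffs[1] == diffs[0] + 1
        and a[diffs[0]] == b[diffs[1]]
        and a[diffs[1]] == b[diffs[0]]
    )


print(is_adjacent_transposition(536, 563))  # True
print((563 - 536) % 9 == 0)                 # True: the difference, 27, is a multiple of 9
print(is_adjacent_transposition(563, 573))  # False: a wrong digit, not a swap
```

A difference that is divisible by 9 only suggests a possible transposition. It does not prove one, so the entries still need to be checked against the source data.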

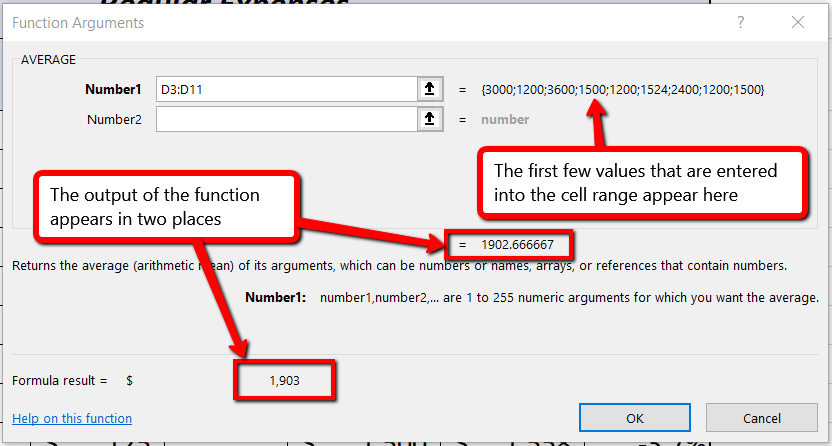

Figure 1.16 shows how your worksheet should appear after entering the data. Check your numbers carefully to make sure they are accurately entered into the worksheet.