17 Regression

Learning Outcomes

In this chapter, you will learn how to:

- Describe the concept of a linear equation, including slope and intercept

- Conduct a hypothesis test for a linear regression

- Compute a line of best fit

- Evaluate effect size for a linear regression

- Explain how regression is related to correlation and ANOVA

- Describe the concept of multiple regression

In the previous Unit, we learned about ANOVA, which involves a new way a looking at how our data are structured and the inferences we can draw from that. In the previous chapter, we learned about correlations, which analyze two variables at the same time to see if they systematically relate in a linear fashion. In this chapter, we will combine these two techniques in an analysis called simple linear regression, or regression for short. Regression uses the technique of variance partitioning from ANOVA to more formally assess the types of relationships looked at in correlations. Regression is the most general and most flexible analysis covered in this book, and we will only scratch the surface.

A major practical application of statistical methods is making predictions. Psychologists often call this kind of prediction regression. With correlation it did not matter which variable was the predictor variable or the predicted variable. But with prediction we have to decide which variable is the predictor and which variable is being predicted. The variable doing the predicting is called the predictor variable. The variable being predicted is called the criterion variable, also known as the predicted or outcome variable. In equations, the predictor variable is usually labeled X, and the criterion is labeled Y.

Again, the concepts in this chapter are directly related to correlation. This is because if two variables are correlated it means that we can predict one from the other. So if sleep the night before is correlated with happiness the next day, this means that we should be able, to some extent, predict how happy a person will be the next day from knowing how much sleep the person got the night before. The concepts in the chapter are also related to ANOVA as the goal of regression is the same as the goal of ANOVA: to take what we know about one variable (X) and use it to explain our observed differences in another variable (Y) - we are just using two continuous variables.

Line of Best Fit

In correlations, we referred to a linear trend in the data. That is, we assumed that there was a straight line we could draw through the middle of our scatterplot that would represent the relation between our two variables, X and Y. Regression involves solving for the equation of that line, which is called the Line of Best Fit.

The distances between the line of best fit and each individual data point go by two different names that mean the same thing: errors and residuals. The term “error” in regression is closely aligned with the meaning of error we've seen before (think standard error or sampling error); it does not mean that we did anything wrong, it simply means that there was some discrepancy or difference between what our analysis produced and the true value we are trying to get at it. The term residual is new to our study of statistics, and it takes on a very similar meaning in regression to what it means in everyday language: there is something left over. In regression, what is “left over” – that is, what makes up the residual – is an imperfection in our ability to predict values of the Y variable using our line. This definition brings us to one of the primary purposes of regression and the line of best fit: predicting scores.

Prediction

The goal of regression is the same as the goal of ANOVA: to take what we know about one variable (X) and use it to explain our observed differences in another variable (Y). In ANOVA, we talked about – and tested for – group mean differences, but in regression we do not have groups for our explanatory variable; we have a continuous variable, like in correlation. Because of this, our vocabulary will be a little bit different, but the process, logic, and end result are all the same. Instead of looking for differences in Y between levels of X, we are now looking to predict Y from values of X.

Regression Equation

The form of a simple linear regression looks just like it did in geometry:

[latex]\hat{Y}=bX+a[/latex]

Where b is the slope of the line, and a is the y-intercept. [latex]\hat{Y}[/latex] represents the value for [latex]Y[/latex] that we are predicting from the equation, and [latex]X[/latex] is the value that we would plug into this equation to predict [latex]Y[/latex].

To find the slope and the y-intercept, we use the following equations:

[latex]b=\frac{SP}{SS_X}[/latex]

[latex]a=M_Y-bM_X[/latex]

As you should recall from the previous chapters,

[latex]SP=\Sigma(X-M_X)(Y-M_Y)=\Sigma(XY)-\frac{(\Sigma X)(\Sigma Y)}{n}[/latex]

[latex]SS_X=\Sigma(X-M_X)^2=\Sigma(X^2)-\frac{(\Sigma X)^2}{n}[/latex]

[latex]M_Y=\frac{\Sigma Y}{n}[/latex]

[latex]M_X=\frac{\Sigma X}{n}[/latex]

Applied examples for using regression

Example 1: Businesses often have more applicants for a job than they have openings available, so they want to know who among the applicants is most likely to be the best employee. There are many criteria that can be used, but one is a personality test for conscientiousness, with the belief being that more conscientious (more responsible) employees are better than less conscientious employees. A business might give their employees a personality inventory to assess conscientiousness and existing performance data to look for a relation. In this example, we have known values of the predictor (X, conscientiousness) and outcome (Y, job performance), so we can estimate an equation for a line of best fit and see how accurately conscientiousness predicts job performance, then use this equation to predict future job performance of applicants based only on their known values of conscientiousness from personality inventories given during the application process.

Example 2: Assume a researcher is interested in examining whether SAT scores can be an accurate predictor of college GPA. In this case, SAT scores would be the predictor variable or X and college GPA would be the criterion variable or Y.

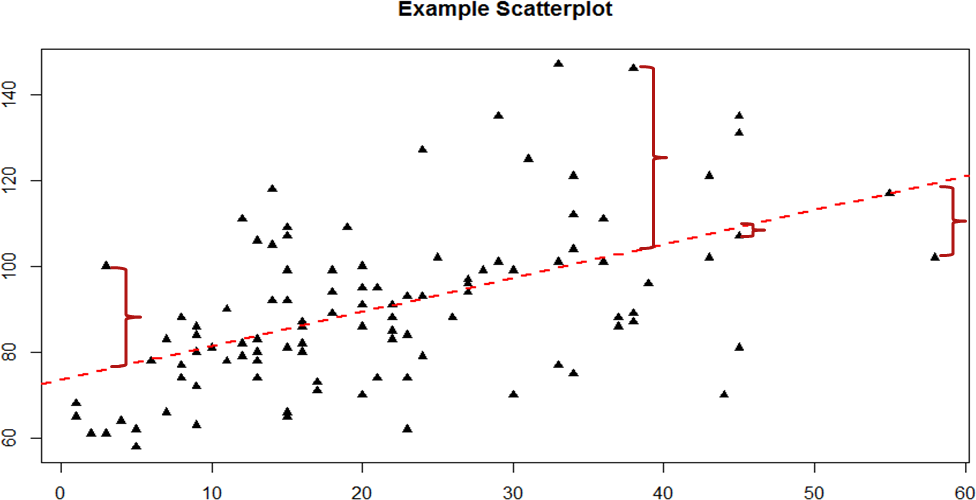

The key to assessing whether a linear regression works well is the difference between our observed and known Y values and our predicted Ŷ values. As mentioned in passing above, we use subtraction to find the difference between them (Y – Ŷ) in the same way we use subtraction for deviation scores and sums of squares. The value (Y – Ŷ) is our residual, which, as defined above, is how close our line of best fit is to our actual values. We can visualize residuals to get a better sense of what they are by creating a scatterplot and overlaying a line of best fit on it, as shown in Figure 1.

Figure 1. Scatterplot with line of best fit and residuals

We call this property of the line of best fit the Least Squares Error Solution. This term means that the solution – or equation – of the line is the one that provides the smallest possible value of the squared errors (squared so that they can be summed, just like in standard deviation) relative to any other straight line we could draw through the data.

Predicting Scores and Explaining Variance

We have now seen that the purpose of regression is twofold: we want to predict scores based on our line and, explain variance in our observed Y variable just like in ANOVA. These two purposes go hand in hand, and our ability to predict scores is literally our ability to explain variance. That is, if we cannot account for the variance in Y based on X, then we have no reason to use X to predict future values of Y.

We know that the overall variance in Y is a function of each score deviating from the mean of Y (as in our calculation of variance and standard deviation). So, just like the red brackets in figure 1 representing residuals, given as (Y – Ŷ), we can visualize the overall variance as each score’s distance from the overall mean of Y, given as (Y – MY), our normal deviation score. Thus, the residuals and the deviation scores are the same type of idea: the distance between an observed score and a given line, either the line of best fit that gives predictions or the line representing the mean that serves as a baseline. The difference between these two values, which is the distance between the lines themselves, is our model’s ability to predict scores above and beyond the baseline mean; that is, it is our model's ability to explain the variance we observe in Y based on values of X. If we have no ability to explain variance, then our line will be flat (the slope will be 0.00) and will be the same as the line representing the mean, and the distance between the lines will be 0.00 as well.

We now have three pieces of information: the distance from the observed score to the mean, the distance from the observed score to the prediction line, and the distance from the prediction line to the mean. These are our three pieces of information needed to test our hypotheses about regression and to calculate effect sizes. They are our three Sums of Squares, just like in ANOVA. Our distance from the observed score to the mean is the Sum of Squares Total, which we are trying to explain. Our distance from the observed score to the prediction line is our Sum of Squares Error, or residual, which we are trying to minimize. Our distance from the prediction line to the mean is our Sum of Squares Model, which is our observed effect and our ability to explain variance. Each of these will go into the ANOVA table to calculate our test statistic.

ANOVA Table

Our ANOVA table in regression follows the exact same format as it did for ANOVA (hence the name). Our top row is our observed effect, our middle row is our error, and our bottom row is our total. The columns take on the same interpretations as well: from left to right, we have our sums of squares, our degrees of freedom, our mean squares, and our F statistic.

|

Source |

SS |

df |

MS |

F |

|

Model |

∑(Ŷ −MY)2 |

1 |

SSM/dfM |

MSM/MSE |

|

Error |

∑(Y − Ŷ)2 |

n-2 |

SSE/dfE |

|

|

Total |

∑(Y −MY)2 |

n-1 |

|

|

As with ANOVA, getting the values for the SS column is a straightforward but somewhat arduous process. First, you take the raw scores of X and Y and calculate the means, variances, and covariance using the sum of products table introduced in our chapter on correlations. Next, you use the variance of X and the covariance of X and Y to calculate the slope of the line, b, the formula for which is given above. After that, you use the means and the slope to find the intercept, a, which is given alongside b. After that, you use the full prediction equation for the line of best fit to get predicted Y scores (Ŷ) for each person. Finally, you use the observed Y scores, predicted Y scores, and mean of Y to find the appropriate deviation scores for each person for each sum of squares source in the table and sum them to get the Sum of Squares Model, Sum of Squares Error, and Sum of Squares Total. The other columns in the ANOVA table are all familiar. The degrees of freedom column still has n – 1 for our total, but now we have n – 2 for our error degrees of freedom and 1 for our model degrees of freedom; this is because simple linear regression only has one predictor, so our degrees of freedom for the model is always 1 and does not change. The total degrees of freedom must still be the sum of the other two, so our degrees of freedom error will always be n – 2 for simple linear regression. The mean square columns are still the SS column divided by the df column, and the test statistic F is still the ratio of the mean squares. Based on this, it is now explicitly clear that not only do regression and ANOVA have the same goal but they are, in fact, the same analysis entirely. The only difference is the type of data we feed into the predictor side of the equations: continuous for regression and categorical for ANOVA.

Hypothesis Testing in Regression

Regression, like all other analyses, will test a null hypothesis in our data. In regression, we are interested in predicting Y scores and explaining variance using a line, the slope of which is what allows us to get closer to our observed scores than the mean of Y can. Thus, our hypotheses concern the slope of the line, which is estimated in the prediction equation by b. Specifically, we want to test that the slope is not zero:

H0: There is no explanatory relationship between our variables

H0: ß = 0

HA: There is an explanatory relationship between our variables

HA: ß ≠ 0

or if directional - specify direction for relationship (positive or negative):

H0: ß < 0 and HA: ß > 0

H0: ß > 0 and HA: ß < 0

Note that the slope in our regression equation based on our sample is notated with a b, thus in our hypotheses it is notated with a beta, the Greek symbol corresponding to b. Just as we have been using mu to represent the population parameter in our hypotheses in prior units, we use beta to represent our population parameter here.

A non-zero slope indicates that we can explain values in Y based on X and therefore predict future values of Y based on X. Our alternative hypotheses are analogous to those in correlation: positive relations have values above zero, negative relationships have values below zero, and two-tailed tests are possible. Just like ANOVA, we will test the significance of this relationship using the F statistic calculated in our ANOVA table compared to a critical value from the F distribution table. Let’s take a look at an example and regression in action.

Example: Happiness and Well-Being

Researchers are interested in explaining differences in how happy people are based on how healthy people are. They gather data on each of these variables from 18 people and fit a linear regression model to explain the variance. We will follow the four-step hypothesis testing procedure to see if there is a relationship between these variables that is statistically significant.

Step 1: State the Hypotheses

The null hypothesis in regression states that there is no relationship between our variables. The alternative states that there is a relationship, but because our research description did not explicitly state a direction of the relationship, we will use a non-directional hypothesis.

H0: There is no explanatory relationship between health and happiness

H0: ß = 0

HA: There is an explanatory relationship between health and happiness

HA: ß ≠ 0

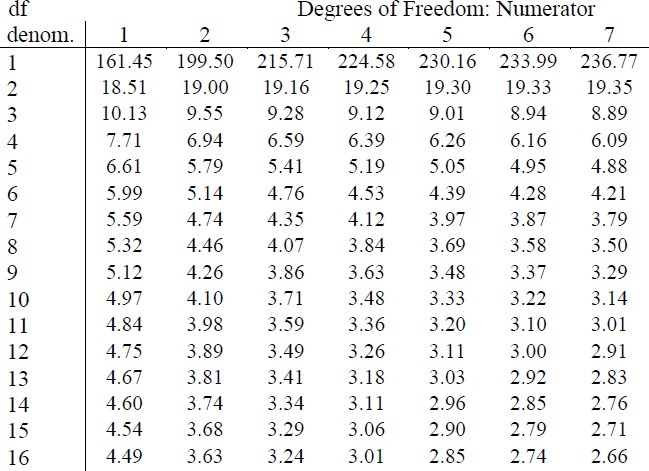

Step 2: Find the Critical Value

Because regression and ANOVA are the same analysis, our critical value for regression will come from the same place: the F distribution table, which uses two types of degrees of freedom. We saw above that the degrees of freedom for our numerator – the Model line – is always 1 in simple linear regression, and that the denominator degrees of freedom – from the Error line – is n – 2. In this instance, we have 18 people so our degrees of freedom for the denominator is 16. Going to our F table, we find that the appropriate critical value for 1 and 16 degrees of freedom is Fcrit = 4.49, shown below in figure 2.

Figure 2. Critical value from F distribution table

Step 3: Calculate the Test Statistic

The process of calculating the test statistic for regression first involves computing the parameter estimates for the line of best fit. To do this, we first calculate the means, standard deviations, and sum of products for our X and Y variables, as shown below.

|

X |

(X −MX) |

(X −MX)2 |

Y |

(Y −MY) |

(Y − ̅MY)2 |

(X −MX)(Y −MY) |

|

17.65 |

-2.13 |

4.53 |

10.36 |

-7.10 |

50.37 |

15.10 |

|

16.99 |

-2.79 |

7.80 |

16.38 |

-1.08 |

1.16 |

3.01 |

|

18.30 |

-1.48 |

2.18 |

15.23 |

-2.23 |

4.97 |

3.29 |

|

18.28 |

-1.50 |

2.25 |

14.26 |

-3.19 |

10.18 |

4.79 |

|

21.89 |

2.11 |

4.47 |

17.71 |

0.26 |

0.07 |

0.55 |

|

22.61 |

2.83 |

8.01 |

16.47 |

-0.98 |

0.97 |

-2.79 |

|

17.42 |

-2.36 |

5.57 |

16.89 |

-0.56 |

0.32 |

1.33 |

|

20.35 |

0.57 |

0.32 |

18.74 |

1.29 |

1.66 |

0.73 |

|

18.89 |

-0.89 |

0.79 |

21.96 |

4.50 |

20.26 |

-4.00 |

|

18.63 |

-1.15 |

1.32 |

17.57 |

0.11 |

0.01 |

-0.13 |

|

19.67 |

-0.11 |

0.01 |

18.12 |

0.66 |

0.44 |

-0.08 |

|

18.39 |

-1.39 |

1.94 |

12.08 |

-5.37 |

28.87 |

7.48 |

|

22.48 |

2.71 |

7.32 |

17.11 |

-0.34 |

0.12 |

-0.93 |

|

23.25 |

3.47 |

12.07 |

21.66 |

4.21 |

17.73 |

14.63 |

|

19.91 |

0.13 |

0.02 |

17.86 |

0.40 |

0.16 |

0.05 |

|

18.21 |

-1.57 |

2.45 |

18.49 |

1.03 |

1.07 |

-1.62 |

|

23.65 |

3.87 |

14.99 |

22.13 |

4.67 |

21.82 |

18.08 |

|

19.45 |

-0.33 |

0.11 |

21.17 |

3.72 |

13.82 |

-1.22 |

| totals/∑ | ||||||

|

356.02 |

0.00 |

76.14 |

314.18 |

0.00 |

173.99 |

58.29 |

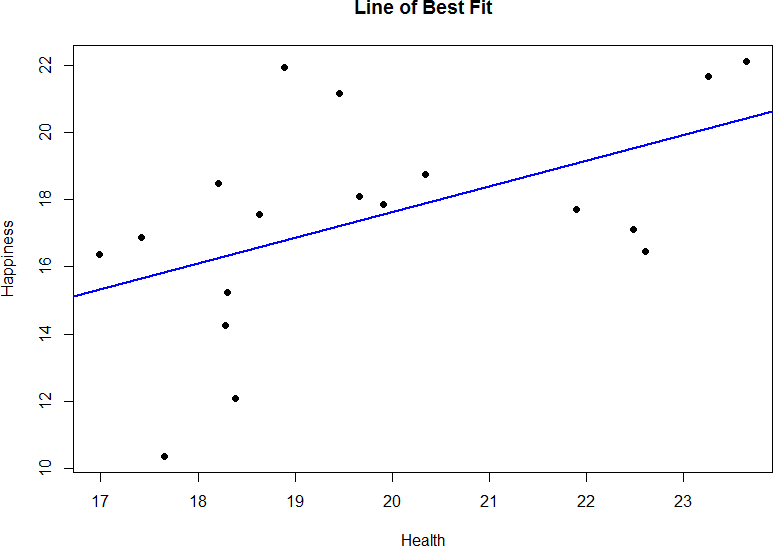

From the raw data in our X and Y columns, we find that the means are MX = 356.02/18 = 19.78 and MY = 314.18/18 = 17.45. The deviation scores for each variable sum to zero, so all is well there. The sums of squares for X and Y ultimately lead us to standard deviations of sX = 2.12 and sY = 3.20. Finally, our sum of products is 58.29, which gives us a covariance of covXY = 3.43, so we know our relationship will be positive. This is all the information we need for our equations for the line of best.

We can plot this relation in a scatterplot and overlay our line onto it, as shown in figure 3.

Figure 3. Health and happiness data and regression line.

We can use the line equation to find predicted values for each observation and use them to calculate our sums of squares model and error, but this is tedious to do by hand, so we will let the computer software do the heavy lifting in that column of our ANOVA table:

|

Source |

SS |

df |

MS |

F |

|

Model |

44.62 |

|

|

|

|

Error |

129.37 |

|

|

|

|

Total |

|

|

|

|

Now that we have these, we can fill in the rest of the ANOVA table. We already found our degrees of freedom in Step 2:

|

Source |

SS |

df |

MS |

F |

|

Model |

44.62 |

1 |

|

|

|

Error |

129.37 |

16 |

|

|

|

Total |

|

|

|

|

Our total line is always the sum of the other two lines, giving us:

|

Source |

SS |

df |

MS |

F |

|

Model |

44.62 |

1 |

|

|

|

Error |

129.37 |

16 |

|

|

|

Total |

173.99 |

17 |

|

|

Our mean squares column is only calculated for the model and error lines and is always our SS divided by our df, which is:

|

Source |

SS |

df |

MS |

F |

|

Model |

44.62 |

1 |

44.62 |

|

|

Error |

129.37 |

16 |

8.09 |

|

|

Total |

173.99 |

17 |

|

|

Finally, our F statistic is the ratio of the mean squares:

|

Source |

SS |

df |

MS |

F |

|

Model |

44.62 |

1 |

44.62 |

5.52 |

|

Error |

129.37 |

16 |

8.09 |

|

|

Total |

173.99 |

17 |

|

|

This gives us an obtained F statistic of 5.52, which we will now use to test our hypothesis.

Step 4: Make the Decision

We now have everything we need to make our final decision. Our obtained test statistic was Ftest = 5.52 and our critical value was Fcrit = 4.49. Since our obtained test statistic is greater than our critical value, we can reject the null hypothesis.

Effect Size

Effect size (r2)

As you should recall from the previous chapter, we can calculate r2 from the numbers that we have already calculated so far.

[latex]r=\frac{SP}{\sqrt{(SS_X)(SS_Y)}}[/latex]

[latex]r^2=r*r[/latex]

Now, for our example, using the data from Step 3:

[latex]r=\frac{58.29}{\sqrt{(76.14)(173.99)}}=\frac{58.29}{115.10}=0.51[/latex]

And, then:

[latex]r^2=.51^2=.26[/latex]

We are explaining 26% of the variance in happiness based on health, which is a large effect size (r2 uses the same effect size cutoffs as η2).

Accuracy in Prediction

We found a large, statistically significant predictive relationship between our variables, which is what we hoped for. However, if we want to use our estimated line of best fit for future prediction, we will also want to know how precise or accurate our predicted values are. What we want to know is the average distance from our predictions to our actual observed values, or the average size of the residual (Y − Ŷ). The average size of the residual is known by a specific name: the standard error of the estimate, s(Y− Ŷ). The formula is almost identical to our standard deviation formula, and it follows the same logic.

Standard Error of the Estimate

[latex]s_{(Y-\hat{Y})}=\sqrt{\frac{SS_{error}}{df}}=\sqrt{\frac{\Sigma(Y-\hat{Y})^2}{n-2}}[/latex]

Again, we'll let the computer do this one for us. But for our example s(Y− Ŷ) = 2.84. So on average, our predictions are just under 3 points away from our actual values. There are no specific cutoffs or guidelines for how big our standard error of the estimate can or should be; it is highly dependent on both our sample size and the scale of our original Y variable, so expert judgment should be used. In this case, the estimate is not that far off and can be considered reasonably precise.

Quick recap of regression (without the math)

Two variables of regression

1. Predictor (X)

2. Criterion (Y)

With correlation it did not matter which variable was the predictor variable or the criterion variable. But with prediction we have to decide which variable is being predicted from and which variable is being predicted. The variable being predicted from is called the predictor variable. The variable being predicted is called the criterion variable. In equations, the predictor variable is usually labeled X, and the criterion is labeled Y.

The Linear Prediction Rule: Ideally we want to make a prediction rule that is both simple and depends on every case for each prediction. In a linear prediction rule the formal name for the baseline number is the regression constant or just constant. It has the name constant because it is a fixed value that we always add in to the prediction.

The number we multiplied by the person's score on the predictor variable, b, is called the regression coefficient because a “coefficient” is a number we multiply by something.

Let's revisit example 2, predicting college GPA from SAT scores. For our SAT and GPA example, the rule might be “to predict a person’s graduating GPA, start with .3 and at the result of multiplying .004 by the person’s SAT scores”. So, the baseline number (a) would be .3 and the predictor value (b) is .004. If a person had an SAT of 600 we would predict the person would graduate with a GPA of 2.7. This idea is known as the linear prediction rule.

Criterion Variable (Ŷ)

The variable we are predicting in a regression equation is called the criterion variable. It is labeled as Ŷ. The mark above Y indicates that this variable is a predicted variable and is dependent on the value of X.

Slope of the Regression Line (b)

The steepness of the angle of the regression line, called its slope, is the amount that the line moves up for every unit it is moved across. In our SAT example the line moves up .004 on the GPA scale for every additional point on the SAT. In fact, the slope of the line is exactly b, the regression coefficient.

Intercept of the Regression Line (a)

The point at which the regression line crosses or intersects the vertical axis is called the intercept.

- The intercept is the predicted score on the criterion variable when the score on the predictor variable is 0. It turns out that the intercept is the same as the regression constant.

- The reason this works is the regression constant is the number we always add in, a kind of baseline number, the number we start with.

- It is reasonable that the best baseline number would be the number we predict from a predictor score of 0.

In the SAT example the line crosses the vertical axis at .3. That is, when a person has an SAT score of zero, they are predicted to have a college GPA .3.

Linear regression standardized coefficient (β)

This formula has the effect of changing the regular (unstandardized) regression coefficient (b), to a standardized regression coefficient (β) that shows the relationship between the predictor and criterion variables in terms of standard deviation units. When you run a regression using statistical software, you are able to get the unstandardized and standardized versions of the regression coefficients, that is you can get b and β for your predictor variable(s). This allows you to use more than one predictor variable and compare those that have a different range of original scores available (e.g., SAT scores and ACT scores).

Standardized Regression Coefficient

[latex]\beta=(b)\sqrt{\frac{SS_X}{SS_Y}}[/latex]

Multiple Regression and Other Extensions

Simple linear regression as presented here is only a stepping stone towards an entire field of research and application. Regression is an incredibly flexible and powerful tool, and the extensions and variations on it are far beyond the scope of this chapter (indeed, even entire books struggle to accommodate all possible applications of the simple principles laid out here). The next step in regression is to study multiple regression, which uses multiple X variables as predictors for a single Y variable at the same time. The math of multiple regression is very complex but the logic is the same: we are trying to use variables that are statistically significantly related to our outcome to explain the variance we observe in that outcome. (Luckily we have statistical programs that will do this math for us.) Other forms of regression include curvilinear models that can explain curves in the data rather than the straight lines used here, as well as moderation models that measure the relationships between two variables based on levels of a third. The possibilities are truly endless and offer a lifetime of discovery.

an analysis in which the predictor or independent variables (xs) are assumed to be related to the criterion or dependent variable (y) in such a manner that increases in an x variable result in consistent increases in the y variable. In other words, the direction and rate of change of one variable is constant with respect to changes in the other variable.

a variable used to estimate, forecast, or project future events or circumstances. This term sometimes is used interchangeably with independent variable.

the effect that one wants to predict or explain in correlational research.

A straight line that minimizes the distance between it and the data points in a scatter plot.

in regression analysis, the difference between the value of an empirical observation and the value predicted by a model.

the point at which either axis of a graph is intersected by a line plotted on the graph.

the steepness or slant of a line on a graph, measured as the change of value on the y-axis associated with a change of one unit of value on the x-axis.

in regression analysis, the principle that one should estimate the values of parameters iin a way that will minimize the squared error of predictions from the model.